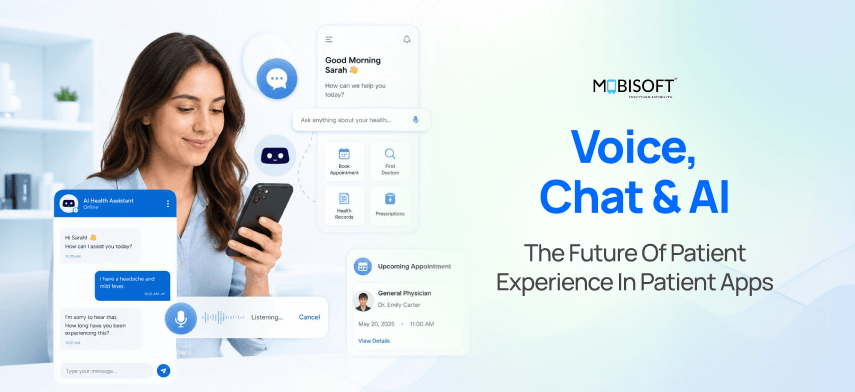

Most patients do not care about the technology behind their healthcare app. They care about one thing: did it actually help them?

That is the standard voice AI patient apps are now being held to. And increasingly, they are meeting it.

Think about what has changed. One in three American adults turned to an AI chatbot for health information in the past year. Tampa General Hospital cut patient wait times by 58% within two weeks of deploying a voice AI agent. Physicians using ambient AI clinical documentation tools are reclaiming 30 minutes every single day.

This is not a trend on the horizon. It is already in production.

This guide walks through what is actually working, what the evidence says, where the risks are, and how to build AI patient engagement the right way.

What This Guide Covers

- The Convergence: Why Voice, Chat, and AI Are Arriving Together in Patient Apps

- The Market Reality: Numbers, Adoption Curves, and Who Is Already Ahead

- The 10 Live Use Cases: Evidence, Deployments, Limitations, and Builder Notes

- The Technology Layer: NLP, LLMs, Voice Models, and How They Fit Together

- The Patient Experience Dimension: What Patients Actually Want from AI Health Apps

- Ambient AI and the Clinical Documentation Revolution

- The Reliability Problem: LLMs in Healthcare and the Accuracy Gap

- The Regulatory Landscape: FDA January 2026 Update, EU MDR, and State Laws

- Accessibility and Equity: Voice AI for Patients Who Need It Most

- The Implementation Framework: How to Build This Right

- The Next Five Years: Where the Technology Is Going

- Mobisoft Infotech: Building AI-Native Patient Apps

The Convergence: Why Voice, Chat, and AI Are Arriving Together

For years, healthcare technology moved in separate lanes. Voice recognition was one product. Chatbots were another. Clinical AI was something else entirely. None of them talked to each other particularly well.

That changed around 2025.

Three technologies matured at roughly the same time:

- Voice models accurate enough to handle medical terminology and accented speech

- Large language models capable of genuine clinical reasoning

- NLP healthcare frameworks that make conversations feel less like querying a search engine and more like talking to someone who actually knows medicine

The result is something qualitatively different from anything that came before. A chat AI health app that understands what a patient means, not just what they typed. A voice AI healthcare tool that can navigate a complex medication question without putting anyone on hold.

Here is why the timing matters. Each technology made the others better:

- Better voice recognition meant patients could speak naturally, giving LLMs richer input to reason with

- Better LLMs meant chat interfaces could handle real clinical questions, not just appointment booking

- Better conversational design meant patients actually used these tools instead of abandoning them after one session

Healthcare has historically been slow to adopt. As of early 2025, only 19% of US medical practices used any form of chatbot or virtual assistant for patient communication. That number is moving fast now. The gap between early movers and late adopters is already widening.

The practices building conversational AI capabilities today are not just adding a feature. They are establishing an infrastructure advantage that compounds over time. Organisations investing in healthcare mobile app development that embeds voice and conversational AI from the ground up are building on a foundation that will be very difficult for late movers to replicate.

The Market Reality: Numbers, Adoption Curves, and Who Is Already Ahead

The numbers here are worth sitting with for a moment.

The global conversational AI market in healthcare was valued at USD 13.68 billion in 2024 and is projected to reach USD 106.67 billion by 2033. The voice AI healthcare segment alone is forecast to grow at a 37.85% CAGR through 2035. That is the highest growth rate of any healthcare AI sub-segment right now.
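The two growth figures are not directly comparable, because they describe different segments over different end years. As a quick sanity check, the overall-market endpoints quoted above (USD 13.68 billion in 2024, USD 106.67 billion in 2033) imply a compound annual growth rate of roughly 25.6%, which puts the 37.85% voice AI figure in context. A minimal Python sketch of that arithmetic:

```python
def implied_cagr(start_value: float, end_value: float, years: float) -> float:
    """Compound annual growth rate implied by two endpoint values."""
    if start_value <= 0 or end_value <= 0 or years <= 0:
        raise ValueError("values and years must be positive")
    # CAGR = (end / start) ** (1 / years) - 1
    return (end_value / start_value) ** (1 / years) - 1


# Overall-market endpoints quoted in the text: 2024 -> 2033 is 9 compounding years.
overall = implied_cagr(13.68, 106.67, 2033 - 2024)
print(f"Implied overall CAGR 2024-2033: {overall:.1%}")  # -> "Implied overall CAGR 2024-2033: 25.6%"
```

The same function can be pointed at any other pair of endpoints a market report quotes, which makes it easy to spot growth claims that do not agree with their own headline numbers.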

These projections are not pure speculation. They are being pulled forward by real deployments with published outcomes.

Who Is Already Seeing Results?

Tampa General Hospital deployed voice AI agents in late 2025 and saw a 58% reduction in patient wait times within two weeks. UW Health ran a randomised trial on ambient AI clinical documentation and found 30 minutes saved per provider per day, alongside measurable burnout reduction. Apollo Hospitals reported a 46% jump in provider productivity after deploying voice AI for patient interactions.

On the patient side, one in three American adults turned to an AI chatbot for health information in the past year. The adoption curve is steep and accelerating.

What Do These Signals Mean for Builders?

AI patient engagement applications account for the largest share of the entire conversational AI healthcare market in 2026. This is not a niche feature. It is where the market is concentrating.

Organisations that have not yet moved will not stay safe by standing still. The gap between first movers and everyone else is actively compounding.

The 10 Live Use Cases: Evidence, Deployments, and What Builders Must Get Right

This is where the rubber meets the road. Each use case below has live deployments, real outcome data, and at least one thing builders consistently get wrong.

Conversational Appointment Scheduling

No hold queues. No IVR menus. Patients describe what they need in plain language, and the system books, reschedules, or cancels in real time.

Tampa General's voice AI agent handled appointment scheduling as its first rollout function, producing a 58% reduction in wait times and a 21% increase in scheduled appointments within two weeks. Voice bots for appointment confirmations also reduce no-show rates by up to 25%.

The builder mistake here is almost always EHR integration. A scheduling AI that cannot pull real-time slot availability from the source system is not a scheduling AI. It is a very expensive voicemail.
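One common way to read live availability is a FHIR R4 `Slot` search against the EHR. The sketch below assumes the EHR exposes a FHIR R4 API (the base URL and schedule ID are hypothetical placeholders) and that the server supports the standard `schedule`, `status`, and `start` search parameters on `Slot`. Authentication, paging, and vendor-specific extensions are deliberately left out.

```python
from urllib.parse import urlencode

# Hypothetical FHIR R4 endpoint; substitute your EHR vendor's base URL.
FHIR_BASE = "https://ehr.example.org/fhir"


def free_slot_query(schedule_id: str, earliest: str, latest: str) -> str:
    """Build a Slot search for free slots on one schedule within a time window."""
    params = [
        ("schedule", f"Schedule/{schedule_id}"),
        ("status", "free"),
        ("start", f"ge{earliest}"),  # start >= earliest
        ("start", f"lt{latest}"),    # start < latest
        ("_sort", "start"),
    ]
    return f"{FHIR_BASE}/Slot?{urlencode(params)}"


def free_slot_starts(bundle: dict) -> list[str]:
    """Extract start times of free slots from a searchset Bundle."""
    starts = []
    for entry in bundle.get("entry", []):
        resource = entry.get("resource", {})
        if resource.get("resourceType") == "Slot" and resource.get("status") == "free":
            starts.append(resource["start"])
    return sorted(starts)


# Usage with a hand-written sample Bundle (a real one comes back from the GET request).
sample = {
    "resourceType": "Bundle",
    "type": "searchset",
    "entry": [
        {"resource": {"resourceType": "Slot", "status": "free", "start": "2026-03-02T10:00:00Z"}},
        {"resource": {"resourceType": "Slot", "status": "busy", "start": "2026-03-02T09:30:00Z"}},
        {"resource": {"resourceType": "Slot", "status": "free", "start": "2026-03-02T09:00:00Z"}},
    ],
}
print(free_slot_query("123", "2026-03-02T09:00:00Z", "2026-03-03T00:00:00Z"))
print(free_slot_starts(sample))
```

Because the query runs at the moment the patient asks, the voice agent only offers slots the source system currently reports as free, not a cached copy that may already be stale.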

Voice-Based Symptom Triage and Care Navigation

Patients describe symptoms conversationally. The AI assesses urgency and recommends the right care pathway, whether that is self-care, a telehealth appointment, urgent care, or emergency services.

Boston Children's Hospital deployed Buoy Health for AI-driven triage with clinical-grade question flows. A BMC Health Services Research study found that AI-assisted triage in a paediatric hospital reduced median waiting time from nearly two hours to under 25 minutes.

The critical builder note: voice AI triage systems need a mandatory escalation pathway for anything flagged as potentially serious. The FDA treats symptom checkers that make specific diagnostic recommendations as Software as a Medical Device (SaMD). Build accordingly.
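The escalation requirement is easier to enforce when it lives outside the model. A minimal sketch, assuming an LLM or other classifier has already proposed a care pathway: a deterministic red-flag screen that can only raise urgency, never lower it, and that forces human review whenever it fires. The phrase list and the "urgent care and above always gets a human" rule are illustrative assumptions, not clinical guidance, and plain substring matching is far too naive for production.

```python
from enum import IntEnum


class Pathway(IntEnum):
    # Ordered by urgency so max() picks the most urgent pathway.
    SELF_CARE = 0
    TELEHEALTH = 1
    URGENT_CARE = 2
    EMERGENCY = 3


# Illustrative red-flag phrases only; a real list must come from clinical governance.
RED_FLAGS = {
    "chest pain": Pathway.EMERGENCY,
    "can't breathe": Pathway.EMERGENCY,
    "face drooping": Pathway.EMERGENCY,
    "high fever": Pathway.URGENT_CARE,
}


def triage(transcript: str, model_pathway: Pathway) -> tuple[Pathway, bool]:
    """Combine the model's suggestion with a deterministic red-flag screen.

    Returns (final pathway, whether a human clinician must review it).
    """
    text = transcript.lower()
    flagged = [level for phrase, level in RED_FLAGS.items() if phrase in text]
    final = max([model_pathway, *flagged])  # red flags can only escalate
    needs_human = bool(flagged) or final >= Pathway.URGENT_CARE
    return final, needs_human


print(triage("I have chest pain since this morning", Pathway.SELF_CARE))
```

Keeping the screen rule-based means its behaviour can be reviewed and tested by clinicians line by line, independently of whichever model sits upstream.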

Ambient AI Clinical Documentation

This one does not involve the patient directly. But it may do more for patient experience in healthcare than any tool the patient actually touches.

Ambient AI clinical documentation systems passively listen to patient-clinician conversations and auto-generate structured clinical notes. The clinician stops typing mid-conversation. They look up. They actually listen.

The UW Health randomised trial published in NEJM AI found 30 minutes saved per provider per day and a clinically meaningful reduction in burnout scores. Over 800 physicians at UW Health now use the technology across Wisconsin and Illinois.

The builder note: patient consent before recording is non-negotiable. Build it into the start of every clinical encounter, not buried in an EHR consent form nobody reads.

Conversational Medication Management

AI chat and voice AI patient apps send medication reminders, answer side effect questions, process refill requests, and flag non-adherence to the care team. All through natural conversation, not form-filling.

This is the highest-ROI voice application for chronic care engagement. The common builder mistake is designing the reminder as a one-way alert. When a patient says, "I stopped taking it because of the nausea," the system needs to route that response to a pharmacist or clinician.

One hard boundary: controlled substance refills require DEA-compliant pharmacist verification. You cannot and should not automate that step in a voice AI workflow.
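A minimal sketch of two-way reminder handling, assuming replies arrive as transcribed text. The keyword cues, destination names, and the `controlled_substances` set are hypothetical placeholders; a production system would use a validated classifier and the pharmacy system's own controlled-substance flags. What it shows is the routing shape: side-effect and non-adherence replies leave the automated loop, and controlled-substance refills never enter it.

```python
from dataclasses import dataclass


@dataclass
class Route:
    destination: str  # hypothetical queues: "log_only", "pharmacist", "care_team", "refill_queue"
    reason: str


# Hypothetical keyword cues; stand-ins for a validated reply classifier.
SIDE_EFFECT_CUES = ("nausea", "dizzy", "rash", "side effect", "made me sick")
STOPPED_CUES = ("stopped taking", "not taking", "quit taking", "skipped")


def route_reminder_reply(reply: str) -> Route:
    """Decide where a patient's free-text reply to a reminder should go."""
    text = reply.lower()
    if any(cue in text for cue in SIDE_EFFECT_CUES):
        return Route("pharmacist", "possible side effect reported")
    if any(cue in text for cue in STOPPED_CUES):
        return Route("care_team", "possible non-adherence")
    return Route("log_only", "no concern detected")


def route_refill_request(medication: str, controlled_substances: set[str]) -> Route:
    """Controlled-substance refills always go to a pharmacist for verification."""
    if medication.lower() in controlled_substances:
        return Route("pharmacist", "controlled substance: pharmacist verification required")
    return Route("refill_queue", "eligible for automated refill workflow")


print(route_reminder_reply("I stopped taking it because of the nausea"))
```

The side-effect check runs before the non-adherence check on purpose: a reply like the one above mentions both, and the clinically richer signal should decide the destination.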

Mental Health and Wellbeing Chat Support

Wysa has logged over 400 million conversations globally. Woebot, Ada Health, and Infermedica are all in production across multiple markets. The chat AI health app category for mental health is one of the fastest-growing in digital health.

The evidence is nuanced, though. A 2026 meta-analysis found 92% initial uptake for mental health apps, but real-world engagement in naturalistic settings can drop as low as 2%. The gap between trial participants and the general population is wide.

Two things builders must get right here. Anonymous access by default lowers the barrier at the exact moment someone needs it most. And crisis detection without a tested human escalation pathway is not a mental health product. It is a liability. Teams approaching mental health app development in this space must treat crisis escalation design as the first requirement, not an afterthought bolted on before launch.

Patient Intake and Pre-Visit Information Collection

Conversational intake collects symptoms, history, medications, and insurance information through adaptive dialogue rather than static forms. Patients complete significantly more intake information when the format feels like a conversation.

The hidden failure mode here is a parallel data problem. Conversational intake that does not write back directly to the clinical record creates two versions of the patient. That is worse than no intake tool at all. EHR integration is not optional.
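One way to avoid the parallel record is to emit intake answers as a standard resource the EHR can store directly. A minimal sketch, assuming the EHR accepts FHIR R4 writes (many restrict this, so check vendor support) and that the conversation layer has already mapped each answer to a question `linkId`. The questionnaire URL, link IDs, and patient ID below are hypothetical; the resulting resource would then be sent with a `POST` to the server's `QuestionnaireResponse` endpoint.

```python
import json
from datetime import datetime, timezone


def to_questionnaire_response(patient_id: str, questionnaire_url: str,
                              answers: dict[str, tuple[str, str]]) -> dict:
    """Map answers collected in conversation to a FHIR R4 QuestionnaireResponse.

    `answers` maps linkId -> (question text, patient's answer as a string).
    """
    return {
        "resourceType": "QuestionnaireResponse",
        "questionnaire": questionnaire_url,
        "status": "completed",
        "subject": {"reference": f"Patient/{patient_id}"},
        "authored": datetime.now(timezone.utc).isoformat(),
        "item": [
            {"linkId": link_id, "text": text, "answer": [{"valueString": value}]}
            for link_id, (text, value) in answers.items()
        ],
    }


resource = to_questionnaire_response(
    "123",
    "https://example.org/Questionnaire/pre-visit-intake",  # hypothetical questionnaire
    {
        "chief-complaint": ("What brings you in today?", "Knee pain for two weeks"),
        "current-meds": ("Which medications do you take?", "Metformin 500 mg twice daily"),
    },
)
print(json.dumps(resource, indent=2))
```

Everything is stored as `valueString` here for brevity; a real mapping would use coded answer types where the questionnaire defines them, so the clinical record stays structured.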

Personalised Health Education and Follow-Up

Post-visit care instructions delivered through LLM patient app interfaces that patients can actually ask questions back to. Not a PDF. Not a generic web link.

The message "Based on your appointment today, here is what Dr. Singh recommended about your diet" is more effective and safer than "Here is general information about type 2 diabetes management." Patient education generated by an LLM must reference the patient's specific care plan, not generic condition content drawn from the model's parameters.

Multilingual Patient Communication

Multilingual AI patient engagement removes the language barrier that causes miscommunication for millions of non-English-speaking patients every year.

A paediatric health system case documented by Parloa found that families who previously struggled with English-language systems could access appointment updates and care guidance in their native language, producing better engagement and treatment compliance across diverse populations.

The builder note: translated text is not enough. Voice persona design needs to be culturally appropriate for the target community. Test with native speakers, not just bilingual staff.

Remote Patient Monitoring with Conversational Check-Ins

Instead of asking chronic disease patients to manually log data into a tracking app, voice AI patient apps conduct regular conversational check-ins. The patient describes how they feel. The AI interprets and acts.

Voice-based check-ins achieve significantly higher completion rates than text-based symptom logging for elderly patients, exactly the population carrying the highest chronic disease burden.

The non-negotiable builder requirement: any response in a predefined clinical concern category (chest pain, respiratory distress, or blood glucose above threshold) must immediately create a care team task.
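A minimal sketch of that rule, assuming each check-in yields a transcript plus an optional glucose reading. The phrase list, the 250 mg/dL threshold, and the `CareTeamTask` shape are illustrative assumptions; real thresholds are set per patient by the care team, and the task would be written to whatever work queue the clinic already uses.

```python
from dataclasses import dataclass
from typing import Optional

# Illustrative values only; thresholds must be set per patient by the care team.
GLUCOSE_ALERT_MG_DL = 250
CONCERN_PHRASES = {
    "chest pain": "chest pain",
    "short of breath": "respiratory distress",
    "can't catch my breath": "respiratory distress",
}


@dataclass
class CareTeamTask:
    patient_id: str
    category: str
    detail: str
    priority: str = "urgent"


def review_check_in(patient_id: str, transcript: str,
                    glucose_mg_dl: Optional[float] = None) -> list[CareTeamTask]:
    """Return one care-team task for every predefined concern found in a check-in."""
    tasks = []
    text = transcript.lower()
    for phrase, category in CONCERN_PHRASES.items():
        if phrase in text:
            tasks.append(CareTeamTask(patient_id, category, f'patient said "{phrase}"'))
    if glucose_mg_dl is not None and glucose_mg_dl > GLUCOSE_ALERT_MG_DL:
        tasks.append(CareTeamTask(patient_id, "blood glucose above threshold",
                                  f"{glucose_mg_dl} mg/dL > {GLUCOSE_ALERT_MG_DL} mg/dL"))
    return tasks


print(review_check_in("p1", "Feeling okay, a bit tired", 310))
```

The function returns tasks rather than a yes/no flag, so a single check-in that mentions chest pain and also reports high glucose produces two separate, individually trackable items.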

Diagnostic Support and Vocal Biomarker Analysis

The most experimental use case on this list, and the one with the highest long-term potential.

Voice AI analyses the acoustic properties of a patient's voice as a biomarker for conditions including depression, heart failure, respiratory disease, and cognitive decline. A 2025 prehospital stroke assessment study found that a voice-guided AI system correctly identified 84% of individual stroke signs during prehospital assessments.

Vocal biomarker analysis remains investigational in most jurisdictions. Builders entering this space in 2026 need regulatory counsel before launch and rigorous validation across diverse demographic populations. Accuracy varies significantly by race, age, and sex. A model trained on a narrow population will produce systematically biased results on everyone outside it.
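The bias point can be checked mechanically by breaking accuracy down by demographic subgroup instead of reporting one headline number. A minimal sketch, assuming you hold labelled validation records of the form (group, predicted label, clinically confirmed label). The `min_accuracy` and `min_n` defaults are placeholders, not regulatory thresholds.

```python
from collections import defaultdict


def accuracy_by_group(records, min_accuracy=0.8, min_n=30):
    """Per-subgroup accuracy of a model against clinical ground truth.

    `records` is an iterable of (group, predicted_label, true_label).
    Returns {group: (accuracy, n, flagged)}, where `flagged` is True when the
    group falls below `min_accuracy` or has too few samples to judge.
    """
    correct = defaultdict(int)
    total = defaultdict(int)
    for group, predicted, actual in records:
        total[group] += 1
        correct[group] += int(predicted == actual)
    report = {}
    for group, n in total.items():
        acc = correct[group] / n
        report[group] = (acc, n, acc < min_accuracy or n < min_n)
    return report


records = [("A", 1, 1), ("A", 0, 0), ("B", 1, 0), ("B", 1, 1)]
print(accuracy_by_group(records, min_n=1))
```

Flagging small groups as well as weak ones matters: a subgroup with too few validation samples is not evidence that the model works there, only that nobody has checked.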

The Technology Layer: NLP, LLMs, Voice Models, and How They Fit Together

Most conversational AI patient app development projects fail not because the AI model is wrong. They fail because the architecture around it is poorly designed.

Here is how the components actually fit together.

The Five Layers Every Conversational Health AI Needs

Speech recognition (ASR) is the entry point. Tools like OpenAI Whisper, Deepgram, and Google Speech-to-Text convert patient speech to text. The healthcare-specific requirement here is medical vocabulary tuning. Standard ASR models miss medication names, anatomical terms, and clinical shorthand with surprising regularity. HIPAA-compliant deployment, either cloud or on-premise, is mandatory.

Natural language understanding (NLU) sits beneath the surface. This is what lets the system understand what a patient actually means, not just what they literally said. Clinical NLU models like BioBERT and ClinicalBERT significantly outperform general models on medical text. Fine-tuning on your specific patient population's language patterns matters more than most builders expect.

The LLM reasoning layer is where general models such as GPT, Claude, and Llama, or domain-specific options such as Med-PaLM and MedGemma, sit. This is what generates contextually appropriate, medically informed responses across multi-turn conversations. Domain-specific healthcare LLMs consistently outperform general models on clinical benchmarks. John Snow Labs reports that its Healthcare LLMs currently rank first on 12 of 13 clinical benchmarks against GPT, Gemini, and Claude.

Clinical knowledge grounding is the layer most builders underinvest in. This is where validated medical databases such as First Databank, Lexicomp, and UpToDate anchor LLM responses in verified clinical information. Without this layer, the LLM generates responses from its training parameters alone. That is not safe for any clinical information delivery.

Text-to-speech (TTS) closes the loop for voice interactions. ElevenLabs, OpenAI TTS, and Google WaveNet all produce natural-sounding speech. The healthcare-specific consideration is voice persona design. A voice optimised for retail customer service is not appropriate for a patient managing a chronic illness at midnight.
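The five layers can be expressed as narrow interfaces so that each vendor (ASR, NLU model, knowledge base, LLM, TTS engine) can be swapped without touching the others. A minimal sketch using `typing.Protocol`; the class and method names are hypothetical, and the `Fake*` stubs stand in for real services. Note that in the data flow the grounding layer runs before the LLM, so the model reasons over retrieved evidence rather than its parameters alone.

```python
from dataclasses import dataclass
from typing import Protocol


class SpeechToText(Protocol):
    def transcribe(self, audio: bytes) -> str: ...

class Understanding(Protocol):
    def parse(self, text: str) -> dict: ...

class KnowledgeBase(Protocol):
    def retrieve(self, query: str) -> list[str]: ...

class Reasoner(Protocol):
    def respond(self, intent: dict, evidence: list[str]) -> str: ...

class TextToSpeech(Protocol):
    def synthesize(self, text: str) -> bytes: ...


@dataclass
class VoiceTurn:
    asr: SpeechToText
    nlu: Understanding
    kb: KnowledgeBase
    llm: Reasoner
    tts: TextToSpeech

    def handle(self, audio: bytes) -> bytes:
        text = self.asr.transcribe(audio)           # speech -> text
        intent = self.nlu.parse(text)               # what the patient means
        evidence = self.kb.retrieve(text)           # validated clinical content
        reply = self.llm.respond(intent, evidence)  # reasoning over that evidence
        return self.tts.synthesize(reply)           # text -> speech


# Hypothetical stubs so the pipeline runs end to end without any vendor.
class FakeASR:
    def transcribe(self, audio: bytes) -> str:
        return audio.decode("utf-8")

class FakeNLU:
    def parse(self, text: str) -> dict:
        return {"intent": "medication_question", "text": text}

class FakeKB:
    def retrieve(self, query: str) -> list[str]:
        return [f"(validated snippet for: {query})"]

class FakeLLM:
    def respond(self, intent: dict, evidence: list[str]) -> str:
        return evidence[0] if evidence else "Let me connect you with a clinician."

class FakeTTS:
    def synthesize(self, text: str) -> bytes:
        return text.encode("utf-8")


turn = VoiceTurn(FakeASR(), FakeNLU(), FakeKB(), FakeLLM(), FakeTTS())
print(turn.handle(b"What are the side effects of metformin?").decode())
```

Because each layer is only an interface, swapping one ASR vendor for another, or adding medical vocabulary tuning, touches one class and leaves the rest of the turn untouched.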

The Three LLM Integration Patterns

Choosing the wrong one for your use case is expensive.

RAG (Retrieval-Augmented Generation):

The LLM retrieves validated clinical content before generating a response. Best for health education, medication information, and symptom guidance. Reduces hallucination risk significantly.
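A toy version of the RAG pattern, meant to show the control flow rather than production retrieval. It assumes a small in-memory store of validated snippets (the IDs and text are placeholders) and ranks them by shared words instead of embeddings. The important behaviour is the fallback: when nothing validated matches, the function returns `None` so the caller can hand off to a human instead of letting the model answer from its parameters.

```python
import re
from typing import Optional

# Stand-in for a validated clinical content store; IDs and text are placeholders.
VALIDATED_SNIPPETS = {
    "edu-001": "Take this medication with food to reduce stomach upset.",
    "edu-002": "Call your care team if you notice swelling in your ankles.",
}


def tokens(text: str) -> set[str]:
    return set(re.findall(r"[a-z]+", text.lower()))


def retrieve(question: str, k: int = 2, min_overlap: int = 2) -> list[tuple[str, str]]:
    """Rank snippets by shared-word count; a toy substitute for vector search."""
    q = tokens(question)
    scored = []
    for doc_id, text in VALIDATED_SNIPPETS.items():
        overlap = len(q & tokens(text))
        if overlap >= min_overlap:
            scored.append((overlap, doc_id, text))
    scored.sort(reverse=True)
    return [(doc_id, text) for _, doc_id, text in scored[:k]]


def build_grounded_prompt(question: str) -> Optional[str]:
    hits = retrieve(question)
    if not hits:
        return None  # nothing validated to ground on: hand off instead of guessing
    sources = "\n".join(f"[{doc_id}] {text}" for doc_id, text in hits)
    return (
        "Answer using ONLY the sources below and cite their IDs. "
        "If they do not answer the question, say so and offer a clinician.\n"
        f"Sources:\n{sources}\nQuestion: {question}"
    )


print(build_grounded_prompt("Should I take my medication with food?"))
```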

Fine-Tuned Clinical LLM:

A base model trained on institution-specific clinical data. Best for EHR summarisation and population-specific care guidance. Requires significant training data and ongoing monitoring.

Agentic AI:

LLMs that do not just respond but act. Scheduling appointments, processing refill requests, and coordinating follow-up. Production-ready for administrative actions in 2026. Not yet appropriate for autonomous clinical ordering.
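The boundary between administrative and clinical actions can be enforced at the dispatch layer rather than in the prompt. A minimal sketch, assuming the LLM emits tool calls as a name plus keyword arguments; the tool names and handlers are hypothetical. Only allowlisted administrative tools execute, clinical actions are diverted to clinician review, and anything unknown is rejected.

```python
from typing import Callable


# Hypothetical administrative handlers the agent may execute directly.
def book_appointment(patient_id: str, slot_id: str) -> str:
    return f"booked {slot_id} for {patient_id}"


def request_refill(patient_id: str, rx_id: str) -> str:
    return f"refill request {rx_id} queued for {patient_id}"


ALLOWED_TOOLS: dict[str, Callable[..., str]] = {
    "book_appointment": book_appointment,
    "request_refill": request_refill,
}

# Clinical actions the model may propose but never execute on its own.
CLINICIAN_ONLY = {"order_lab", "prescribe", "change_dose"}


def dispatch(tool_name: str, **kwargs) -> str:
    """Execute an LLM-proposed tool call only if it is on the administrative allowlist."""
    if tool_name in CLINICIAN_ONLY:
        return f"'{tool_name}' routed to clinician review; not executed"
    handler = ALLOWED_TOOLS.get(tool_name)
    if handler is None:
        return f"unknown tool '{tool_name}' rejected"
    return handler(**kwargs)


print(dispatch("book_appointment", patient_id="p1", slot_id="s9"))
print(dispatch("order_lab", test="HbA1c"))
```

An allowlist fails closed: a new or hallucinated tool name is rejected by default instead of being executed because nobody thought to block it.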

The One Architectural Rule That Overrides Everything Else

Every response that reaches clinical decision territory needs a human review pathway. Not as a fallback. As a design requirement. NLP healthcare systems that route around human oversight to cut operational costs are the ones that eventually produce the adverse event that costs far more than any efficiency savings.

The Patient Experience Dimension: What Patients Actually Want

Here is something the technology teams often miss.

Patients do not evaluate healthcare tools by their underlying architecture. They evaluate them by one question: did this actually help me, or did it waste my time?

The patient calling at 11 pm about a new symptom is not impressed by the model powering the chatbot. They want a direct answer. The patient managing type 2 diabetes does not want another symptom logging form. They want to describe how they feel and have someone, or something, actually respond to that.

These are not exotic demands. They are basic human expectations that the best voice AI patient apps are now meeting.

What The Research Shows

The J.D. Power 2026 Healthcare Digital Experience Study identified five satisfaction drivers for digital health tools in order: visual appeal, navigation, information quality, speed, and access to human clinicians.

In a voice and chat context, those five translate directly to:

- Does the AI communicate clearly and naturally?

- Does it understand the actual question being asked?

- Is the health information it provides trustworthy?

- Does it respond without frustrating delays?

- Can the patient reach a real person when needed?

That last point is the one most AI chatbot patient apps underdeliver on. And it is the one patients notice most when it is missing.

What Patients Actually Need From AI Health Conversations

Immediate Value

Answer the question first. A patient asking about metformin side effects wants a clear, contextual answer in the first response. Not three clarifying questions before anything useful is said.

Emotional Acknowledgement

A patient describing a new symptom at 2 am is anxious. The AI that opens with something that recognises that anxiety before launching into clinical questions is doing patient-centred design. The one that jumps straight to "please describe your symptom in detail" is not.

Transparent AI Identity

Patients need to know they are talking to an AI. This is not just an ethical requirement. Patients who discover mid-conversation that they were talking to an undisclosed AI feel deceived. That trust, once broken, does not come back.

Persistent Memory

A patient who described their knee pain yesterday should not have to start from scratch today. AI patient engagement tools that carry context across sessions feel like continuity of care. Those that reset with every interaction feel like a broken system.

A Visible Escalation Pathway

Every chat AI health app needs an obvious, easy route to a human clinician. This is the element builders most consistently deprioritise. It is also the one whose absence creates the most clinical and legal exposure.

The patient population that most needs well-designed voice AI healthcare tools is not the healthy 30-year-old who navigates apps easily. It is the 68-year-old managing three chronic conditions, the rural patient whose nearest specialist is 90 minutes away, and the non-English speaker who has historically avoided digital health entirely because every tool assumed fluency they did not have.

Design for those patients. The rest will follow.

Ambient AI and the Clinical Documentation Revolution

Of all the voice AI applications in healthcare right now, ambient AI clinical documentation has the strongest evidence base and the widest enterprise deployment. It also has the most counterintuitive patient experience argument.

The technology is invisible to the patient. And that is exactly the point.

The Problem It Is Solving

Physicians currently spend up to twice as much time on EHR tasks as on direct patient care. A significant share of primary care physicians regularly complete documentation after clinic hours, sometimes past midnight.

When a clinician is typing notes during a consultation, they are not making eye contact. They are not picking up non-verbal cues. They are not fully present. Patients notice this, even when they cannot name it.

The ambient scribe sits passively in the consultation room, with patient consent, and listens. It generates structured clinical notes, assessment summaries, and care plan documentation automatically. The clinician reviews and signs. No typing mid-conversation. No documentation backlog at the end of a twelve-hour shift.

What The Evidence Actually Shows

The UW Health randomised trial published in NEJM AI is the most rigorous study to date. It found 30 minutes saved per provider per day and a clinically meaningful reduction in burnout scores across ambulatory clinics in two states. Following the trial, UW Health rolled out the ambient scribe technology to over 800 physicians across Wisconsin and Illinois.

Physicians using DAX Copilot report being able to maintain eye contact, engage more fully with patient concerns, and finish consultations feeling less rushed. That directly affects patient-reported satisfaction scores, even though the patient never interacts with the AI directly.

The Builder Requirement Most Teams Miss

Ambient recording consent must happen before recording starts. Not in a buried EHR consent form. Not assumed from a general treatment consent. Explicitly, at the start of the encounter, in plain language the patient understands.

The AMA published detailed ethical guidance on ambient AI documentation consent in November 2025. Read it before building.
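Consent is easiest to enforce when the recorder itself refuses audio until consent is captured. A minimal sketch of such a gate; `AmbientSession`, its methods, and the script-version field are hypothetical, and discarding already-buffered audio when a patient withdraws is one possible policy rather than a stated requirement.

```python
from enum import Enum, auto
from typing import Optional


class ConsentState(Enum):
    NOT_ASKED = auto()
    GRANTED = auto()
    DECLINED = auto()


class AmbientSession:
    """Hypothetical encounter wrapper: audio is accepted only after explicit consent."""

    def __init__(self, encounter_id: str):
        self.encounter_id = encounter_id
        self.consent = ConsentState.NOT_ASKED
        self.consent_script: Optional[str] = None
        self.audio_chunks: list[bytes] = []

    def record_consent(self, granted: bool, script_version: str) -> None:
        # Store which plain-language script was read, so consent is auditable later.
        self.consent = ConsentState.GRANTED if granted else ConsentState.DECLINED
        self.consent_script = script_version

    def capture(self, chunk: bytes) -> bool:
        """Buffer audio only when consent was granted; otherwise drop it."""
        if self.consent is not ConsentState.GRANTED:
            return False
        self.audio_chunks.append(chunk)
        return True

    def revoke(self) -> None:
        # Assumed policy: a mid-encounter withdrawal also discards what was captured.
        self.consent = ConsentState.DECLINED
        self.audio_chunks.clear()


session = AmbientSession("enc-1")
print(session.capture(b"before consent"))  # dropped
session.record_consent(True, "plain-language-v1")
print(session.capture(b"after consent"))   # buffered
```

Making "not asked" the default state means a misconfigured clinic or a skipped step results in no recording, which is the safe failure mode.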

The insight that should guide every healthcare AI investment decision is this: sometimes the most powerful improvement to the patient experience in healthcare is a clinician-facing tool that removes a burden. The patient never sees the ambient AI clinical documentation system. They just get a doctor who is actually listening.

The Reliability Problem: LLMs in Healthcare and the Accuracy Gap

This section is the most important one for anyone building LLM healthcare patient tools. It requires some intellectual honesty.

LLMs are genuinely impressive on medical benchmarks. GPT-4, Llama 3, and Command R+ all exceed the passing threshold for USMLE Step 3, the most advanced US clinical licensing examination. Med-PaLM's performance matches expert clinicians on medical question-answering benchmarks. These are real achievements.

They are also genuinely misleading as indicators of real-world clinical performance.

The Study Every Builder Needs to Read

A randomised controlled trial published in Nature Medicine in February 2026 tested 1,298 participants across ten medical scenarios using GPT-4o, Llama 3, and Command R+.

The findings:

- LLMs tested alone correctly identified medical conditions in 94.9% of cases

- When real patients used those same LLMs, correct identification fell to below 34.5%

- That is no better than the unassisted control group

A drop of more than 60 percentage points. Not because the model got worse. Because real patients interact with AI differently than controlled test conditions assume.

Why Is The Gap So Wide?

Patients do not describe symptoms the way medical examinations present them. They use colloquial language. They bury the clinically important detail inside a longer story. They have health literacy limitations that prevent them from recognising which symptom the AI is asking about.

They also do not follow conversation flows as designed. They answer partially, change direction mid-conversation, and interpret AI responses through their existing beliefs, selecting information that confirms what they already think.

This is not a model failure. It is an interaction design failure. And it is entirely solvable with the right architecture.

How To Engineer Around It

- Use RAG architecture that grounds every response in validated clinical content, not LLM parameters alone

- Build confidence scoring into the system. If model confidence drops below a defined threshold, route to a human clinician

- Cite sources for every clinical claim the AI makes

- Mandate human review for any response that enters clinical decision territory

- Run regular accuracy audits against clinical ground truth in your specific patient population
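The confidence, citation, and human-review rules in the list above can be combined into one release gate that every draft answer passes through. A minimal sketch; it assumes some upstream component supplies a calibrated confidence score and labels whether a draft makes a health claim or enters clinical decision territory. LLMs do not provide calibrated confidence natively, so that score is itself an assumption to validate, and the 0.85 threshold is a placeholder.

```python
from dataclasses import dataclass, field


@dataclass
class DraftAnswer:
    text: str
    confidence: float                       # assumed calibrated score in [0, 1]
    citations: list[str] = field(default_factory=list)
    makes_health_claim: bool = True         # default to the cautious assumption
    clinical_decision: bool = False         # diagnosis, dosing, or treatment choice


def release_decision(draft: DraftAnswer, threshold: float = 0.85) -> str:
    """Return "send" or "human_review" for one draft answer."""
    if draft.clinical_decision:
        return "human_review"   # mandatory for clinical decision territory
    if draft.confidence < threshold:
        return "human_review"   # below the confidence floor
    if draft.makes_health_claim and not draft.citations:
        return "human_review"   # health claims must cite a validated source
    return "send"


print(release_decision(DraftAnswer("Take it with food [edu-001].", 0.93, ["edu-001"])))
print(release_decision(DraftAnswer("Your slot is at 9 am.", 0.95, makes_health_claim=False)))
```

The checks are ordered from hardest rule to softest, so a clinical-decision draft goes to a human regardless of how confident the model claims to be.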

The Three Things Builders Must Never Do

Deploy an LLM patient app as a standalone diagnostic interface without clinical validation and a human escalation pathway.

Assume that strong benchmark performance predicts real-world performance on your patient population. It does not.

Design the system to make it harder for patients to reach a human clinician in order to cut operational costs. Every system that does this eventually produces a preventable adverse event. The cost of that event exceeds any efficiency savings the AI produced.

The decision of whether to build or buy sits underneath all three of these failure modes. Custom AI chatbot development gives healthcare organisations full ownership of the reasoning stack, the escalation logic, and the clinical guardrails, the exact layers that off-the-shelf platforms consistently abstract away and leave builders unable to control when it matters most.

Healthcare AI in 2026 is genuinely powerful. It is also genuinely limited in ways that benchmark scores do not capture. Build with both of those things in mind.

The Regulatory Landscape: FDA 2026, EU MDR, and State Laws

Regulation in this space moved faster in late 2025 and early 2026 than most builders anticipated. If you deployed under 2024 guidance and have not reviewed your regulatory position since, you need to.

Here is what changed and what it means.

United States: FDA and HIPAA Updates

The FDA updated its Clinical Decision Support guidance in January 2026. The key distinction builders need to understand:

- AI tools that support clinical decision-making without replacing clinician judgment are generally not Software as a Medical Device

- AI tools that make autonomous clinical recommendations directly to patients generally are SaMD and require FDA clearance

The practical implication: if your AI chatbot surfaces a recommendation that a patient acts on without a clinician reviewing it first, you are likely in SaMD territory. Get regulatory counsel before launch, not after.

On HIPAA, the 2025 Security Rule updates made two things mandatory that were previously optional: encryption of all ePHI at rest and in transit, and multi-factor authentication for all systems accessing patient data. Voice conversation recordings containing patient health information are ePHI. That means every voice AI healthcare deployment needs end-to-end encryption and a signed Business Associate Agreement with every vendor touching that data.

Illinois: The State Law That Signals What Is Coming Everywhere

Illinois enacted a law in August 2025 that prohibits AI systems from making independent therapeutic decisions or directly interacting with patients in therapeutic communication without licensed professional oversight.

This is the most specific state-level regulatory requirement for healthcare AI chatbots yet passed in the US. California and New York are expected to follow. If you are building mental health AI, CBT-based chat interfaces, or anything resembling AI therapy, design for this requirement everywhere, not just in Illinois.

European Union: MDR Precedents Set in 2025

Ada Health achieved CE certification as a Class IIa medical device for diagnostic decision support. Infermedica earned MDR Class IIb certification, the first AI-powered clinical guidance platform to reach that level of EU regulatory approval.

These certifications set the precedent for what conversational AI patient app development compliance looks like in Europe. If you are building for EU markets, these are the benchmarks your regulatory path will be measured against.

The overall regulatory direction across all jurisdictions is consistent: more oversight, not less, specifically for AI tools that interact directly with patients on clinical matters.

Build your compliance architecture into the design from day one. Retrofitting it is significantly more expensive than getting it right at the start.

Accessibility and Equity: Voice AI for the Patients Who Need It Most

There is a version of this section that frames accessibility as a compliance obligation. That framing misses the point entirely.

The patients who most need better healthcare access are the same patients who benefit most from well-designed voice AI tools. Elderly patients who struggle with small-screen text interfaces. Patients with visual impairments or motor difficulties who cannot type. Patients with low health literacy who understand a conversation but cannot parse a medical intake form. Non-English speakers who have historically avoided digital health tools because every product assumed fluency they did not have.

Multilingual AI Patient Engagement

This feature is not a nice-to-have. It is the difference between a tool that serves your full patient population and one that serves only the patients who least need help navigating technology.

The Evidence On Elderly Engagement

The assumption that older patients cannot engage with digital health AI is a design failure, not an inherent patient limitation. A 2026 Frontiers in Digital Health study found 95% medication adherence among patients over 65 using a well-designed digital health tool with voice interaction. That number should put the assumption to rest permanently.

The Voice Design Detail Most Teams Overlook

Wolters Kluwer research found that patients trust AI voices that sound culturally familiar to them significantly more than voices that feel misaligned with their background. This is not an aesthetic preference. It is a clinical outcome determinant. A patient who trusts the voice is more likely to follow the guidance it delivers.

Practical Requirements for Accessible Voice AI

- Natural language input that does not require patients to conform to structured query formats

- Variable speech rate options for patients with processing difficulties

- Culturally inclusive voice personas tested with native speakers from target communities

- Multilingual AI patient engagement in the patient's actual native language, not just translated English

- Minimum 44x44 pixel touch targets for any accompanying visual interface elements

Design for the hardest-to-reach patient first. Everything built for that patient will work better for everyone else, too.

Implementation Framework: How to Build This Right

Every failed healthcare AI deployment we have looked at followed the same pattern. The team picked a technology and then looked for a problem to apply it to. Every successful one did the opposite.

Start with the patient's problem. Let the technology follow. Conversational AI is not a product decision; it is a healthcare digital transformation decision, and the organisations that treat it as such are the ones that build systems which actually improve clinical outcomes rather than just adding another interface layer.

Step 1: Define the Clinical Use Case Before Touching the Technology

Before a single line of code is written, the clinical problem needs to be clearly defined and documented. This sounds obvious, but it is consistently skipped.

The use case determines everything downstream:

- The accuracy requirements (triage AI needs near-perfect safety performance; scheduling AI does not)

- The regulatory classification (diagnostic AI is likely SaMD; scheduling AI is not)

- The escalation design (mental health AI needs immediate crisis pathways; appointment AI needs a different escalation entirely)

- The success metrics (medication adherence rate, no-show reduction, ED visit prevention)

If you cannot define what clinical problem you are solving and how you will measure whether you solved it, you are not ready to build yet.

Step 2: Match the Architecture to the Use Case

Not all conversational AI patient app development architectures suit all clinical problems. The right choice depends entirely on what the tool is being asked to do and what the consequences of a wrong answer are.

- Health education and post-visit instructions: RAG architecture grounded in validated clinical knowledge.

- Scheduling and administrative tasks: Rule-based scheduling logic with a conversational NLP wrapper. This is not a job for a general LLM.

- Clinical decision support in clinician workflows: Fine-tuned clinical LLM with mandatory human review.

- Mental health support: Validated CBT frameworks with LLM generation, licensed oversight, and human escalation, as required by Illinois law and likely by forthcoming federal guidance.

- Diagnostic AI: Full SaMD regulatory pathway before any patient-facing deployment.

Picking the wrong architecture for the clinical context does not just create a technical problem. It creates a patient safety problem.
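The architecture list can be encoded as an explicit lookup, so the mapping is reviewable and a diagnostic use case cannot reach patients by accident. This is a minimal sketch: `UseCase`, `Architecture`, `select_architecture`, and the `samd_cleared` flag are hypothetical names, and `human_requirement` is left as `None` wherever the list does not specify one.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class UseCase(Enum):
    HEALTH_EDUCATION = "health education and post-visit instructions"
    SCHEDULING = "scheduling and administrative tasks"
    CLINICAL_DECISION_SUPPORT = "clinical decision support"
    MENTAL_HEALTH = "mental health support"
    DIAGNOSTIC = "diagnostic AI"


@dataclass(frozen=True)
class Architecture:
    pattern: str
    human_requirement: Optional[str]  # None where the list does not specify one
    needs_samd_clearance: bool        # SaMD pathway required before patient use


# Hypothetical encoding of the list above, one entry per use case.
ARCHITECTURES = {
    UseCase.HEALTH_EDUCATION: Architecture(
        "RAG grounded in validated clinical knowledge", None, False),
    UseCase.SCHEDULING: Architecture(
        "rule-based scheduling logic with a conversational NLP wrapper", None, False),
    UseCase.CLINICAL_DECISION_SUPPORT: Architecture(
        "fine-tuned clinical LLM", "mandatory human review", False),
    UseCase.MENTAL_HEALTH: Architecture(
        "validated CBT frameworks with LLM generation",
        "licensed oversight and human escalation", False),
    UseCase.DIAGNOSTIC: Architecture(
        "model on the full SaMD regulatory pathway", None, True),
}


def select_architecture(use_case: UseCase, samd_cleared: bool = False) -> Architecture:
    """Look up the architecture, refusing diagnostic use before SaMD clearance."""
    arch = ARCHITECTURES[use_case]
    if arch.needs_samd_clearance and not samd_cleared:
        raise PermissionError(
            f"{use_case.value}: no patient-facing deployment before SaMD clearance")
    return arch


print(select_architecture(UseCase.SCHEDULING).pattern)
```

Raising an error, rather than silently falling back to a general LLM, makes the safety boundary fail loudly during development.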

Step 3: Design the Human Escalation Layer First, Not Last

This is the most common implementation failure in digital health conversational AI. Escalation gets treated as an afterthought, something added at the end because someone on the legal team asked about it.

It should be the first thing designed, because it determines the architecture of everything else.

Define upfront: what triggers escalation, what response time is clinically appropriate for each trigger, which communication channel carries the alert, and who is accountable for responding. These are not product decisions. They are clinical decisions that happen to live inside a product.
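One way to design the escalation layer first is to write those four decisions down as data before any conversation logic exists. The sketch below assumes trigger detection happens upstream; the trigger names, response times, channels, and owners are hypothetical placeholders that clinical leads would replace.

```python
from dataclasses import dataclass
from datetime import timedelta
from typing import Optional


@dataclass(frozen=True)
class EscalationRule:
    trigger: str              # condition flagged by upstream detection (assumed)
    max_response: timedelta   # clinically appropriate response time
    channel: str              # how the alert is delivered
    owner: str                # role accountable for responding


# Hypothetical rules; real values come from clinical leadership, not engineers.
RULES = [
    EscalationRule("crisis_language", timedelta(0),
                   "live transfer to crisis line", "on-call crisis counsellor"),
    EscalationRule("red_flag_symptom", timedelta(minutes=15),
                   "page", "on-call nurse"),
    EscalationRule("medication_question", timedelta(hours=24),
                   "EHR inbox message", "care team pharmacist"),
]


def route(detected: set[str]) -> Optional[EscalationRule]:
    """Return the most urgent matching rule, or None if nothing fired."""
    matches = [rule for rule in RULES if rule.trigger in detected]
    return min(matches, key=lambda rule: rule.max_response, default=None)


rule = route({"medication_question", "red_flag_symptom"})
print(rule.owner)  # on-call nurse
```

When several triggers fire at once, the shortest response time wins, so a routine question never masks a red-flag symptom.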

Step 4: Build Compliance Into the Architecture, Not the Deployment

Compliance retrofitted at the end of development is always more expensive, more fragile, and more likely to have gaps than compliance built in from the start. HIPAA-compliant voice AI patient apps require:

- End-to-end encryption for all voice recordings containing PHI

- Signed BAA with every vendor processing patient voice data

- Immutable audit logs of all AI interactions involving health information

- MFA for all clinical staff accessing AI interaction data

- Explicit patient consent before any ambient recording begins

These are not features to add before launch. They are architectural decisions that affect every layer of the system.
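For the audit-log and consent requirements, one common technique is a hash chain: each entry stores the SHA-256 hash of the previous entry, so any later edit breaks verification. This is a minimal in-memory sketch, assuming entries reference PHI by record ID rather than storing it. Hash chaining makes tampering detectable, not impossible, so a production system would pair it with write-once storage. `AuditLog` and `start_ambient_recording` are hypothetical names.

```python
import hashlib
import json
from datetime import datetime, timezone


class AuditLog:
    """Append-only, hash-chained log: each entry commits to the one before it."""

    GENESIS = "0" * 64

    def __init__(self) -> None:
        self._entries: list[dict] = []

    def append(self, actor: str, action: str, record_id: str) -> dict:
        prev_hash = self._entries[-1]["hash"] if self._entries else self.GENESIS
        entry = {
            "ts": datetime.now(timezone.utc).isoformat(),
            "actor": actor,
            "action": action,
            "record_id": record_id,  # a reference to the PHI, not the PHI itself
            "prev_hash": prev_hash,
        }
        entry["hash"] = self._digest(entry)
        self._entries.append(entry)
        return entry

    @staticmethod
    def _digest(entry: dict) -> str:
        # Hash a canonical JSON form of every field except the hash itself.
        payload = {k: v for k, v in entry.items() if k != "hash"}
        return hashlib.sha256(json.dumps(payload, sort_keys=True).encode()).hexdigest()

    def verify(self) -> bool:
        prev_hash = self.GENESIS
        for entry in self._entries:
            if entry["prev_hash"] != prev_hash or entry["hash"] != self._digest(entry):
                return False
            prev_hash = entry["hash"]
        return True


def start_ambient_recording(log: AuditLog, patient_consented: bool,
                            clinician: str, encounter_id: str) -> None:
    """Refuse to start ambient capture without explicit consent; log the start."""
    if not patient_consented:
        raise PermissionError("explicit patient consent required before recording")
    log.append(clinician, "ambient_recording_started", encounter_id)
    # Hypothetical: actual audio capture would begin only after this point.


log = AuditLog()
start_ambient_recording(log, True, "dr_lee", "encounter-001")
log.append("ai_scribe", "generated_note", "encounter-001")
print(log.verify())  # True
log._entries[0]["action"] = "edited_after_the_fact"  # simulate tampering
print(log.verify())  # False
```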

Step 5: Measure Clinical Outcomes, Not Engagement Metrics

Your AI patient engagement deployment is not successful because patients opened the app. It is successful because patients are healthier as a result. There is a meaningful difference between those two things, and it matters enormously when justifying the investment internally or to regulators.

The metrics that actually matter:

- Medication adherence rate at 30 and 90 days

- No-show rate reduction

- Post-visit care instruction comprehension

- Preventable ED visit rate for remotely monitored patients

These are harder to measure than session counts. They are also the only numbers that justify the investment and satisfy regulators when SaMD classification questions arise.
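The arithmetic behind two of these metrics is simple once the underlying events are captured. This sketch assumes adherence is measured as the share of days in the window with a confirmed dose (one of several definitions in use) and expresses no-show improvement as a relative reduction against a pre-deployment baseline. The function names and sample numbers are illustrative only.

```python
from datetime import date, timedelta


def adherence_rate(dose_days: set[date], start: date, window_days: int) -> float:
    """Share of days in [start, start + window_days) with a confirmed dose."""
    window = {start + timedelta(days=i) for i in range(window_days)}
    return len(dose_days & window) / window_days


def no_show_reduction(baseline_no_shows: int, baseline_appts: int,
                      current_no_shows: int, current_appts: int) -> float:
    """Relative drop in no-show rate versus the pre-deployment baseline."""
    baseline_rate = baseline_no_shows / baseline_appts
    current_rate = current_no_shows / current_appts
    return (baseline_rate - current_rate) / baseline_rate


# Illustrative data: doses confirmed on each of the first 27 days only.
start = date(2026, 1, 1)
taken = {start + timedelta(days=i) for i in range(27)}
print(round(adherence_rate(taken, start, 30), 2))             # 0.9
print(round(adherence_rate(taken, start, 90), 2))             # 0.3
print(round(no_show_reduction(180, 1000, 120, 1000), 2))      # 0.33
```

The 30-day and 90-day figures diverge sharply in this example, which is why measuring both matters: early engagement can hide later drop-off.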

The Next Five Years: Where the Technology Is Going

Gartner's 2025 forecast puts it plainly: by 2027, nearly 75% of healthcare providers will deploy conversational AI solutions for patient-facing services. The question is not whether this technology reaches your organisation. It is whether you are ready when it does.

Here is what the next 24 to 36 months actually look like.

Agentic AI: From Conversation To Action

The most significant near-term development is the move from AI that talks to AI that acts: scheduling appointments, processing prescription refill requests, coordinating referrals, and updating care plans. For administrative actions, agentic voice AI in healthcare will be production-ready within the next two to three years. Autonomous clinical ordering is further out and will require a different level of regulatory clearance before it reaches that stage.
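A minimal way to enforce the line between administrative and clinical actions is a default-deny gate: actions on an explicit administrative allowlist execute, and everything else waits for a clinician. The action names and the `ActionGate` class are hypothetical, and where a real system would call scheduling or pharmacy APIs, this sketch only records the action.

```python
from dataclasses import dataclass, field

# Hypothetical names for administrative actions the agent may run on its own.
ADMINISTRATIVE_ACTIONS = frozenset({
    "schedule_appointment",
    "submit_refill_request",
    "coordinate_referral",
})


@dataclass
class ActionGate:
    """Default-deny gate between an agent's proposed actions and real systems."""
    executed: list = field(default_factory=list)
    pending_review: list = field(default_factory=list)

    def submit(self, action: str, params: dict) -> str:
        if action in ADMINISTRATIVE_ACTIONS:
            # A real system would call the scheduling or pharmacy API here.
            self.executed.append((action, params))
            return "executed"
        # Clinical orders and any unrecognised action wait for a clinician.
        self.pending_review.append((action, params))
        return "queued_for_clinician"


gate = ActionGate()
print(gate.submit("schedule_appointment", {"patient_id": "p1", "slot": "2026-06-01T09:00"}))  # executed
print(gate.submit("order_lab_test", {"patient_id": "p1", "test": "HbA1c"}))  # queued_for_clinician
```

An allowlist, rather than a blocklist of clinical actions, means a newly added tool cannot act autonomously until someone deliberately classifies it as administrative.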

Multimodal AI: Voice, Vision, And Clinical Context Together

MedGemma, released by Google DeepMind in 2025, processes medical text alongside chest X-rays, MRIs, and CT scans in a single model. The near-term patient app implication is significant. A patient will describe a symptom by voice, photograph a skin lesion or a medication label, and receive a response that considers both inputs. Dermatology triage, medication verification, and wound monitoring are the earliest viable use cases, likely within the 2026 to 2028 window.

Vocal Biomarkers As Routine Diagnostic Input

The infrastructure being built today for voice AI patient apps will also support something most builders have not fully considered yet. A patient's daily voice interaction with their health app is a longitudinal dataset. Pitch, rhythm, pauses, and spectral features change over time in ways that can predict disease onset, treatment response, and relapse in conditions such as depression, heart failure, and cognitive decline. The data for that monitoring is already being collected as a byproduct of the conversation.
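To illustrate why daily interactions form a useful longitudinal dataset, here is a sketch of a simple personal-baseline check: compare today's value of one voice feature against that patient's own history using a z-score. It assumes feature extraction (pause rate, pitch, and so on) happens upstream, and the thresholds are arbitrary choices; this is an illustration of longitudinal comparison, not a validated vocal biomarker.

```python
from statistics import mean, stdev


def flag_deviation(history: list[float], today: float,
                   min_days: int = 14, z_threshold: float = 2.5) -> bool:
    """Flag today's value if it sits far outside the patient's own baseline.

    history: one value per prior day for a single feature (e.g. pause rate),
    assumed to be extracted upstream from that day's voice interaction.
    """
    if len(history) < min_days:
        return False  # not enough data yet for a personal baseline
    mu, sigma = mean(history), stdev(history)
    if sigma == 0:
        return today != mu
    return abs(today - mu) / sigma > z_threshold


# Illustrative data: two weeks of a stable pause-rate feature.
pause_rate = [0.20, 0.22, 0.19, 0.21, 0.20, 0.23, 0.21,
              0.20, 0.22, 0.19, 0.21, 0.20, 0.22, 0.21]
print(flag_deviation(pause_rate, 0.21))  # False
print(flag_deviation(pause_rate, 0.35))  # True
```

Comparing each patient against their own history, rather than a population norm, is what makes the daily cadence of app conversations valuable.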

The Continuous Care Companion

All of these threads converge toward the same destination. A patient experience AI that knows the patient's history, monitors their condition between visits, communicates proactively in natural language, and routes to human clinicians with full context when clinical judgment is required.

The digital wellness platform trends shaping this space through 2030 (AI personalisation, mental health integration, wearable convergence, and chronic disease management) make clear that this destination is closer than most builders currently plan for.

Mobisoft Infotech: Building AI-Native Patient Apps

Mobisoft Infotech's AI health development work sits at the intersection of clinical design, regulatory compliance, and production-grade AI engineering. We build voice AI patient apps, ambient AI clinical documentation integrations, LLM patient app assistants, and chronic care management tools with conversational AI at their core.

We have worked across the US, EU, Middle East, and Indian healthcare markets. We know what regulatory compliance looks like in each jurisdiction. And we know what actually works in production, not just in research papers.

What we build

- Conversational appointment scheduling via voice and chat

- LLM healthcare patient triage with clinical RAG architecture

- Ambient AI documentation integration with Epic and Cerner

- Multilingual AI patient engagement for diverse patient populations

- Medication management AI with reminder and refill workflows

- Mental health chat with licensed human escalation

- Remote patient monitoring with voice AI triage check-ins

- Post-visit patient experience AI for education and follow-up

Compliance infrastructure we bring

- HIPAA-compliant voice data architecture

- GDPR and DPDP Act compliance

- UAE PDPL and GCC regulatory alignment

- SaMD classification assessment

- FHIR R4 interoperability

- SOC 2 Type II security practices

If you are building a digital health conversational AI product and want a technical partner who has done this before, we should talk.

Frequently Asked Questions

What is conversational AI in healthcare, and how is it used in patient apps?

Conversational AI in healthcare refers to AI systems that interact with patients through natural language rather than structured menus or static forms. In patient apps, it handles appointment scheduling, symptom triage, medication reminders, post-visit education, and remote monitoring check-ins. The global conversational AI in healthcare market stood at USD 13.68 billion in 2024, with patient engagement applications accounting for the largest single share.

How accurate are LLMs for medical advice in patient apps?

Tested alone, LLMs correctly identify medical conditions in 94.9% of cases. But a 2026 randomised controlled trial published in Nature Medicine found that when real patients used those same models, accuracy dropped below 34.5%. That gap is an interaction design failure, not a model failure. To perform safely in the real world, LLMs in healthcare apps need RAG architecture, confidence thresholds, and human escalation pathways.

What is ambient AI, and how does it improve patient experience?

Ambient AI clinical documentation listens passively to clinical encounters and auto-generates structured notes, so clinicians are not typing mid-conversation. The UW Health NEJM AI trial found 30 minutes saved per provider per day alongside measurable burnout reduction. The patient never interacts with the tool directly. They just get a clinician who is actually present.

What are the regulatory requirements for AI in healthcare apps?

The FDA's January 2026 update clarified that AI making autonomous clinical recommendations directly to patients generally qualifies as Software as a Medical Device (SaMD) and requires FDA clearance. The 2025 HIPAA updates made encryption of voice recordings containing PHI, and MFA for all ePHI access, mandatory. At the state level, Illinois now requires licensed professional oversight for any AI therapeutic communication, and similar laws regulating healthcare AI chatbots are expected in California and New York.

How do you build voice AI that works for elderly and disabled patients?

A 2026 Frontiers in Digital Health study found 95% medication adherence among patients over 65 using a well-designed voice assistant. The assumed engagement ceiling for older patients is a design failure, not an inherent limitation. Accessible voice AI patient apps need natural language input, variable speech rates, culturally inclusive voice personas, and multilingual engagement in the patient's actual native language.

May 6, 2026
