Every computing system eventually fails. Computing has become much more reliable, but systems still suffer operational failures from time to time because of human mistakes, hardware or software faults, or malicious attacks. Regular backups of your data are therefore essential so that you can recover from such failures. Here, we discuss backups in the context of web and mobile applications.

Purpose Of Backups

Backups primarily serve two purposes:

- Failover In Case Of Operational Issues

For example, hardware/software failure of one of the machines on which the application runs.

- To Restore Data In Case Of Disasters

For example, the datacenter in which your servers run suffers a natural disaster that leads to data loss.

In another scenario, an attacker compromises the application and manages to delete the production data.

What Needs To Be Backed Up?

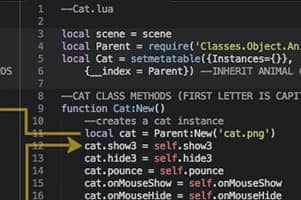

- Source Code Of The Application

Most development teams use a version control system such as Git or SVN. This automatically creates at least two copies of the source code: one on the developer’s local machine and another on the version control server.

For one more layer of redundancy, every time a production deployment happens, a copy of the entire project directory can be made and uploaded to a remote location such as Google Drive or Dropbox.

- Production Database

- Replication: For Operational Failover

All mature databases support near real-time replication. With replication, all the changes that happen on the “master” DB server are replicated to one or more “slave” DB servers. If the master DB server goes down because of an operational failure, one of the slave servers can be quickly promoted to master status to bring the application back online.

Generally, slave servers lag behind the master server by only a few seconds, so the data loss in this case is minimal. However, this type of setup cannot protect against programming errors or accidental deletions, because the DB operations on the master are replicated to the slave servers as well.
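The limitation described above can be seen in a toy simulation. This is only a sketch: it uses two in-memory SQLite databases from the Python standard library as stand-ins for the master and one slave (called `replica` in the code), and the `replicate` helper is a hypothetical simplification of what a real replication stream does.

```python
import sqlite3


def make_db() -> sqlite3.Connection:
    """Create an in-memory database with a single users table."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE users (id INTEGER PRIMARY KEY, name TEXT)")
    return conn


def replicate(statement: str, params: tuple, servers: list) -> None:
    """Hypothetical stand-in for replication: apply each write to every server."""
    for server in servers:
        server.execute(statement, params)
        server.commit()


def count_users(conn: sqlite3.Connection) -> int:
    return conn.execute("SELECT COUNT(*) FROM users").fetchone()[0]


master, replica = make_db(), make_db()
servers = [master, replica]

replicate("INSERT INTO users (name) VALUES (?)", ("alice",), servers)
replicate("INSERT INTO users (name) VALUES (?)", ("bob",), servers)

# A point-in-time backup taken before the mistake happens.
snapshot = sqlite3.connect(":memory:")
master.backup(snapshot)

# A programming error: the WHERE clause was forgotten.
replicate("DELETE FROM users", (), servers)

# The replica faithfully repeated the mistake; only the snapshot kept the rows.
print(count_users(master), count_users(replica), count_users(snapshot))
# prints: 0 0 2
```

The replica ends up just as empty as the master, which is why replication has to be combined with point-in-time backups.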

This also increases the cost of deployment, as each slave server needs the same hardware configuration as the master server. So if you have 1 master and 1 slave, the cost of the database servers is roughly twice that of a single server.

- For Disaster Recovery Purpose

The production DB should be “dumped” to an archive, which should be uploaded to a remote location such as an S3 bucket. We generally schedule this once every 24 hours, which means that up to 24 hours of data can be lost if the database fails. The backup window is generally chosen around midnight, when there is less load on the DB. Backing up all the data within a few minutes is a resource-intensive task, and the performance of the DB degrades significantly during the backup. That is why it is not done multiple times a day.
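A nightly dump-and-upload job could look roughly like the sketch below. It rests on several assumptions that are not part of the setup described above: the database is PostgreSQL (so `pg_dump` is available), the third-party `boto3` package is installed with AWS credentials configured, and the names `archive_name`, `build_dump_command`, `run_nightly_backup` and the `db-backups/` key prefix are hypothetical.

```python
import subprocess
from datetime import datetime, timezone
from pathlib import Path


def archive_name(db_name: str, now: datetime) -> str:
    """Timestamped file name so that each night's dump is kept separately."""
    return f"{db_name}-{now:%Y%m%dT%H%M%SZ}.dump"


def build_dump_command(db_name: str, out_file: Path) -> list[str]:
    """pg_dump invocation writing a compressed custom-format archive."""
    return ["pg_dump", "--format=custom", f"--file={out_file}", db_name]


def run_nightly_backup(db_name: str, bucket: str, work_dir: Path) -> str:
    """Dump the database, upload the archive to S3, return the object key."""
    import boto3  # assumption: third-party AWS SDK is installed

    now = datetime.now(timezone.utc)
    out_file = work_dir / archive_name(db_name, now)

    # check=True raises if pg_dump fails, so a broken dump is never uploaded.
    subprocess.run(build_dump_command(db_name, out_file), check=True)

    key = f"db-backups/{out_file.name}"  # hypothetical key layout
    boto3.client("s3").upload_file(str(out_file), bucket, key)

    out_file.unlink()  # remove the local copy once the upload succeeded
    return key
```

To match the midnight window mentioned above, a script calling `run_nightly_backup` could be scheduled with a cron entry such as `0 0 * * *`.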

If replication is set up, more frequent backups can be taken from the slave servers instead of the master.

- User Generated Files

If the application allows users to upload files, these files also need to be backed up so that they can be restored after an operational failure or a disaster.

These days, all the major cloud providers offer highly redundant storage services such as Amazon S3. S3 is designed for 99.999999999% durability and 99.99% availability of objects over a given year. So if files are uploaded to S3, there is generally no need to make another copy to protect against data corruption. But you might want to make a copy to protect against malicious hacking or accidental deletion.
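To get a feel for what these percentages mean, the arithmetic can be worked through in a few lines. This is a rough sketch: it treats the design targets as simple yearly averages, and the figure of 10 million stored objects is a hypothetical example.

```python
from decimal import Decimal

DURABILITY = Decimal("0.99999999999")  # 11 nines, per object per year
AVAILABILITY = Decimal("0.9999")       # per year
MINUTES_PER_YEAR = 365 * 24 * 60


def expected_objects_lost_per_year(object_count: int) -> Decimal:
    """Average number of objects lost in a year at the stated durability."""
    return object_count * (1 - DURABILITY)


def expected_unavailable_minutes_per_year() -> Decimal:
    """Average minutes per year during which objects may be unreachable."""
    return MINUTES_PER_YEAR * (1 - AVAILABILITY)


stored_objects = 10_000_000  # hypothetical application size
lost_per_year = expected_objects_lost_per_year(stored_objects)

# Years that pass, on average, before a single object is lost.
print(int(1 / lost_per_year))  # prints: 10000

# Minutes per year of possible unavailability.
print(float(expected_unavailable_minutes_per_year()))  # prints: 52.56
```

Durability on this scale says nothing about deletions issued with valid credentials, which is the case the extra copy is meant to cover.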

Protection Against Hacking

- Generally, a project uses a hosting account from a single provider, for example, the AWS cloud.

- If an attacker manages to get full or partial access to the AWS account credentials, they can delete all the data in your account.

- To protect against this, regular backups should be made in another datacenter from another provider.

- Naturally, this increases the cost, as you need to pay another provider for some compute and an equivalent amount of storage.

- The business should weigh the remote chance of losing all its data against the cost of backing up all the data at least once every 24 hours in another datacenter.
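One simple way to frame that trade-off is an expected-value comparison. The sketch below is only an illustration: the function names are hypothetical, and the probability and cost figures are made-up inputs that each business would have to estimate for itself.

```python
def expected_annual_loss(annual_probability: float, cost_of_total_loss: float) -> float:
    """Average yearly loss if all data is wiped with the given probability."""
    return annual_probability * cost_of_total_loss


def second_provider_backup_pays_off(
    annual_probability: float,
    cost_of_total_loss: float,
    annual_backup_cost: float,
) -> bool:
    """True when the expected loss exceeds what the extra backup costs."""
    return expected_annual_loss(annual_probability, cost_of_total_loss) > annual_backup_cost


# Hypothetical inputs: a 1% yearly chance of an account takeover, a total
# loss valued at 500,000, and 3,000 per year for the second provider.
print(second_provider_backup_pays_off(0.01, 500_000, 3_000))  # prints: True

# The same backup cost is harder to justify for a much smaller application.
print(second_provider_backup_pays_off(0.01, 100_000, 3_000))  # prints: False
```

An expected-value view understates the case for backups when losing all data would end the business outright, so it is best treated as a lower bound.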

Conclusion

So one might wonder: do I need to implement all of the above strategies to back up my application securely? In an ideal world, yes. In practice, you can decide which of the above strategies to use based on how much revenue your application earns. As a bare minimum, every application must back up its database and user-generated files once every 24 hours to something like Amazon S3. This gives you a reasonable amount of security in case of a disaster. Then, based on the business use case and the costs involved, you can determine whether you need standby DB servers and backups in another datacenter.

Author's Bio:

Pritam Barhate, with an experience of 14+ years in technology, heads Technology Innovation at Mobisoft Infotech. He has a rich experience in design and development. He has been a consultant for a variety of industries and startups. At Mobisoft Infotech, he primarily focuses on technology resources and develops the most advanced solutions.