The first-day launches were exciting. Apple's WWDC 2022 event generated plenty of buzz with the newest updates across Apple's product line. Each update is a step toward richer features that help both end users and developers. Let's take a look at what Day 2 of the Worldwide Developers Conference had to offer.

Highlights from Apple WWDC Event 2022 Day 2

Day 2 of the Apple WWDC event focused on how new releases and software updates integrate with Apple products. It featured a series of technical sessions and explanatory videos to help iOS developers understand these new components and adopt them in their next iOS projects.

Let's start with the Day 2 sessions of the Apple developer conference.

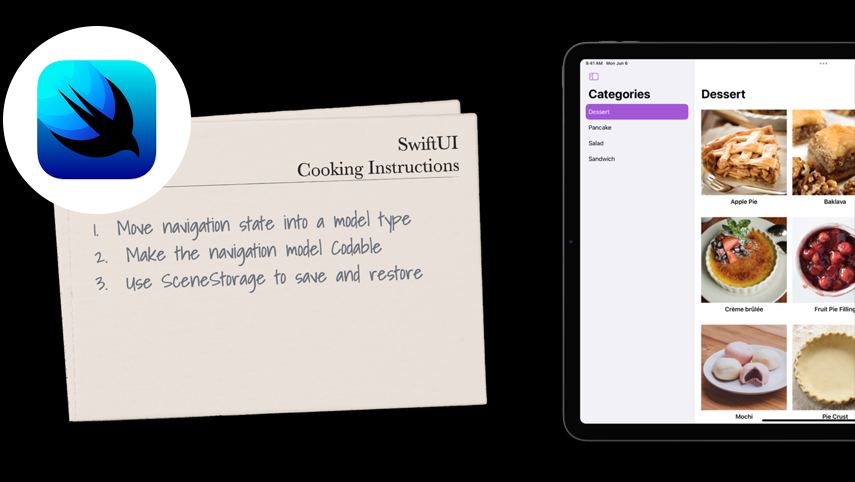

SwiftUI Cookbook for Navigation

The right recipe for a great app starts with a robust navigation framework. In this session, the SwiftUI team serves up a proverbial kitchen of code recipes for building one. SwiftUI's new navigation stack (NavigationStack) and split view (NavigationSplitView) let you link to specific areas of an app and manage navigation state programmatically. These APIs scale from basic stacks on iPhone, Apple TV, and Apple Watch to multicolumn presentations on larger screens. The session covers the new navigation APIs, recipes for common navigation patterns, and how to persist navigation state.
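To make the navigation recipes more concrete, here is a minimal Swift sketch of a NavigationStack driven by a data path, with that path kept in a Codable model so it can be saved and restored. The `Recipe` and `NavigationModel` types and the JSON round trip are hypothetical illustrations, not code from Apple's session; only `NavigationStack(path:)`, `NavigationLink(_:value:)`, and `navigationDestination(for:destination:)` are SwiftUI APIs (iOS 16 and later). The view is wrapped in `#if canImport(SwiftUI)` so the data-model part also compiles where SwiftUI is unavailable.

```swift
import Foundation
#if canImport(SwiftUI)
import SwiftUI
#endif

// Hypothetical model type used only for illustration.
struct Recipe: Hashable, Codable, Identifiable {
    let id: Int
    let name: String
}

// Hypothetical navigation model: the stack's path is plain data,
// so it can be encoded (here, to JSON) and restored later.
struct NavigationModel: Codable, Equatable {
    var recipePath: [Recipe] = []

    func jsonData() throws -> Data {
        try JSONEncoder().encode(self)
    }

    static func restore(from data: Data) throws -> NavigationModel {
        try JSONDecoder().decode(NavigationModel.self, from: data)
    }
}

#if canImport(SwiftUI)
@available(iOS 16.0, macOS 13.0, tvOS 16.0, watchOS 9.0, *)
struct RecipeBrowser: View {
    let recipes: [Recipe]
    @State private var model = NavigationModel()

    var body: some View {
        // Every Recipe appended to the path pushes a destination view.
        NavigationStack(path: $model.recipePath) {
            List(recipes) { recipe in
                NavigationLink(recipe.name, value: recipe)
            }
            .navigationDestination(for: Recipe.self) { recipe in
                Text(recipe.name)
            }
        }
    }
}
#endif
```

Because the path is ordinary `Codable` data, an app could store the encoded bytes with a mechanism such as `@SceneStorage` and restore the user's place on relaunch; that storage choice is an assumption of this sketch.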

Xcode 14

Xcode 14 is the latest version of Apple's IDE, with a focus on productivity and performance. SwiftUI previews are now live and interactive by default, so apps come alive in the canvas as you build them, and you can check the same view across different Dynamic Type sizes. The session also walks through enhancements to code completion and source navigation, along with performance improvements that benefit the entire app development process. Xcode 14 also lets developers read and respond to TestFlight feedback on their builds without leaving Xcode.

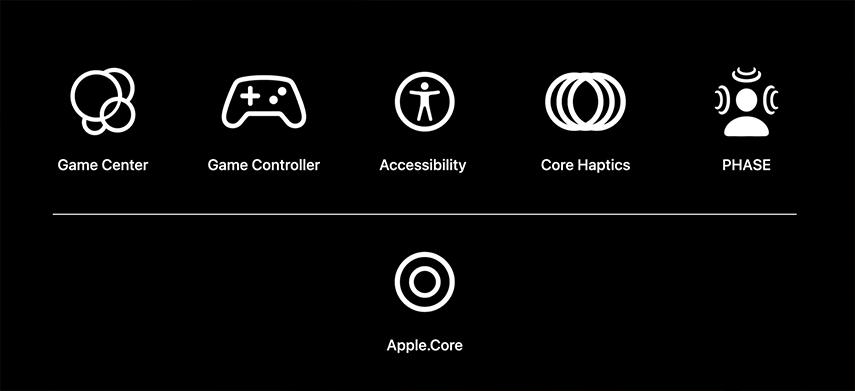

Integrating the Accessibility Plug-in for Unity

Accessibility has long been a missing piece for many Unity games. The open-source Accessibility plug-in lets developers make their games accessible on every Apple device. The session shows how to add support for assistive technologies such as VoiceOver and Switch Control to a sample Unity game project, including how to identify and add the necessary accessibility labels. It also covers display accommodations: scaling text automatically with Dynamic Type and responding to the Reduce Transparency and Increase Contrast settings. Apple's technologies for Unity apps and games span six plug-ins: Apple.Core, Accessibility, Core Haptics, Game Controller, Game Center, and PHASE. Together, they help developers build new gameplay mechanics, make games more accessible, and tap into the latest Apple platform features.

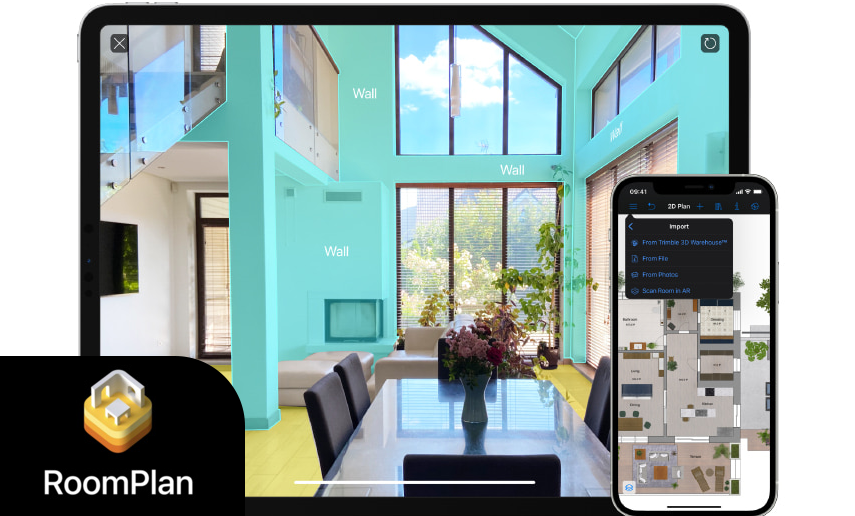

3D Room Scans with RoomPlan

Over the years, Apple has given developers increasingly powerful tools for building apps that understand the world around them. The previously released Scene Reconstruction API provides a coarse understanding of the geometric structure of a space, and Object Capture turns real objects into detailed 3D models, enabling augmented reality in apps. RoomPlan goes further by producing a simplified, parametric 3D model of a room. With the RoomPlan API, apps can add a room-scanning experience powered by ARKit and sophisticated machine learning algorithms. The session explores RoomPlan's 3D parametric output and shares best practices for getting great results from every room scan.

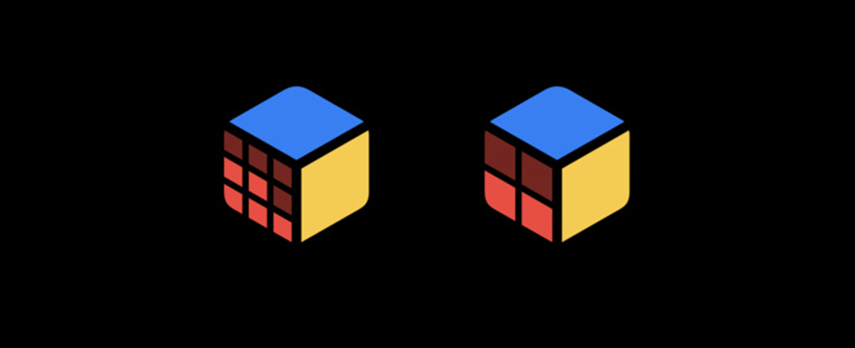

Variable Color in SF Symbols

This year's SF Symbols update introduces variable color, a new tool for expressive design. The session explains how system-provided symbols use variable color and offers guidance and best practices for adopting it effectively. In SF Symbols 4, variable color conveys a changing value, such as signal strength, by progressively filling in a symbol's layers. Developers remain free to choose their preferred rendering mode: variable color works with all four modes, Monochrome, Hierarchical, Palette, and Multicolor. Combined, these options let developers make the symbols in their apps considerably more expressive.
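As a minimal sketch of the variable-color idea, the Swift code below maps a signal-bar count onto the 0 to 1 range and, where SwiftUI is available, passes that fraction to `Image(systemName:variableValue:)` (iOS 16 and later) using the Hierarchical rendering mode. The `signalFraction(bars:maxBars:)` helper and the `SignalIndicator` view are hypothetical names for illustration, not Apple APIs, and the three-bar scale is an assumption.

```swift
import Foundation
#if canImport(SwiftUI)
import SwiftUI
#endif

/// Hypothetical helper: converts a bar count into the 0...1 fraction that
/// variable-color symbols use to decide how many layers to fill.
func signalFraction(bars: Int, maxBars: Int) -> Double {
    guard maxBars > 0 else { return 0 }
    let clamped = min(max(bars, 0), maxBars)  // keep readings inside 0...maxBars
    return Double(clamped) / Double(maxBars)
}

#if canImport(SwiftUI)
@available(iOS 16.0, macOS 13.0, tvOS 16.0, watchOS 9.0, *)
struct SignalIndicator: View {
    let bars: Int

    var body: some View {
        // The "wifi" symbol's layers fill in proportion to variableValue.
        Image(systemName: "wifi", variableValue: signalFraction(bars: bars, maxBars: 3))
            .symbolRenderingMode(.hierarchical)
            .foregroundStyle(.blue)
    }
}
#endif
```

Swapping `.hierarchical` for `.monochrome`, `.palette`, or `.multicolor` changes how the layers are tinted, while `variableValue` still controls how many of them are filled.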

New Push-to-Talk Framework for iOS

The Push-to-Talk framework brings a walkie-talkie experience to iOS apps, giving developers a new way to build audio communication apps on iOS. It is especially valuable in fields such as healthcare and emergency services. The framework provides system UI that users can reach from anywhere, and apps notify the system about transmissions, so users can communicate rapidly with a single tap of a button. Push-to-Talk also lets developers configure their communication apps to transmit and receive audio clearly. Adopting it assumes familiarity with handling audio transmission in your app's backend.

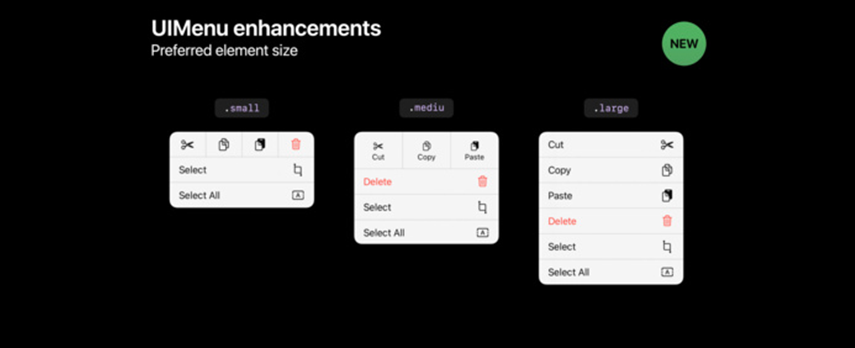

Desktop-Class Editing Interactions

Desktop-class editing interactions help developers make their iPadOS apps more productive. They offer interactions that stay consistent with the system UI and give users quick access to editing features. These updates bring the iPadOS app experience closer to that of macOS, including for apps built with Mac Catalyst. The new system experience in iOS 16 is highly customizable yet consistent across the system, helping developers build apps in which users can quickly find and edit the right content.

DriverKit for iPad

This session shows how the improved DriverKit brings Thunderbolt and USB device connectivity to iPad. Existing DriverKit drivers for Mac can be brought to iPad without changing any code, and the session teaches developers how to add real-time audio support with AudioDriverKit. It offers an in-depth look at developing drivers specifically for iPad.

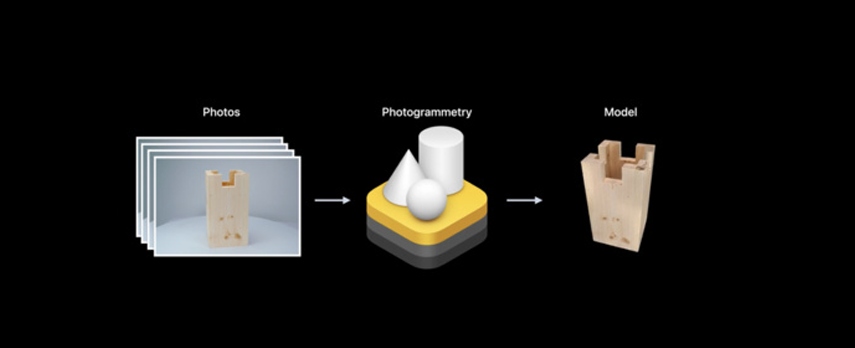

Augmented Reality

Object Capture and RealityKit continue to bring real-world objects into augmented reality games. The session demonstrates capturing detailed objects with the Object Capture framework and then adding them to a RealityKit project in Xcode. From there, developers can apply stylized shaders, animations, and more to enhance the AR experience for users. The goal is to learn best practices for ARKit, Object Capture, and RealityKit to get the most out of these features.

Wrapping Up

By the end of Day 2 of Apple WWDC 2022, Apple had introduced a range of interactive features, technology integrations, and app development tools. Each session explained these features in detail, showing how they can be adopted in upcoming Apple app development projects. For developers building apps for Apple devices, these features are a goldmine of new ways to engage users and deliver a captivating user experience. Stay tuned for more on Apple WWDC 2022.

If you missed the Day 1 highlights, check them out here: Apple Keynote Highlights Day 1

Author's Bio:

Pritam Barhate, who has more than 14 years of experience in technology, heads Technology Innovation at Mobisoft Infotech. He has rich experience in design and development and has consulted for a variety of industries and startups. At Mobisoft Infotech, he focuses primarily on technology resources and on developing advanced solutions.