Who This Guide Is For and What You Will Get

This guide is for enterprise decision-makers who already know their legacy systems are a problem and now need to build a credible case for doing something about it.

By the end, you will have:

- A realistic picture of what your legacy system maintenance costs look like today: not just the invoice, but the full number

- Five legacy system modernization strategies compared honestly, with the ROI profile and risk level of each

- The AI-assisted development data that has changed the timeline and cost economics since 2024

- An ROI calculator framework you can populate with your own numbers

- A business case structure that works with finance teams, not against them

- The ten questions to ask any vendor before signing a modernisation contract

The Question Nobody Wants to Answer Honestly

Somewhere in your organisation right now, a system is running that was built before your most senior developers graduated from university. It does something important, critical in fact. Finance depends on it. Compliance depends on it. Revenue moves through it. And it is held together, at some level, by people who are getting closer to retirement every year and code that nobody fully understands anymore.

You know it needs to change. You've known it for years. So has the previous CTO, and the one before that. The reason nothing has happened isn't that leadership doesn't understand the problem. It's that nobody has made the financial case compelling enough to displace the other priorities competing for capital.

This guide is designed to fix that.

The argument for legacy application modernization has historically been made in IT terms: technical debt, security vulnerabilities, scalability constraints, and integration friction. These are real, but they are not how you win a budget from a CFO or a board. You win the budget with numbers, with a clear-eyed accounting of what the current state is actually costing the business, and what the transition to a modern system is credibly worth.

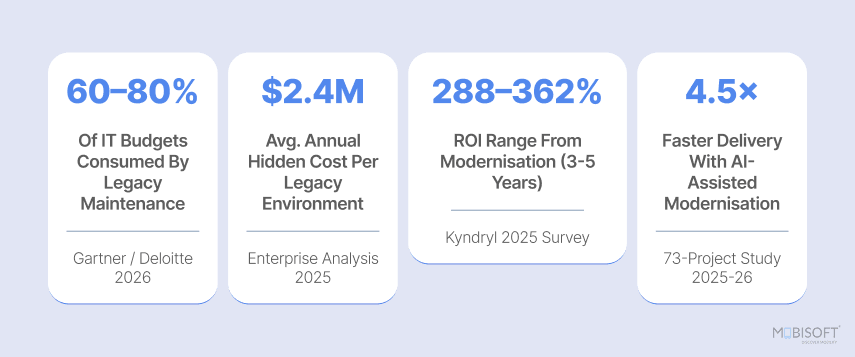

In 2026, that case is stronger than ever. AI-assisted development has fundamentally changed the cost and timeline mathematics of modernizing legacy systems. Legacy modernization ROI benchmarks from real enterprise projects completed in 2024–2025 are now documented. And the business cost of delay, driven by talent scarcity, security exposure, regulatory risk, and lost AI opportunity, has compounded to the point where the financial argument for action is difficult to ignore.

That is a capital allocation decision, not a technology budget request. If you are currently weighing the ongoing cost of keeping legacy systems running against the investment of replacing them, our legacy software maintenance and support services offer structured maintenance to keep those systems stable while modernisation is planned.

What Your Legacy Systems Are Actually Costing You

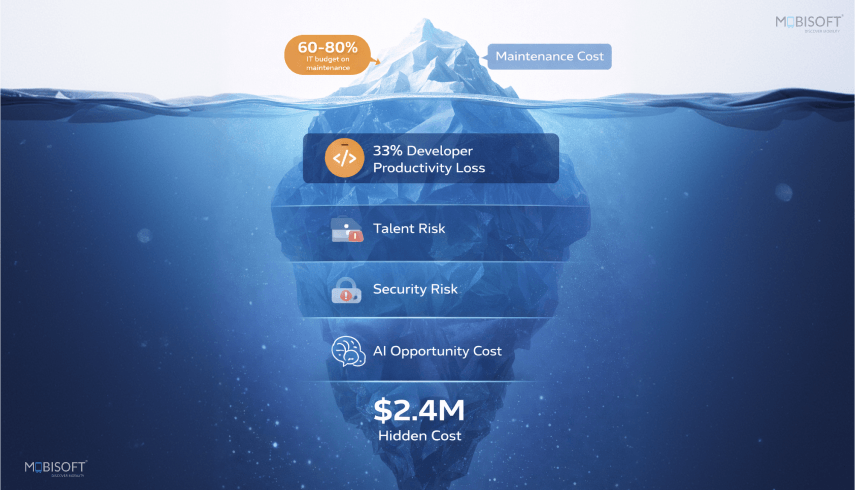

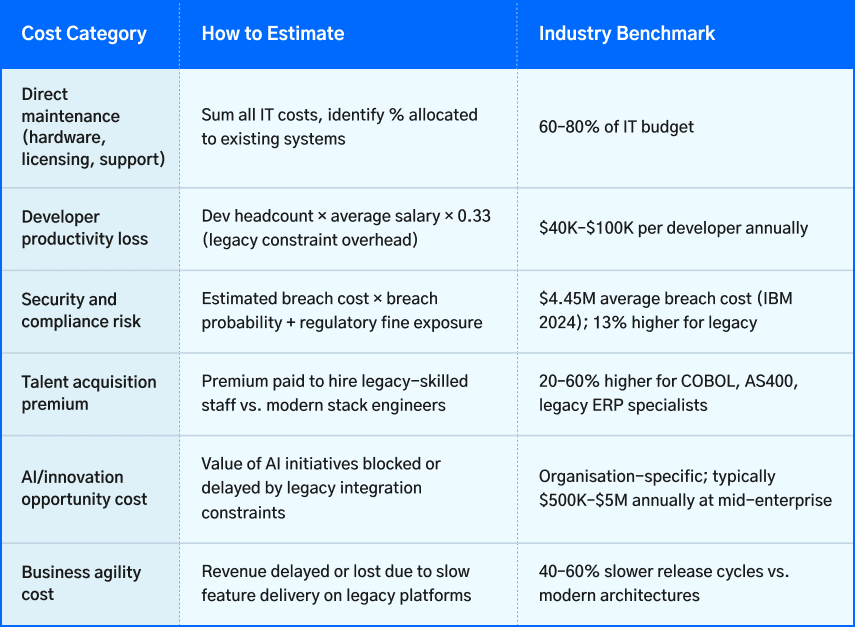

Before you can make the case for modernisation, you need the real number: not the maintenance invoice, but the total cost of keeping your legacy systems running. Most organisations undercount this by 40 to 60% because the costs are distributed across budget lines that don't explicitly say 'legacy system.'

The Direct Maintenance Cost (The Visible Part)

Industry data from Gartner and Deloitte consistently shows enterprises allocating 60 to 80% of their IT budget to maintaining existing systems. For an organisation with a $10M IT budget, that means $6M to $8M per year keeping the lights on.

Worse, that ratio deteriorates every year without intervention. Legacy system maintenance costs increase 10 to 15% annually after warranty expiration, and premium support for end-of-life systems costs 50 to 200% more than standard support. According to a GAO report, the US government's ten legacy systems most in need of modernisation consume roughly $337 million annually, or 80% of those agencies' IT budgets.

The compounding logic is brutal. A legacy environment that costs $2.4 million to maintain in year one will cost approximately $2.7 million in year two as technical debt accumulates. By year five, you're looking at $3.6 million annually for the same system doing the same things.
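The compounding described above is ordinary geometric growth. The sketch below assumes a constant annual growth rate; the 11% figure is an assumed value picked from the 10 to 15% range quoted earlier because it lands close to both the year-two and year-five figures. Substitute your own baseline and rate.

```python
def project_maintenance_cost(year_one_cost: float, annual_growth: float, years: int) -> list:
    """Projected annual maintenance cost for years 1..years.

    Assumes cost grows by the same rate every year (a simplifying assumption).
    """
    return [year_one_cost * (1 + annual_growth) ** (year - 1) for year in range(1, years + 1)]


if __name__ == "__main__":
    # Assumed inputs: $2.4M in year one, 11% annual growth (within the 10-15% range).
    costs = project_maintenance_cost(2_400_000, 0.11, 5)
    for year, cost in enumerate(costs, start=1):
        print(f"Year {year}: ${cost / 1e6:.2f}M")
    print(f"Five-year total: ${sum(costs) / 1e6:.2f}M")
```

With these assumed inputs, year two comes out at about $2.66M and year five at about $3.64M, and the five-year total is just under $15M. That cumulative figure, not the year-one invoice, is the one to put in front of a board.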

The Developer Productivity Cost (The Invisible Part)

This is where most organisations dramatically undercount.

McKinsey research shows that developers working with legacy applications are meaningfully less productive than those working on modern platforms. The Stripe Developer Coefficient report put a number on it: developers spend approximately 33% of their time compensating for legacy system performance issues. This includes managing workarounds, deciphering undocumented code, and building integration patches.

For an organisation with 25 developers earning $120,000 annually, that's roughly $990,000 in lost productivity every year. That figure doesn't appear on any invoice. It shows up as missed feature velocity, longer delivery timelines, and your best engineers choosing competitors who work on modern stacks.
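The productivity figure is a single multiplication. This minimal sketch uses base salary only, as the paragraph does; a fully loaded cost (benefits, overhead) would make the number larger. The 33% share is the Stripe figure quoted above, not a measured value for your team.

```python
def lost_productivity(developers: int, avg_salary: float, time_lost_fraction: float) -> float:
    """Annual salary cost of developer time spent compensating for legacy systems.

    Uses base salary only; a fully loaded cost per developer would be higher.
    """
    return developers * avg_salary * time_lost_fraction


if __name__ == "__main__":
    # 25 developers, $120,000 salary, 33% of time lost (figures from the text above)
    print(f"${lost_productivity(25, 120_000, 0.33):,.0f}")  # prints $990,000
```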

The talent cost nobody models

The average COBOL programmer is now 55 years old. About 10% retire annually. Sixty per cent of COBOL-dependent organisations already cite finding skilled developers as their single biggest operational challenge (2025 survey data).

IBM's Cost of a Data Breach report found that organisations with extensive legacy infrastructure experienced measurably higher breach costs than those with modern systems, because legacy systems can't be patched quickly, instrumented properly, or isolated effectively during an incident.

By 2027, the majority of remaining COBOL-era developers will have retired, and the knowledge they carry doesn't transfer well. In most legacy environments 'the system is the documentation', which is why 42% of critical business logic in legacy systems is at risk when key personnel leave.

The Opportunity Cost (The Strategic Part)

This is the cost that has grown fastest since 2024, and it's the one that finally moves boards.

Generative AI adoption did not just grow in 2025 and 2026; it accelerated dramatically. Enterprises are now deploying AI across customer service, predictive analytics, automated compliance, and process automation. That is the good news. The bad news is what organisations keep discovering once they get started. Their legacy infrastructure turns out to be the single biggest obstacle standing between them and any real AI value. It blocks data access, slows down integration, and makes continuous model training feel nearly impossible.

Legacy systems were never designed for what AI requires. They run on batch processing and siloed databases, which worked fine for monthly reconciliations but cannot support millisecond-latency data access or continuous model training. The resource math alone is brutal. Legacy-heavy organisations still spend roughly 75 to 85 percent of their IT budgets on maintenance, leaving maybe 15 to 25 percent for innovation, including AI. Modernised organisations have flipped that ratio: 30 percent on maintenance, 70 percent on innovation. That difference increasingly separates the leaders from the followers.

In practical terms, if your competitors are deploying AI-powered features, personalisation, and automation while you are managing a COBOL migration project, the gap is not just technical. It's commercial. And it widens every quarter.

The CFO conversation starter

Most modernisation conversations fail because they are presented as IT investment proposals. The conversation that works reframes it as a cost reduction and revenue enablement exercise:

"We are currently spending $X per year maintaining systems that slow down our AI strategy by Y months, expose us to Z% higher breach risk, and cost us $W in developer productivity losses annually. The total annual cost of keeping these legacy systems is $[X+W+estimated risk premium]. Modernisation over [N] months costs $[project cost], with documented returns of 200-300% over three years."

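If you want to generate this statement from a planning script or spreadsheet export, a small template function keeps the arithmetic honest. This is a hypothetical sketch: the field names are illustrative, the risk premium is an estimate you must supply, and the returns clause is left out so it can be filled from your own ROI calculation rather than from industry benchmarks. Note that X + W + risk premium is the annual cost of the current state, not the cost of the modernisation project.

```python
from dataclasses import dataclass


@dataclass
class LegacyCaseInputs:
    annual_maintenance: float      # $X: annual maintenance spend
    ai_delay_months: int           # Y: months of delay to the AI strategy
    breach_risk_uplift_pct: float  # Z: estimated % uplift in breach risk
    productivity_loss: float       # $W: annual developer productivity loss
    risk_premium: float            # your own estimate of annual risk cost (assumption)
    project_months: int            # N: planned modernisation duration
    project_cost: float            # quoted or estimated project cost


def cfo_statement(i: LegacyCaseInputs) -> str:
    """Render the CFO conversation starter from concrete figures."""
    current_state_cost = i.annual_maintenance + i.productivity_loss + i.risk_premium
    return (
        f"We are currently spending ${i.annual_maintenance:,.0f} per year maintaining systems "
        f"that slow down our AI strategy by {i.ai_delay_months} months, expose us to "
        f"{i.breach_risk_uplift_pct:.0f}% higher breach risk, and cost us "
        f"${i.productivity_loss:,.0f} in developer productivity losses annually. "
        f"The total annual cost of keeping these systems is ${current_state_cost:,.0f}. "
        f"Modernisation over {i.project_months} months costs ${i.project_cost:,.0f}."
    )


if __name__ == "__main__":
    # Hypothetical figures for illustration only.
    print(cfo_statement(LegacyCaseInputs(6_000_000, 9, 20, 990_000, 500_000, 14, 4_000_000)))
```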
Five Modernisation Strategies and Their Trade-offs

There isn’t a universally correct legacy system modernization strategy. Each approach has a different risk profile, cost structure, payback timeline, and suitability for different system types. Here are the five primary strategies, compared honestly.

Rehost (Lift and Shift)

Take the existing system and move it to a different infrastructure, usually from on‑premise servers to the cloud, while leaving the application code completely untouched. Explore our cloud migration services to understand what a structured cloud transition looks like at enterprise scale.

Pros:

- Execution is fast, often weeks to a few months.

- Technical risk stays low.

- Infrastructure costs typically drop by 20 to 40 percent right away.

- You do not need extensive application testing beyond validating the new environment.

Cons:

- Technical debt inside the code remains untouched.

- Performance and architectural improvements do not magically appear.

- AI or API integrations still require extra work.

- Cloud costs can climb higher than expected if you do not optimise.

Best for:

- Organisations under time pressure that need immediate cost savings.

- A first step in a longer phased plan.

- Systems with stable functionality that simply need cheaper operations.

Replatform (Lift, Tinker, and Shift)

Move to the cloud while making a few targeted improvements, but stop short of a full re‑architecture. You might switch from a proprietary database to a managed cloud service, containerise the application, or update the runtime environment.

Pros:

- Captures more cloud efficiency gains than a pure lift-and-shift.

- Improves scalability and brings managed-service advantages at moderate risk.

- Moves faster than a complete rewrite.

Cons:

- Still carries forward existing architectural constraints

- Technical debt remains in the application layer

- Limited ability to support modern API and AI integration patterns.

Best for:

- Systems with solid business logic but legacy infrastructure dependencies

- Organisations looking for a middle path between lift-and-shift and full modernisation.

Refactor / Re-architect

Keep the existing business logic but restructure the application architecture, breaking monoliths into microservices, separating concerns, introducing APIs, and modernising data models. The code is rewritten to modern patterns without changing what the business logic does.

Pros:

- Unlocks full cloud-native benefits

- Enables API-first integration for AI and third-party services

- Dramatically improves developer productivity post-modernisation

- Addresses technical debt at source, with 40–60% faster release cycles post-completion.

Cons:

- Highest complexity, highest risk without careful execution

- Requires a deep understanding of existing business logic

- 18–36 month timelines for large systems.

- This is where most projects go over budget and timeline without experienced execution.

Best for:

- Core business systems (ERP, banking platforms, insurance engines) where the business logic has genuine long-term value but the architecture can no longer support modern integration or performance requirements.

Replace (Retire and Replace with SaaS)

Shut down the old system entirely and bring in a modern SaaS product or off-the-shelf platform that does the same job. There is no custom coding; instead, you adopt what already exists.

Pros:

- For commodity functions, this gets you modern capabilities faster than any other method.

- Licensing costs are predictable.

- The vendor handles maintenance, security patches, and version updates.

- You also get immediate access to modern APIs and integrations.

Cons:

- You have to change your business processes to fit the platform.

- Data migration carries real risk.

- SaaS licensing fees add up year after year.

- You become dependent on the vendor.

- If your legacy system has heavy customisation, the off‑the‑shelf option may not fit well at all.

Best for:

- Systems that do commodity work, where your existing customisations do not add much business value.

- Think HR, expense management, basic CRM, or standard financial reporting.

Encapsulate (Strangler Fig / API Wrapper)

Do not replace the legacy system. Instead, wrap it with modern APIs. Those APIs expose the system's functionality to new applications. Then you replace one small module at a time, gradually. New applications call the wrapped APIs while the old system keeps running underneath. Over time, you retire each module.
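In code, the strangler fig pattern comes down to a routing layer that sits in front of both systems. The sketch below is a hypothetical, in-process illustration; in production this role is usually played by an API gateway or reverse proxy, and the module names and handler signature here are assumptions.

```python
from typing import Callable, Dict

# Assumed handler shape: takes a request dict, returns a response dict.
Handler = Callable[[dict], dict]


class StranglerFacade:
    """Single entry point for callers: routes each module to its modern
    implementation once migrated, and to the wrapped legacy system otherwise."""

    def __init__(self, legacy_handlers: Dict[str, Handler]) -> None:
        self._legacy = dict(legacy_handlers)
        self._modern: Dict[str, Handler] = {}

    def migrate(self, module: str, handler: Handler) -> None:
        """Cut one module over to its modern implementation."""
        if module not in self._legacy:
            raise KeyError(f"unknown module: {module}")
        self._modern[module] = handler

    def call(self, module: str, request: dict) -> dict:
        if module in self._modern:
            return self._modern[module](request)
        return self._legacy[module](request)

    def retirement_candidates(self) -> list:
        """Legacy modules whose traffic the facade now sends to modern code."""
        return sorted(self._modern)


if __name__ == "__main__":
    # Hypothetical modules; in reality these would wrap calls into the legacy system.
    facade = StranglerFacade({
        "billing": lambda req: {"source": "legacy", **req},
        "accounts": lambda req: {"source": "legacy", **req},
    })
    facade.migrate("billing", lambda req: {"source": "modern", **req})
    print(facade.call("billing", {"id": 42}))   # routed to the modern service
    print(facade.call("accounts", {"id": 7}))   # still served by the legacy system
```

Callers only ever talk to the facade, so each cutover is a routing change rather than a change to every consuming application.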

Pros:

- Disruption stays low.

- Business keeps running normally throughout the process.

- Risk is taken on gradually instead of all at once.

- You can integrate with modern applications and AI systems immediately.

- No big‑bang cutover means no single point of failure.

Cons:

- The legacy system must stay alive during the entire transition, so maintenance costs continue.

- The full modernisation timeline stretches out significantly.

- You need a very careful design around API boundaries.

- This approach does not work for systems that are close to end‑of‑life or have serious viability concerns.

Best for:

- Mission‑critical systems where downtime is simply not an option.

- Firms that need immediate application modernization services while planning a longer‑term replacement.

- Early-stage planning, when the full scope is still unclear.

Choosing the right strategy depends heavily on your system complexity, risk tolerance, and business goals. Explore our application modernization best practices for a deeper breakdown of how to match the right approach to your specific environment.

How AI-Assisted Development Changed the Economics of Modernisation

This section matters more in 2026 than it would have in 2023. The economics of legacy system modernization have changed significantly because of AI-assisted development tools. Any modernisation proposal that doesn't account for these capabilities is working from outdated assumptions.

What Changed and Why It Matters

The most expensive part of traditional modernisation was never writing the new code. It was understanding the old code: mapping what a 35-year-old COBOL application actually does, identifying the dependencies that aren't documented anywhere, and discovering business rules that exist only in the logic of functions written by engineers who retired in 2014.

That discovery and documentation phase alone could consume the majority of a traditional modernisation project's budget before a single line of modern code was written. It required consultants who speak COBOL, who could read undocumented mainframe applications, and who understood legacy database schemas. A resource pool that was expensive, hard to find, and shrinking.

AI tools such as Claude Code, GitHub Copilot agents, and IBM's watsonx Code Assistant have fundamentally changed this. They can map dependencies across thousands of lines of code automatically, document workflows that no living engineer remembers building, identify implicit coupling through shared files and global state, and surface technical debt before migration begins. The exploration phase that once took months of consultant time now takes days.
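To make "dependency mapping" concrete, here is a deliberately simple, non-AI sketch of the kind of output such tools produce. It scans COBOL source text for static `CALL 'PROGRAM'` statements and `COPY` copybook includes, then lists copybooks shared by more than one program, a common form of implicit coupling. It is an illustration under heavy assumptions: it misses dynamic calls (`CALL identifier`), shared files, JCL, and database access, which is precisely the harder coupling AI-assisted analysis is meant to surface. The program and copybook names are hypothetical.

```python
import re
from collections import defaultdict
from typing import Dict, Set

# Static, literal calls only: CALL 'PROGNAME' or CALL "PROGNAME"
CALL_RE = re.compile(r"\bCALL\s+['\"]([A-Z0-9-]+)['\"]", re.IGNORECASE)
# Copybook includes: COPY NAME
COPY_RE = re.compile(r"\bCOPY\s+([A-Z0-9-]+)", re.IGNORECASE)


def map_dependencies(sources: Dict[str, str]) -> Dict[str, Dict[str, Set[str]]]:
    """Map each program to the programs it calls and the copybooks it includes."""
    deps = {}
    for program, text in sources.items():
        deps[program] = {
            "calls": {name.upper() for name in CALL_RE.findall(text)},
            "copybooks": {name.upper() for name in COPY_RE.findall(text)},
        }
    return deps


def shared_copybooks(deps: Dict[str, Dict[str, Set[str]]]) -> Dict[str, Set[str]]:
    """Copybooks included by more than one program: shared record layouts that couple them."""
    users: Dict[str, Set[str]] = defaultdict(set)
    for program, d in deps.items():
        for copybook in d["copybooks"]:
            users[copybook].add(program)
    return {cb: progs for cb, progs in users.items() if len(progs) > 1}


if __name__ == "__main__":
    # Hypothetical source fragments
    sources = {
        "PAYROLL": "       COPY EMPREC.\n       CALL 'TAXCALC' USING WS-EMP.\n",
        "TAXCALC": "       COPY EMPREC.\n       COPY TAXTBL.\n",
    }
    deps = map_dependencies(sources)
    print(deps)
    print(shared_copybooks(deps))
```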

What AI does well in modernisation (and what it doesn't)

AI EXCELS AT:

- Automated codebase analysis and dependency mapping – understanding what the legacy system does

- Documentation generation for undocumented systems – producing specs from code that has none

- Code translation assistance – converting COBOL to Java, VB6 to C#, legacy SQL to modern ORM

- Test generation – creating equivalence tests that verify modern code produces identical outputs

- Refactoring suggestions – identifying patterns, anti-patterns, and modernisation opportunities

AI CANNOT REPLACE:

- Human judgment on business priorities and modernisation sequencing

- Domain expert knowledge about edge cases and regulatory requirements

- Architecture decisions for the target modern system

- Stakeholder alignment and change management

- Validation that business logic has been correctly preserved (humans must sign off)

Thoughtworks (2026): 'Modernisation is rarely about preserving the past in a new syntax. It's about aligning systems with current market demands. Even if AI were capable of highly reliable code translation, blind conversion would risk recreating the same system with the same limitations in another language.'

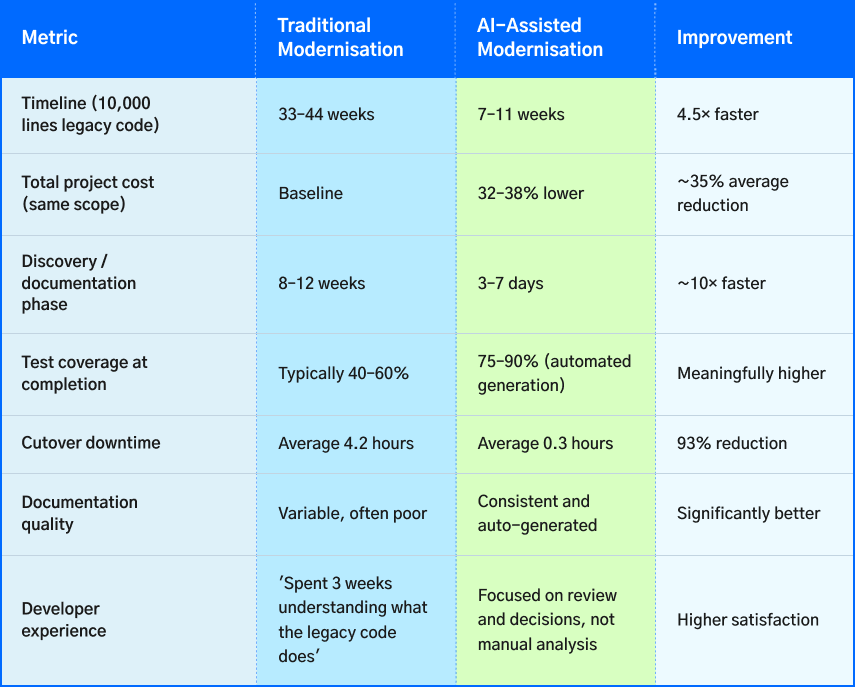

The Numbers: AI-Assisted vs. Traditional Modernisation

A structured review covered 73 legacy application modernization projects completed in 2025–2026: 34 delivered using traditional methods and 39 using AI-assisted tools. Its findings are changing how modernisation engagements are scoped and priced.

As a result, legacy modernization projects whose ROI was previously borderline now have a credibly shorter payback window. A project that would have taken 18 months traditionally can be delivered in 5 to 7 months with AI tooling, and the cost structure changes substantially.

The COBOL Modernisation Case Study

COBOL is the most dramatic example because the talent gap is most acute and the codebase is the largest. An estimated 220 billion lines of COBOL run in active production today. COBOL processes 95% of ATM transactions, runs the core systems of 70% of global banks, and powers significant government infrastructure.

Traditional COBOL modernisation for a mid-sized financial system costs $4 to $9 million and runs 18 to 36 months. The largest projects run significantly higher. These numbers kept most legacy software modernization initiatives on the roadmap indefinitely, always the next capital cycle's priority.

The average cost of a typical COBOL modernisation project dropped from $9.1 million in 2024 to $7.2 million in 2025, according to industry tracking. This is primarily due to AI tooling reducing the discovery and translation phases that consumed the majority of the project budget. That's a 21% application modernization cost reduction in a single year, with further improvement expected as tools mature.

Anthropic, March 2026 - Claude Code Modernisation Framework

"Legacy code modernisation stalled for years because understanding the code costs more than rewriting it. AI flips that equation. Claude Code automates the exploration and analysis phases that historically consumed the majority of total project effort, enabling teams to modernise systems in quarters instead of years."

In March 2026, Anthropic launched a Code Modernisation starter kit as part of its Claude Partner Network, describing legacy code modernisation as 'one of the highest-demand enterprise workloads' and the area where Claude's agentic coding capabilities 'most directly translate into client outcomes.'

The Right Way to Use AI in Your Modernisation Project

The worst outcome from the AI modernisation story is organisations expecting a one-click solution to a complex organisational change programme. The best outcome is using AI tooling to dramatically reduce the cost and time of the phases where human effort has historically been the highest-cost and least value-adding.

- Phase 1: AI-powered codebase analysis

Use Claude Code or equivalent to map your legacy system, extract business logic, identify dependencies, and generate documentation. This phase, which used to take months, now takes days or weeks. Do it before writing a single specification.

- Phase 2: Human-led modernisation planning

Take the AI-generated analysis and have your domain experts and architects make the decisions about what to retain, what to simplify, and what to replace. AI cannot make these decisions because it does not know your regulatory requirements, your technical debt priorities, or your business trajectory.

- Phase 3: AI-assisted translation and refactoring

Use AI for code translation, test generation, and refactoring suggestions. Use human engineers to validate, review, and handle the edge cases that require contextual judgment. The share of code that AI generates can be high; the share of that output validated by humans should be 100%.

- Phase 4: Parallel validation

Run old and new systems side by side, validating that equivalence is maintained at each increment. AI can assist with equivalence testing; humans must sign off on business logic correctness, especially in regulated environments.

- Phase 5: Incremental cutover

Migrate users and data one module or service at a time. The strangler fig pattern, combined with AI-assisted test coverage, enables confident incremental cutover with a 0.3-hour average downtime vs. 4.2 hours for traditional big-bang migrations.
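Phase 4's parallel validation can be pictured as a harness that feeds identical inputs to both implementations and reports every difference for human review. In this hypothetical sketch, the "legacy" routine rounds interest half-up to the cent and the "modern" port uses banker's rounding (half-to-even), a small, plausible translation slip. Both functions and the 3.5% rate are invented for illustration.

```python
from decimal import Decimal, ROUND_HALF_EVEN, ROUND_HALF_UP
from typing import Any, Callable, Iterable, List, Tuple

CENT = Decimal("0.01")
RATE = Decimal("0.035")  # hypothetical interest rate


def compare_outputs(inputs: Iterable[Any],
                    legacy: Callable[[Any], Any],
                    modern: Callable[[Any], Any]) -> List[Tuple[Any, Any, Any]]:
    """Run both implementations on the same inputs; return (input, legacy, modern) for each mismatch."""
    mismatches = []
    for item in inputs:
        old, new = legacy(item), modern(item)
        if old != new:
            mismatches.append((item, old, new))
    return mismatches


def legacy_interest(balance: Decimal) -> Decimal:
    # Hypothetical legacy rule: round half up to the cent
    return (balance * RATE).quantize(CENT, rounding=ROUND_HALF_UP)


def modern_interest(balance: Decimal) -> Decimal:
    # Hypothetical port that silently switched to half-to-even rounding
    return (balance * RATE).quantize(CENT, rounding=ROUND_HALF_EVEN)


if __name__ == "__main__":
    balances = [Decimal("100.00"), Decimal("3.00"), Decimal("1.00")]
    for item, old, new in compare_outputs(balances, legacy_interest, modern_interest):
        print(f"balance {item}: legacy {old}, modern {new}")
```

Only the 3.00 balance diverges (0.11 versus 0.10), which is exactly the kind of edge case a domain expert must adjudicate before sign-off.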

To understand how AI is reshaping the way teams plan and build software end-to-end, read our guide on AI-assisted software development and its impact on delivery timelines and project outcomes.

ROI Framework: Building the Business Case

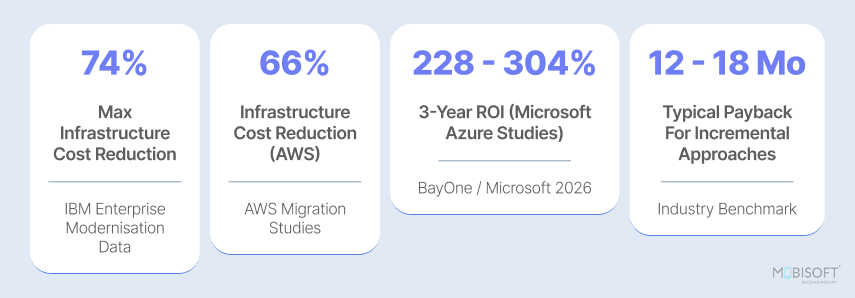

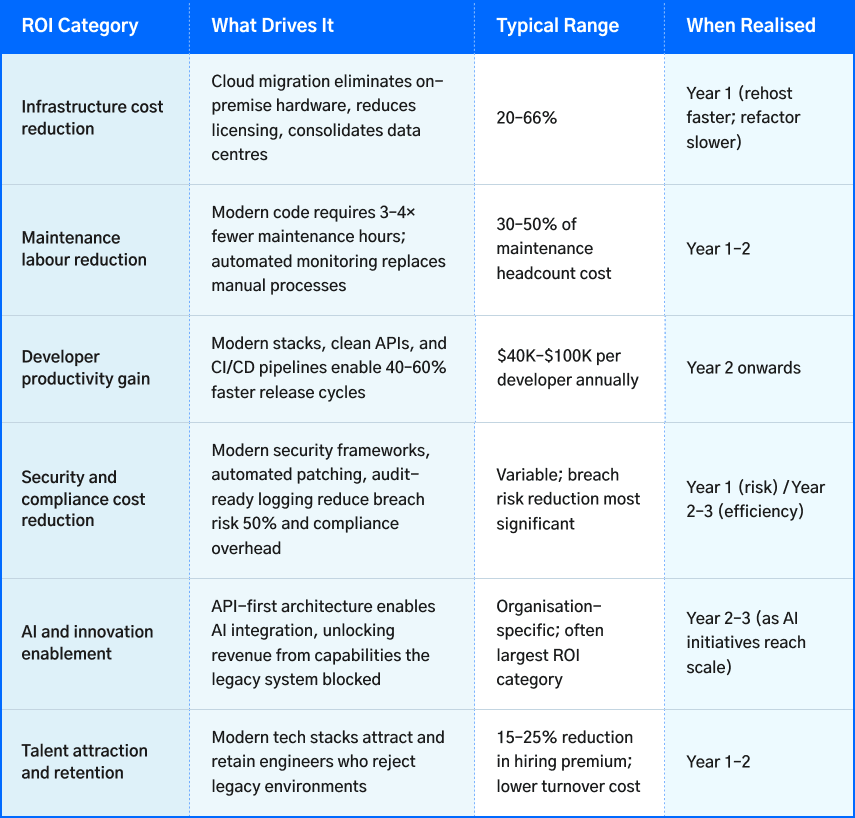

The 2026 data gives a clear answer on whether digital transformation of this kind pays off. What varies is the profile: how quickly the returns materialise, and which categories of return are largest for a given organisation.

The Documented Return Benchmarks

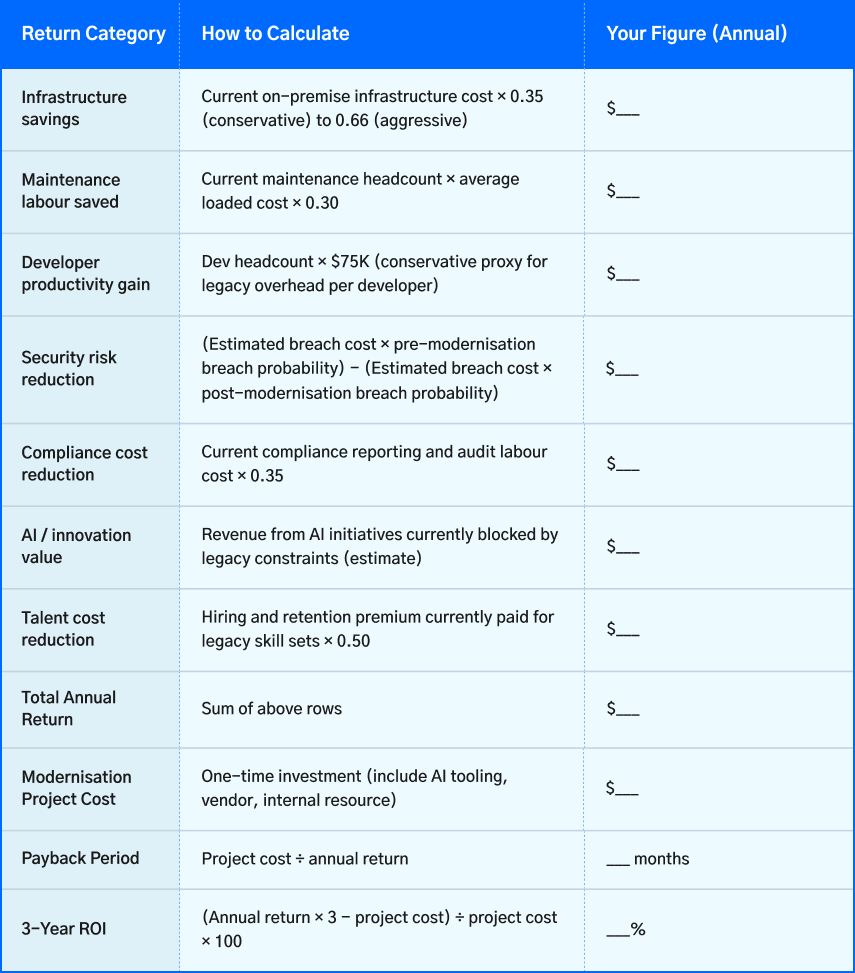

The ROI Calculator Framework

Use this framework to populate your own numbers. The categories below represent the standard return sources for an enterprise application modernization project. Complete each row with your organisation's specific figures, then compare against the project cost.
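As a starting point, the framework can be expressed as a few lines of arithmetic. This is a simplified sketch: it assumes flat annual benefits that begin immediately, and the benefit categories are illustrative ones drawn from the cost areas discussed earlier in this guide (maintenance, developer productivity, infrastructure, risk), not a prescribed list. Replace them with the rows and figures your finance team agrees on.

```python
from typing import Dict


def roi_summary(annual_benefits: Dict[str, float], project_cost: float, years: int = 3) -> Dict[str, float]:
    """Simple ROI and payback, assuming flat annual benefits that start immediately."""
    annual_total = sum(annual_benefits.values())
    if annual_total <= 0 or project_cost <= 0:
        raise ValueError("benefits and project cost must be positive")
    total_return = annual_total * years
    return {
        "annual_benefit": annual_total,
        "roi_pct": (total_return - project_cost) / project_cost * 100,
        "simple_payback_months": project_cost / (annual_total / 12),
    }


if __name__ == "__main__":
    # Hypothetical annual benefit rows; replace with your organisation's figures.
    benefits = {
        "maintenance cost reduction": 1_800_000,
        "developer productivity recovered": 500_000,
        "infrastructure savings": 400_000,
        "risk reduction (estimated)": 300_000,
    }
    summary = roi_summary(benefits, project_cost=3_000_000, years=3)
    for key, value in summary.items():
        print(f"{key}: {value:,.1f}")
```

With these hypothetical rows, $3M of annual benefit against a $3M project gives a 200% three-year ROI and a 12-month simple payback. Because real returns ramp up over time, treat the simple payback as the earliest plausible payback, not the expected one.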

What the data says about payback periods

Incremental modernisation approaches (encapsulate, phased refactor) tend to reach positive ROI in 12 to 14 months, because returns begin in the first phase while later phases are still in progress.

Big-bang re-architecture and replacement projects typically show 18–36 month payback periods, because the investment is front-loaded and returns require the full system to be live.

With AI-assisted modernisation reducing timelines by 4.5×, the cost-benefit balance of legacy modernization has shifted substantially. Projects that would previously take 18–24 months now often take 8–14 months, which changes the capital allocation calculus significantly.
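The payback difference between incremental and big-bang delivery comes from when benefits start, and a month-by-month cumulative cash flow makes it visible. The sketch below assumes the same total spend and the same full-run benefit for both approaches; all figures are hypothetical, and the scenario functions are simplifications rather than a forecasting model.

```python
from typing import List, Optional, Tuple

HORIZON_MONTHS = 60  # assumed five-year evaluation window


def payback_month(costs: List[float], benefits: List[float]) -> Optional[int]:
    """First month (1-based) in which cumulative benefit covers cumulative cost."""
    cumulative = 0.0
    for month, (cost, benefit) in enumerate(zip(costs, benefits), start=1):
        cumulative += benefit - cost
        if cumulative >= 0:
            return month
    return None  # no payback within the horizon


def big_bang(build_months: int, monthly_spend: float, full_benefit: float) -> Tuple[List[float], List[float]]:
    """All spend during the build; benefits only once the whole system is live."""
    costs = [monthly_spend if m < build_months else 0.0 for m in range(HORIZON_MONTHS)]
    benefits = [full_benefit if m >= build_months else 0.0 for m in range(HORIZON_MONTHS)]
    return costs, benefits


def incremental(phases: int, phase_months: int, monthly_spend: float,
                full_benefit: float) -> Tuple[List[float], List[float]]:
    """Same spend, but each completed phase starts returning its share of the benefit."""
    per_phase = full_benefit / phases
    costs = [monthly_spend if m < phases * phase_months else 0.0 for m in range(HORIZON_MONTHS)]
    benefits = [min(m // phase_months, phases) * per_phase for m in range(HORIZON_MONTHS)]
    return costs, benefits


if __name__ == "__main__":
    # Hypothetical: 12 months of work at $250k/month, $250k/month benefit at full run rate.
    print("Big-bang payback month:", payback_month(*big_bang(12, 250_000, 250_000)))
    print("Incremental payback month:", payback_month(*incremental(4, 3, 250_000, 250_000)))
```

With these inputs the incremental plan pays back in month 20 and the big-bang plan in month 24; the only difference between the two runs is when benefits begin.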

For organisations evaluating how broader digital transformation services factor into the long-term ROI picture, this is where cloud readiness, process automation, and modernisation strategy intersect.

Industry-Specific Modernisation Context

Modernising legacy systems looks different across industries. The drivers, constraints, and ROI profiles vary significantly. Here is what matters most by sector.

Financial Services - The COBOL Reality

Over 70% of global banks still rely on legacy core banking systems, and 43% of global banking systems run on COBOL. The talent pool is the primary constraint: 60% of COBOL-dependent organisations can't fill open developer roles. The risks of legacy systems compound as the remaining talent ages out.

The ROI case in banking is strong: documented outcomes include 30-40% IT maintenance cost reduction, 50% faster time-to-market for new products, and 2.5x higher revenue growth at banks that have completed core modernisation.

The key constraint is regulatory continuity. Banking regulators require unbroken audit trails across migration, which is why the strangler fig/encapsulation approach dominates banking modernisation: it runs old and new systems in parallel with full data reconciliation. AI tooling is particularly valuable here because it can extract and document regulatory logic automatically, reducing the risk that anything is lost in translation.

Healthcare - Compliance First

Healthcare modernisation is driven by two converging forces: the pressure to enable AI for diagnostics and operational efficiency, and the regulatory requirement that systems handling patient data meet current security and compliance standards. Legacy EHR systems running on unsupported infrastructure carry significant exposure under HIPAA, GDPR (in the EU), and equivalent national standards.

A healthcare provider that modernised its EHR infrastructure to the cloud, with AI-enabled patient insights, achieved a 50% reduction in IT maintenance costs at the 12-month checkpoint. It also passed compliance audits it had previously struggled with. The AI enablement was the business case; the compliance improvement was the risk case.

Manufacturing and Industrial - The OT/IT Convergence Problem

Manufacturing is dealing with a distinct version of the legacy problem: operational technology (OT) systems such as SCADA, industrial control systems, and legacy PLCs. These were never designed to connect to modern IT networks, and now they must. The modernisation challenge isn't just software; it's the integration architecture between production-floor systems and enterprise IT.

The API encapsulation strategy is dominant here: wrapping OT systems with modern interfaces that feed data to cloud-based analytics and AI platforms, without replacing operational hardware that has a 20-year lifespan.

Government and Public Sector - The $337M Problem

The ten federal US legacy systems most in need of modernisation cost $337 million annually to operate and maintain, consuming roughly 80% of those agencies' IT budgets. The political and procurement constraints make these systems harder to modernise than private-sector equivalents, but the financial case is unambiguous.

Government modernisation is increasingly using AI-assisted approaches precisely because of the talent shortage. Government agencies cannot compete for COBOL expertise at private-sector salaries. IT modernization services that reduce reliance on scarce legacy expertise are both a cost argument and a feasibility argument.

Modernisation Project Failures and How to Avoid Them

The failure rate in legacy application modernization is sobering. Not because the technical challenges are intractable, but because most failures happen before a line of code is written.

The Five Most Common Failure Modes

Failure Mode 1: No executive sponsor who understands the full economic impact

Modernisation projects without a business-outcome sponsor lose budget priority the moment a competing initiative appears. The sponsor must be able to articulate the legacy modernization ROI in business terms, not IT terms.

Fix: Before the project starts, build the ROI framework described earlier in this guide. Get the CFO or a board member to co-own the business case.

Failure Mode 2: Attempting to modernise everything simultaneously

Modernising everything at once looks clean on paper. When every system seems broken in its own way, a big comprehensive overhaul feels more strategic than doing things piece by piece. But here is what actually happens: big-bang modernisation projects almost always run over budget and past their deadlines. Worse, they carry a level of business disruption that most organisations never fully prepare for.

Fix: Start with one system or one module and deliver a win there, then use the lessons to expand. In a typical codebase, 80% of technical debt impact derives from 20% of modules, so find that 20% first.
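Finding "that 20%" is a ranking exercise. This sketch assumes you already have some per-module impact score (for example, incidents or change effort attributed to each module; the metric is yours to choose) and returns the smallest set of top-ranked modules that together account for a target share of the total. Module names in the example are hypothetical.

```python
from typing import Dict, List


def pareto_hotspots(impact_by_module: Dict[str, float], target_share: float = 0.8) -> List[str]:
    """Smallest set of highest-impact modules whose combined impact reaches target_share of the total."""
    total = sum(impact_by_module.values())
    ranked = sorted(impact_by_module.items(), key=lambda kv: kv[1], reverse=True)
    selected: List[str] = []
    covered = 0.0
    for module, impact in ranked:
        if covered >= target_share * total:
            break
        selected.append(module)
        covered += impact
    return selected


if __name__ == "__main__":
    # Hypothetical impact scores per module
    scores = {"billing": 60, "ledger": 25, "reports": 10, "auth": 5}
    print(pareto_hotspots(scores))  # prints ['billing', 'ledger']
```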

Failure Mode 3: Treating modernisation as purely a technology exercise

Even technically sound modernisation projects fail when they are planned by technology teams without adequate input from business, operations, legal, and compliance. Business logic encoded in legacy systems decades ago, such as edge cases, regulatory handling, and accommodations for customer behaviour, stays invisible to technology-only projects until something breaks in production.

Fix: Map business processes before writing specifications. Include domain experts in every discovery session. Let AI generate the first draft of the business logic documentation; let humans validate it before migration begins.

Failure Mode 4: Underestimating integration complexity

Legacy systems are never truly alone. They have grown connections to other systems over many years, often through custom integrations, file transfers, shared databases, and synchronous calls that no one bothered to document. Miss even one of those dependencies during modernisation, and you are looking at cascading failures in production. That is not theoretical. That happens regularly.

Fix: Require a complete integration map before any architecture decisions are made. AI-assisted dependency analysis is particularly effective here, because it surfaces implicit coupling that manual analysis often misses.
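Once an integration map exists, the question "what breaks if we change this?" is a graph traversal. In this hypothetical sketch, the map records which systems consume each system's data or services, and a breadth-first search returns everything downstream, including indirect consumers that are easy to miss by eye. System names are invented.

```python
from collections import deque
from typing import Dict, List, Set


def downstream_impact(system: str, consumers: Dict[str, List[str]]) -> Set[str]:
    """All systems that directly or transitively consume `system` (breadth-first search)."""
    affected: Set[str] = set()
    queue = deque([system])
    while queue:
        current = queue.popleft()
        for consumer in consumers.get(current, []):
            # Skip the starting system and anything already seen (handles cycles)
            if consumer != system and consumer not in affected:
                affected.add(consumer)
                queue.append(consumer)
    return affected


if __name__ == "__main__":
    # Hypothetical integration map: system -> systems that consume its data or services.
    integration_map = {
        "core-ledger": ["billing", "gl-export"],
        "billing": ["crm"],
        "gl-export": ["regulatory-reporting"],
        "crm": [],
    }
    print(sorted(downstream_impact("core-ledger", integration_map)))
    # prints ['billing', 'crm', 'gl-export', 'regulatory-reporting']
```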

Failure Mode 5: Change management as an afterthought

Then there is the human side. New systems bring new workflows, new training requirements, and new habits. You can deliver technically perfect software, but if the user base does not come along, you have succeeded as an engineering project and failed as a business transformation. This happens most often in organisations where staff have used the same system for fifteen years or more.

Fix: Invest in change management proportional to the workflow disruption. Plan user training and adoption programmes as first-class project workstreams, not a final phase that gets cut when the project runs over.

Organisations that approach modernisation as part of a broader capability build, not just a system swap, often leverage enterprise software development services to ensure the new architecture is built for scale and long-term maintainability from day one.

Building Your Modernisation Roadmap

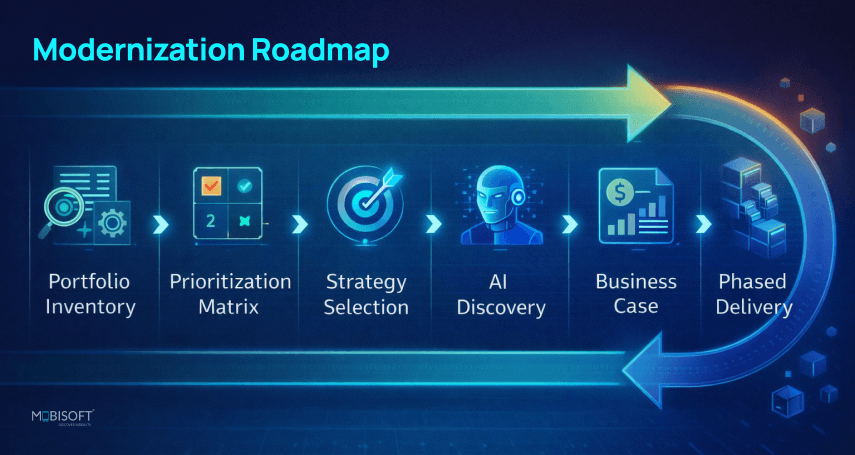

Here is a practical roadmap for moving from 'we know we need to modernise' to a funded, scoped initiative with a credible delivery plan.

Portfolio inventory

Start by building a complete inventory of every legacy system you have. For each one, note its business function, what it costs to maintain, which other systems it depends on, and where the real risks live. Those risks include things like talent concentration (what happens when that one expert leaves?), security posture, regulatory exposure, and whether the system can support any kind of AI work at all.

Prioritisation matrix

Now score each system on two questions. First, what is the business impact if this system fails or slows down? Think revenue, compliance, and competitive capability. Second, how urgent is legacy system modernization for this system? That means looking at talent risk, security exposure, and whether the system is blocking innovation. Plot every system on a two-by-two grid, and prioritise the ones that land in the high-impact, high-urgency quadrant.
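The two-question scoring can be kept consistent across systems with a small helper. This sketch assumes each factor is scored 1 to 5 by the assessment team, averages the factors for each axis, and uses 3 as the high/low cut-off; the scale, the threshold, the quadrant labels, and the system names are all assumptions to adjust.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class SystemScores:
    name: str
    # Business impact factors, scored 1-5 (assumed scale)
    revenue: int
    compliance: int
    competitive: int
    # Urgency factors, scored 1-5 (assumed scale)
    talent_risk: int
    security: int
    innovation_block: int

    def impact(self) -> float:
        return (self.revenue + self.compliance + self.competitive) / 3

    def urgency(self) -> float:
        return (self.talent_risk + self.security + self.innovation_block) / 3


def quadrant(s: SystemScores, threshold: float = 3.0) -> str:
    high_impact = s.impact() >= threshold
    high_urgency = s.urgency() >= threshold
    if high_impact and high_urgency:
        return "priority"
    if high_impact:
        return "plan"
    if high_urgency:
        return "urgent, lower impact"
    return "monitor"


def priorities(systems: List[SystemScores]) -> List[str]:
    """Names of systems in the high-impact, high-urgency quadrant, most urgent first."""
    chosen = [s for s in systems if quadrant(s) == "priority"]
    return [s.name for s in sorted(chosen, key=lambda s: (s.urgency(), s.impact()), reverse=True)]


if __name__ == "__main__":
    # Hypothetical portfolio
    portfolio = [
        SystemScores("expense-tool", 2, 2, 1, 2, 3, 1),
        SystemScores("payroll", 4, 5, 3, 3, 3, 3),
        SystemScores("core-banking", 5, 5, 4, 5, 4, 5),
    ]
    for system in portfolio:
        print(system.name, quadrant(system))
    print(priorities(portfolio))
```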

Strategy selection

Finally, pick a modernisation approach for each priority system. Match the legacy system modernization strategy to the system's complexity, your organisation's tolerance for risk, and the timeline you can actually support. A conservative approach that actually finishes is always better than an ambitious one without the resources to complete it, so choose what you can deliver.

AI-assisted discovery

Before any vendor is selected or any specification is written, run an AI-powered codebase analysis on your priority systems. In days rather than months, this produces the documentation, dependency maps, and business logic extracts that every subsequent project step depends on.

Business case construction

Use the ROI framework described earlier in this guide. Quantify the application modernization cost breakdown, the project cost with AI-assisted tooling reflected in the estimate, and the return timeline. Express the result in five-year NPV terms for board presentation.
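Five-year NPV is the standard discounted-cash-flow sum. The sketch assumes year-end cash flows, with the project cost entered as a negative year-0 amount. The 8% discount rate and all amounts are hypothetical; in practice the rate should be the cost of capital your finance team uses.

```python
from typing import List


def npv(rate: float, cash_flows: List[float]) -> float:
    """Net present value; cash_flows[t] is the net cash flow at the end of year t
    (t = 0 is today and is not discounted)."""
    return sum(cf / (1 + rate) ** t for t, cf in enumerate(cash_flows))


if __name__ == "__main__":
    # Hypothetical: $3M project cost today, $1.2M net annual benefit for five years, 8% rate.
    flows = [-3_000_000] + [1_200_000] * 5
    print(f"Five-year NPV: ${npv(0.08, flows):,.0f}")
```

Under these assumptions the five-year NPV is roughly $1.79M; a positive NPV at your organisation's own discount rate is the threshold most finance teams will look for.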

Phased delivery planning

Design the project in increments of 8 to 16 weeks, each of which delivers demonstrable business value. Never propose a 36-month project that delivers no business value until month 30. Early delivery phases build stakeholder confidence and provide evidence that validates the broader programme.

Vendor selection

Apply the evaluation criteria set out in the ten vendor questions later in this guide. Pay particular attention to AI tooling integration, regulatory and compliance track record for your industry, and reference checks with comparable organisations.

As you build your roadmap, it is increasingly important to understand how enterprise GenAI fits into the post-modernisation architecture. See how leading organisations are approaching enterprise GenAI adoption to future-proof the systems you are building today.

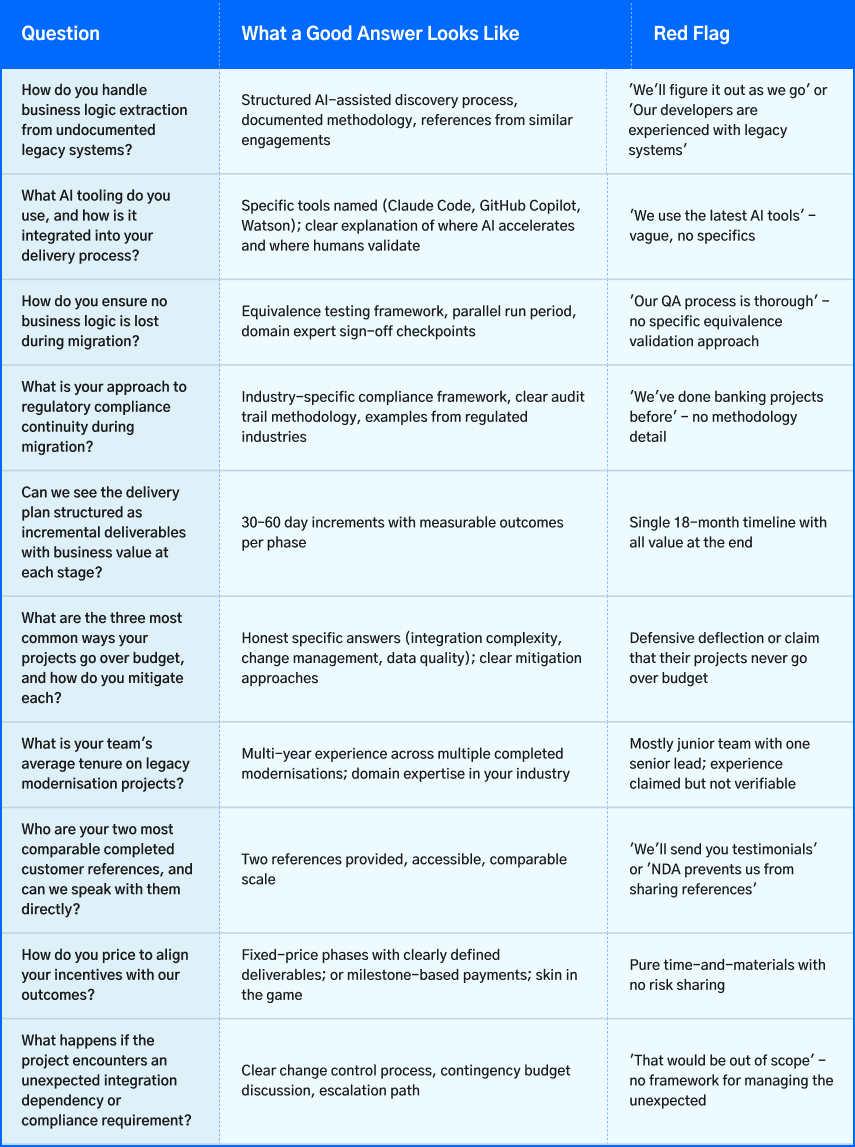

Ten Questions to Ask Any Modernisation Vendor Before Signing

The vendor market for legacy application modernization ranges from world-class to predatory. Here are the ten questions that separate capable vendors from those who will tell you whatever is required to close the deal.

How Mobisoft Approaches Legacy Modernisation

Mobisoft has been delivering legacy modernisation programmes for enterprise clients across financial services, healthcare, logistics, and industrial sectors for over a decade. Our application modernization services integrate AI tooling to deliver faster, more accurate, and more cost-effective outcomes than traditional delivery.

Our Delivery Philosophy

- AI-assisted from day one: We integrate Claude Code and GitHub Copilot into our delivery workflow as standard, not as an experiment but as our default approach. Every engagement begins with AI-powered codebase analysis before any specification writing.

- Incremental value delivery: Every phase of every project is designed to deliver demonstrable business value. No 24-month programmes that deliver value only at the end; instead, 8–12-week increments that you can see, test, and measure.

- Domain expertise stays yours: We bring architecture, AI tooling, and delivery management. Your domain experts and business users own the business logic validation. Knowledge transfer is continuous, not a final phase.

- Transparent cost structure: We scope projects based on AI-assisted discovery, not assumptions. The discovery phase happens before we quote the delivery phase, because the alternative is quoting from guesswork.

- Regulated-industry track record: We have delivered modernisation in environments with HIPAA, GDPR, SOC 2, and financial services regulatory requirements. Compliance continuity is a first-class project concern, not a final audit.

Our Engagement Model

AI Discovery Sprint (2–4 weeks)

AI-assisted codebase analysis, dependency mapping, business logic extraction, risk assessment, and integration inventory. Deliverable: a comprehensive system documentation package and a prioritised modernisation roadmap. This phase is fixed-price and can be completed without committing to a full programme.

Strategy and Business Case (2 weeks)

Using the discovery output, we work with your team to select the right modernisation approach, scope the first delivery phase, and build the ROI model for the full programme. Deliverable: a business case ready for funding approval and a Phase 1 delivery plan.

Phased Delivery (8–12 week sprints)

Incremental modernisation in defined phases, each with a specific deliverable, business value, and measurement criteria. AI tooling accelerates translation, test generation, and documentation at every stage.

Parallel Validation and Cutover

Old and new systems run in parallel with full equivalence validation before cutover. For most enterprise systems, the target downtime window is under one hour.
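Equivalence validation during a parallel run can be illustrated with a minimal Python sketch: replay identical inputs through both implementations and flag every record where the outputs disagree. The two interest functions are hypothetical stand-ins (in a real parallel run you would typically compare the systems' recorded outputs rather than call them side by side), and the zero tolerance is an assumption you would agree with business owners for each calculation.

```python
from decimal import Decimal, ROUND_DOWN, ROUND_HALF_UP
from typing import Callable, Iterable

Record = dict[str, Decimal]

def compare_runs(
    inputs: Iterable[Record],
    legacy: Callable[[Record], Decimal],
    modern: Callable[[Record], Decimal],
    tolerance: Decimal = Decimal("0.00"),
) -> list[tuple[Record, Decimal, Decimal]]:
    """Run both implementations on identical inputs and collect every disagreement."""
    mismatches = []
    for record in inputs:
        old, new = legacy(record), modern(record)
        if abs(old - new) > tolerance:
            mismatches.append((record, old, new))
    return mismatches

# Hypothetical stand-ins for one business rule in each system.
def legacy_interest(rec: Record) -> Decimal:
    # Legacy behaviour assumed here: truncate to whole cents.
    return (rec["balance"] * rec["rate"]).quantize(Decimal("0.01"), rounding=ROUND_DOWN)

def modern_interest(rec: Record) -> Decimal:
    # Rewritten code rounds half-up instead: a typical source of silent drift.
    return (rec["balance"] * rec["rate"]).quantize(Decimal("0.01"), rounding=ROUND_HALF_UP)

if __name__ == "__main__":
    sample = [
        {"balance": Decimal("1000.00"), "rate": Decimal("0.05")},
        {"balance": Decimal("333.33"), "rate": Decimal("0.015")},
    ]
    diffs = compare_runs(sample, legacy_interest, modern_interest)
    for rec, old, new in diffs:
        print(f"MISMATCH {rec}: legacy={old} modern={new}")
    print("Ready for cutover" if not diffs else f"{len(diffs)} mismatch(es): hold cutover")
```

The comparison deliberately collects every differing record rather than stopping at the first, so the full extent of a rounding or logic discrepancy is visible before the cutover decision.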

Post-Modernisation Optimisation

The 90 days after cutover are when most AI and innovation work becomes possible. We remain engaged through this period to ensure the new infrastructure is used to its full potential rather than serving as a like-for-like replacement.

Start With an AI Discovery Sprint: Zero Commitment

The highest-value first step for any organisation with significant legacy infrastructure is a 2–4-week AI-assisted discovery sprint. We analyse your system, produce the documentation and dependency maps that planning requires, and give you a scoped business case before you commit to anything else.

About Mobisoft Infotech

Mobisoft Infotech is a Pune-based company with over 10 years of experience delivering digital transformation services and cloud migration programmes for clients across financial services, healthcare, logistics, and manufacturing. We build in AI-assisted development as standard, not as an add-on.

Frequently Asked Questions

How long does a typical legacy modernisation project take?

Timelines vary enormously by scope, approach, and organisational readiness. Rehosting a single system can take 4 to 12 weeks. A full refactor of a core enterprise system (ERP, banking core, claims platform) typically runs 18 to 36 months traditionally, and 6 to 12 months with AI-assisted delivery for moderately complex systems. Phased programmes running multiple systems over several years are common in large enterprises. The key variable is the AI tooling decision: projects that use AI for codebase analysis, documentation, and code translation can deliver the same scope in roughly a third of the traditional timeline.

What does legacy modernisation typically cost?

There is no single number: a frustrating answer, but one that reflects reality. The cost of legacy system modernization is driven by four factors: system complexity (lines of code, number of integrations), modernization strategy (rehost is cheapest; full refactor is most expensive), team location (offshore teams reduce labour cost significantly), and AI tooling adoption. A realistic range for a mid-enterprise refactor of one major system is $100K to $2M; with AI-assisted tooling, the same scope costs 30 to 40% less. The more useful framing is total cost of ownership (TCO): compare the legacy system maintenance cost against the modernisation investment using the legacy modernization ROI calculation framework in Section 5.

Should we build our own team or use a vendor?

Most organisations use a hybrid: a vendor leads the project and provides architecture, AI tooling, and delivery management; internal teams provide domain expertise, business validation, and post-modernisation maintenance. A purely internal approach is viable if you already have the relevant modern stack expertise and the bandwidth, which most organisations maintaining legacy systems don't. A purely external vendor approach creates knowledge transfer risk post-delivery. The hybrid model transfers knowledge progressively throughout the engagement.

What is the minimum viable first step?

If budget and organisational readiness make a full legacy system upgrade programme premature, the minimum viable first step is AI-assisted codebase analysis and documentation. This costs a fraction of a full project, takes weeks rather than months, and produces the business logic documentation, dependency maps, and risk assessment that all future planning requires. It is, in effect, the discovery phase without a commitment to any subsequent action. Every organisation with significant legacy infrastructure should have this analysis done, regardless of whether it has a modernisation project scheduled.

How do we handle the talent gap if we don't have COBOL engineers?

This is now less of a barrier than it was in 2023. AI tools can read and analyse COBOL code without requiring a COBOL developer. The remaining human requirements are domain experts who understand what the system does (not how it's written), modern stack engineers who can design and build the target architecture, and validation specialists who can confirm business logic equivalence. A competent AI-enabled modernisation partner can combine your domain knowledge with their modern stack expertise, without requiring you to find COBOL specialists.

What about security risks during migration?

Migration creates a temporary dual-system environment that requires careful security management. Both systems must be secured, access controls must be consistent, and data synchronisation must not create exploitable exposure. Legacy system security risks are managed through parallel-run architectures with isolated network segments and continuous security monitoring throughout the transition period. In almost every case, the post-modernisation security posture is significantly stronger than the legacy one: modern cloud infrastructure, automated patching, and modern security tooling substantially reduce the breach risk that legacy systems carry.

April 16, 2026
