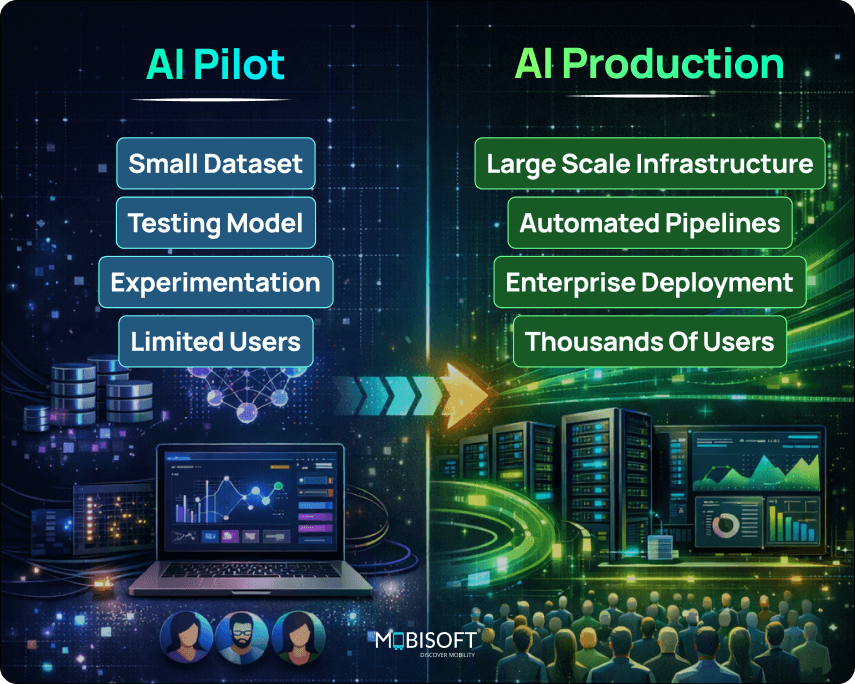

Enterprises face a peculiar dilemma in 2026. Widespread AI investment clashes with the reality that most AI initiatives stay confined to pilot projects. Real organizational impact remains elusive, and the cause lies in gaps in strategy and execution rather than in the technology.

Our focus here is distinct. We are not discussing how to build AI applications. Instead, we examine the more demanding human and operational challenge of scaling them. For enterprises succeeding with Claude, the model is only part of the story. Their real achievement lies in redesigning people, processes, and governance around it to enable reliable AI in production.

This is a breakdown of that seldom-discussed architecture. It explores what separates companies that scale from those that permanently stall. The difference is no longer technical. It is deeply organizational and central to long-term enterprise AI adoption.

Complement your AI rollout with our AI implementation strategy services for structured enterprise deployment.

Why Enterprise AI Pilots Stall

Conventional analysis often points to technical limitations. The real obstacles in 2026 are far more human, especially when organizations attempt enterprise AI deployment beyond experimentation.

ROI Blind Spots

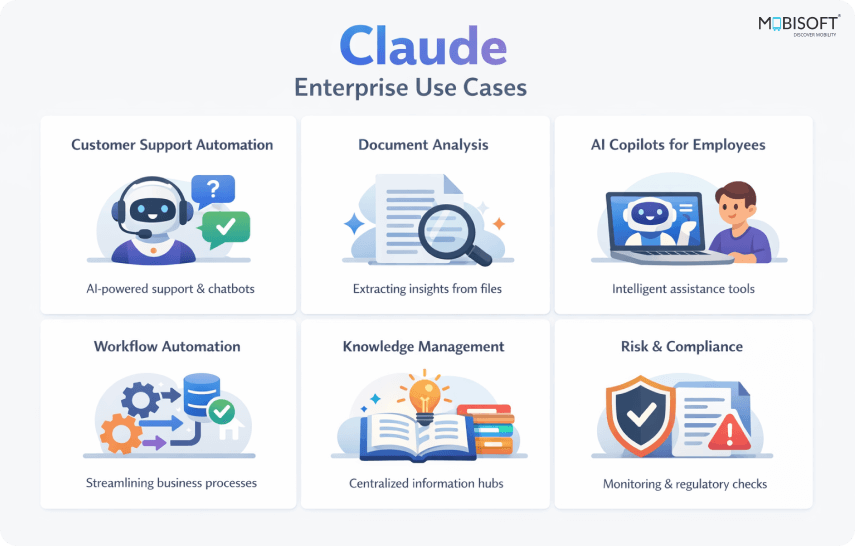

Companies frequently judge AI pilots using outdated metrics. They seek direct headcount reduction, a narrow lens that misses Claude's true value. This model excels at accelerating processes, elevating quality, and aiding complex decision-making. When these contributions are not measured, pilots appear to fail despite their clear benefits.

Data Readiness Crisis

A pilot can flourish in a controlled setting with curated data. It then confronts the chaotic reality of enterprise data environments. Most organizations still lack AI-ready data practices. When Claude encounters siloed, inconsistent, or poorly structured operational data, performance stumbles. The gap between clean test data and messy live data remains a fundamental barrier when deploying AI models to production.

The Deployment Illusion

Investment heavily favors technical development, with adoption treated as an afterthought. Leading practitioners now advise equal focus on both. Without deliberate design for how teams will use and trust Claude, even the most elegant solution gathers dust. This imbalance between creation and integration is a classic reason for stagnation in enterprise AI pilot projects.

Uncontrolled AI Usage

A striking number of employees use unsanctioned AI tools weekly. This fragmented activity creates security risks and undermines centralized scaling efforts. It reveals a clear demand that the official pilot has not yet met, allowing disjointed usage to proliferate.

The Governance Lag

While formal governance concerns have receded, the rise of autonomous, agentic workflows has introduced new urgency. Governing these dynamic systems requires a different approach than monitoring static tools. Claude’s Constitutional AI framework helps, but enterprises must still define their own oversight boundaries for these advanced applications.

Ultimately, scaling Claude is a cultural and organizational challenge. The technology is ready. Our business practices are catching up as companies attempt to scale AI in enterprise environments. Elevate your user experience with our AI-driven product experiences services.

How Scaling Enterprises Are Structuring Themselves

Successful enterprises no longer force AI into old frameworks. They redesign their operating model around it, with Claude often serving as the central intelligence layer within enterprise generative AI.

The Hub-and-Spoke Model (the dominant 2026 pattern)

- The dominant pattern is a hub-and-spoke design. A central AI hub sets strategy, maintains guardrails, and curates shared resources as part of a broader AI implementation strategy.

- Business unit spokes then own their specific initiatives and outcomes. This solves a critical problem: central teams cannot possibly meet every unit's unique demand.

- Claude thrives here as a unified, enterprise-wide model. Business units customize their application within governed parameters, ensuring consistency and reducing fragmented model management.

The Enterprise AI Studio

- This central hub often takes the form of an internal AI Studio. It is less a development shop and more a library of certified, reusable assets.

- Think of it as a curated repository for proven Claude prompts, workflow templates, and integration patterns.

- A marketing team can deploy a compliant copywriting agent in days, not months, by building atop this foundation. The Studio accelerates delivery while automatically enforcing quality and governance standards.

Human-Agent Coordinators

- AI Workforce Managers coordinate teams where Claude agents and humans collaborate. They assign tasks based on complexity, risk, and required judgment.

- Prompt & Workflow Engineers build and maintain the organization's library of conversational patterns and agentic sequences for Claude.

- Quality Stewards monitor output for alignment, bias, and compliance across all live deployments.

- AI Adoption Leads translate technical potential into business routine and manage the change curve for each department.

Winning organizations treat Claude AI adoption as a workforce redesign program. They are architecting a new way of operating, with Claude embedded in its core. Entry-level roles are being reconsidered as Claude handles routine cognitive work, allowing human talent to focus on higher-order analysis and creativity. The structure enables scale.

Explore our enterprise generative AI solutions to implement Claude across your business units.

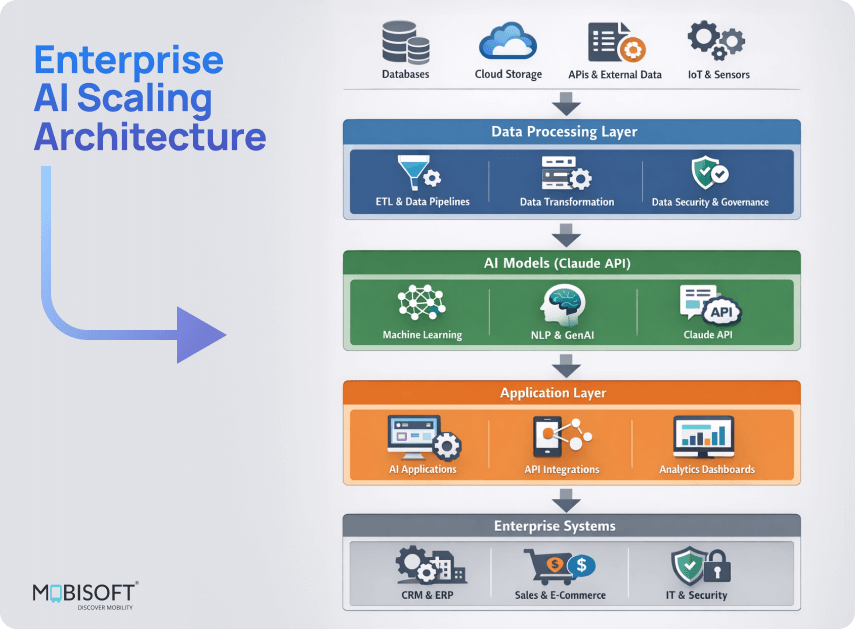

MCP as Enterprise AI Infrastructure

Anthropic invented and open-sourced the Model Context Protocol (MCP). In 2026, MCP is emerging as a universal enterprise standard for AI integration across systems and tools.

Claude supports MCP natively, so tools exposed through MCP servers reach the model through one consistent interface. In practice, this tends to make tool calls more reliable and precise than ad hoc, per-integration wiring.

The Compounding Effect

Before MCP, each new business unit demanded a new AI integration project. Now, a single, governed MCP server for a system like Salesforce becomes a reusable asset. Work compounds because the fifth deployment costs a fraction of the first, dramatically accelerating enterprise AI scaling.
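
The compounding claim can be illustrated with a rough cost model. This is only a sketch: the function name, the effort figures, and the assumption that every reuse costs a fixed fraction of the first build are hypothetical and not drawn from any real deployment.

```python
def cumulative_integration_cost(
    deployments: int, first_cost: float, reuse_fraction: float
) -> float:
    """Total effort for N deployments of one integration.

    The first deployment pays the full build cost. Each later deployment
    pays first_cost * reuse_fraction (1.0 means no reuse at all).
    """
    if deployments <= 0:
        return 0.0
    return first_cost + (deployments - 1) * first_cost * reuse_fraction


# Hypothetical effort units, chosen only to show the shape of the curve.
rebuilt_each_time = cumulative_integration_cost(5, 100.0, 1.0)
shared_connector = cumulative_integration_cost(5, 100.0, 0.25)
print(f"Five rebuilds: {rebuilt_each_time}, shared connector: {shared_connector}")
```

Under these assumed numbers, five business units sharing one governed connector spend well under half the effort of five separate builds. The real ratio depends on how much per-unit customization each deployment needs.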

The Hidden MCP Risk

This power creates a new vulnerability: credential sprawl and shadow MCP servers. Teams deploying ungoverned connectors can bypass security controls, creating significant risk. The tool designed for scale becomes a liability without proper AI governance.

Reframing MCP Strategy

Treating MCP as a casual tool builds a technical ceiling. But when treated as a centralized enterprise AI infrastructure, it becomes a powerful scaling multiplier. Organizations building this disciplined foundation now are engineering an unlimited runway for Claude AI adoption.

Discover Voice AI for enterprise workflows to enhance operational efficiency.

Workforce Readiness and Change Management

The toughest wall in scaling Claude AI in the enterprise is built by people, not technology. This human dimension is where most enterprises struggle silently.

AI Capability Deficit

- The primary obstacle to integration is a profound skills deficit, ranked first in nearly every 2026 industry survey.

- This gap is not about coding. It is about learning to partner with Claude as an agentic colleague.

- Necessary new skills include agent orchestration, workflow design, and ethical oversight of AI-generated outputs.

Training Without Adoption

- Standard training programs create awareness but rarely change daily work habits.

- Employees learn about AI tools in theory but return to legacy processes that have no place for Claude.

- Successful organizations redesign the work itself, embedding Claude AI workflows into core tasks and updating performance goals to require its use.

Adoption by Design

- Adoption design must occur alongside technical development, not after it.

- Involving employees in co-creating their own Claude workflows builds essential trust and ownership.

- Functional prototypes for user testing before rollout identify real friction points and build confidence.

- Incentive structures must visibly reward effective human and AI collaboration to motivate change.

- Clear communication about role evolution, not just job security, directly addresses team concerns.

Teams Powered by Claude

- The goal is a collaborative partnership, not a replacement. Claude manages high-volume cognitive tasks.

- This allows human teams to focus on strategic judgment, creative exploration, and complex relationship management.

- A new key performance indicator, human-AI collaboration effectiveness, measures the quality of this integrated partnership.

Learn how our enterprise AI solutions support seamless AI adoption across teams.

From AI Metrics to Business Value

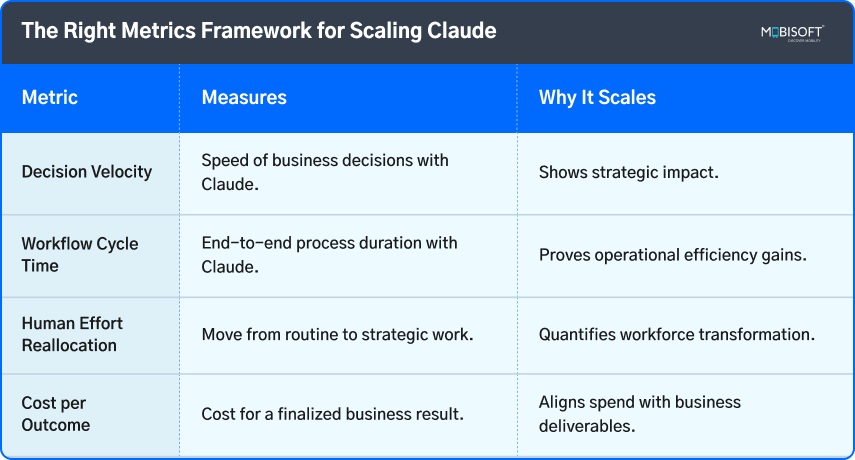

Many enterprises measure the wrong outcomes, which in itself prevents scaling enterprise AI initiatives. They track activity, not impact.

Activity vs Impact

Teams often report on AI-centric data like token usage or query volume. These metrics might illustrate workload, but they do not speak to business leaders. They fail to answer the fundamental question of value. When executives see reports filled with technical statistics but no clear connection to organizational goals, funding for Claude AI expansion stalls. This disconnect sustains pilot purgatory.

The Adaptation Dip

Informed organizations plan for a productivity J-curve. They communicate upfront that initial integration will cause a temporary dip as teams adapt and workflows are reconfigured. This expectation prevents the premature abandonment of enterprise AI scaling efforts during the natural adaptation phase. Following this period, performance accelerates, compounding in value as institutional knowledge and reusable Claude assets grow.

Compounding AI Returns

Claude’s value compounds as adoption deepens. Early deployments build institutional assets such as tested prompts, workflow templates, and integration patterns. Each subsequent use case deploys faster and at lower cost by drawing on this shared foundation. The scaling journey itself, therefore, becomes progressively more efficient.

Accelerate your enterprise systems with our custom software development services.

Turning Claude into Core Infrastructure

The window for tentative experimentation has closed. We now observe the emergence of a partnership in which human and artificial cognition are fused into a single operational system. Claude AI, with its native MCP support and constitutional framework, offers a strong starting point for compliance and integration, forming the backbone of enterprise AI deployment.

Implement its inherent structure to standardize tool calls and audit trails, thereby turning governance and integration from development tasks into configuration tasks. The model's output is secondary to its function as a universal reasoning interface between your data and your operations, enabling AI in production at scale.

When you scale Claude, you are installing a nervous system. The imperative is to build on this substrate. Prioritize workflows where Claude's protocol-level advantages compound. This is how you convert a powerful model into permanent infrastructure, supporting enterprise generative AI and LLM deployment. Explore best practices in enterprise AI chatbot architecture for scaling AI interactions.

Key Takeaways

- Successful scaling in 2026 depends on your operating model, not your model choice.

- Claude's native MCP support turns custom integration work into reusable, governed AI infrastructure.

- Treat AI governance as a necessary enabling layer, not a restrictive policy checklist.

- Measure business outcomes like decision velocity, not AI activity like token count.

- Redesign job roles and incentives to require collaboration with Claude AI agents.

- Build a central AI Studio that curates certified components for business unit spokes, enabling enterprise AI adoption.

- Plan for a performance J-curve, communicating the adaptation dip before acceleration.

- Human–AI collaboration effectiveness is the new critical metric for augmented teams and supports scaling AI in the enterprise.

Frequently Asked Questions

What does a governed MCP infrastructure actually look like in practice?

It is a centralized registry of approved, secure connectors to systems like Salesforce or SAP. IT manages authentication and access controls here, preventing shadow MCP servers. This turns every integration into a reusable, compliant component, facilitating the deployment of AI models to production reliably.
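
As a rough illustration, the registry idea can be sketched as a small data structure. Everything here is hypothetical: the class names, fields, scopes, and URLs are invented for the example, and nothing below is part of MCP itself or of any Anthropic API.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass(frozen=True)
class ConnectorRecord:
    """One approved connector entry (all fields are illustrative)."""
    name: str
    server_url: str
    owner_team: str
    allowed_scopes: frozenset[str]


@dataclass
class ConnectorRegistry:
    """Central list of approved connectors, keyed by server URL."""
    records: dict[str, ConnectorRecord] = field(default_factory=dict)

    def approve(self, record: ConnectorRecord) -> None:
        self.records[record.server_url] = record

    def is_approved(self, server_url: str, scope: str) -> bool:
        # A call is allowed only if the server is registered
        # and the requested scope was granted to it.
        record = self.records.get(server_url)
        return record is not None and scope in record.allowed_scopes

    def find_shadow_servers(self, observed_urls: list[str]) -> list[str]:
        # Any server seen in traffic but missing from the registry.
        return sorted(u for u in set(observed_urls) if u not in self.records)


registry = ConnectorRegistry()
registry.approve(
    ConnectorRecord(
        name="salesforce",
        server_url="https://mcp.internal.example/salesforce",  # hypothetical
        owner_team="it-platform",
        allowed_scopes=frozenset({"read:accounts"}),
    )
)
print(registry.find_shadow_servers([
    "https://mcp.internal.example/salesforce",
    "https://laptop-42.example/custom-mcp",  # hypothetical unregistered server
]))
```

A real implementation would also need authentication, credential rotation, and audit logging. The sketch only shows the core check: a connector is either in the registry with an explicit scope, or it is treated as a shadow server.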

How do we measure human-AI collaboration effectiveness concretely?

Track metrics like the reduction of human review cycles for Claude's output, the rate of workflow escalations from agent to human, and employee feedback on decision-support quality. Together, these metrics gauge the partnership’s fluency in enterprise AI pilot projects, not just output volume.
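
Two of the metrics named above reduce to simple ratios. The sketch below assumes a hypothetical `PeriodStats` record collected per reporting period. The field names and sample numbers are illustrative, and employee feedback is left out because it is usually gathered through surveys rather than computed.

```python
from dataclasses import dataclass


@dataclass
class PeriodStats:
    """Counts for one reporting period (hypothetical schema)."""
    tasks_completed: int
    human_review_cycles: int
    escalations_to_human: int


def review_cycles_per_task(stats: PeriodStats) -> float:
    if stats.tasks_completed == 0:
        return 0.0
    return stats.human_review_cycles / stats.tasks_completed


def escalation_rate(stats: PeriodStats) -> float:
    if stats.tasks_completed == 0:
        return 0.0
    return stats.escalations_to_human / stats.tasks_completed


def review_cycle_reduction(baseline: PeriodStats, current: PeriodStats) -> float:
    """Fractional drop in review cycles per task versus the baseline period."""
    before = review_cycles_per_task(baseline)
    if before == 0:
        return 0.0
    return (before - review_cycles_per_task(current)) / before


# Made-up sample data for two periods.
baseline = PeriodStats(tasks_completed=200, human_review_cycles=400, escalations_to_human=50)
current = PeriodStats(tasks_completed=200, human_review_cycles=300, escalations_to_human=30)
print(f"Review cycle reduction: {review_cycle_reduction(baseline, current):.0%}")
print(f"Escalation rate: {escalation_rate(current):.0%}")
```

Tracking these per workflow rather than company-wide makes it easier to see which deployments are maturing and which still depend on heavy human correction.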

Doesn't the hub-and-spoke model create a new central bottleneck?

The hub must act as an enablement platform, not a gatekeeper. Its success is measured by how quickly it can equip spokes with certified components, templates, and guidelines, accelerating their independent delivery within guardrails, ensuring smooth scaling of AI in the enterprise.

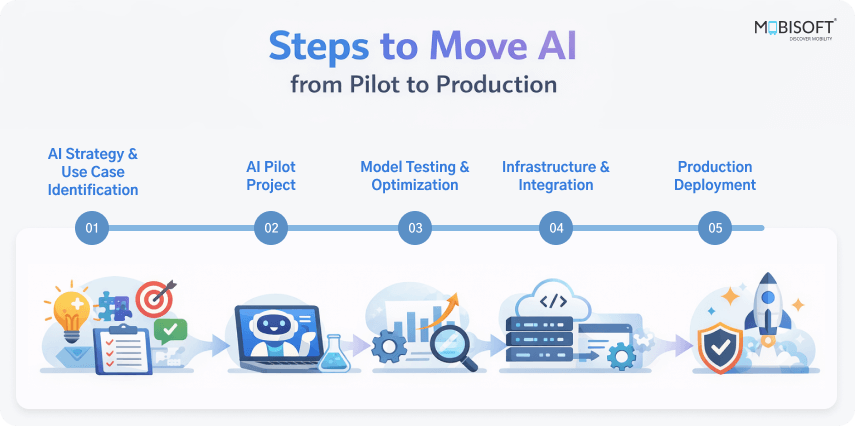

How do we retrofit Claude into core legacy workflows without a full rebuild?

Identify a single, high-frequency decision point within the legacy process. Use Claude's MCP connection to augment just that step, such as data retrieval or draft generation. This creates immediate value and demonstrates the pattern for wider redesign, showing how to move AI from pilot to production.

What is the most common mistake in defining human oversight boundaries?

Making the boundary too broad. Requiring human review for low-risk, high-volume tasks guarantees that scaling will stall. Focus mandatory review on novel, high-stakes, or irreversible outputs, and allow autonomy in routine, structured domains. Getting this boundary right is a critical part of enterprise AI adoption.
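
One way to make such a boundary explicit is a small routing rule. The sketch below is a minimal illustration, assuming each agent output has already been labeled with three flags. The `AgentOutput` type, its fields, and the route names are hypothetical, and deciding how those flags get set is the harder organizational question.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentOutput:
    """An agent result plus risk flags (hypothetical schema)."""
    task_type: str
    is_novel: bool          # no established precedent for this kind of task
    is_high_stakes: bool    # material financial, legal, or safety impact
    is_irreversible: bool   # cannot be undone once acted upon


def requires_human_review(output: AgentOutput) -> bool:
    # Mandatory review only when at least one risk flag is set.
    return output.is_novel or output.is_high_stakes or output.is_irreversible


def route(output: AgentOutput) -> str:
    return "human_review" if requires_human_review(output) else "auto_approve"


routine = AgentOutput("invoice_categorization", False, False, False)
risky = AgentOutput("contract_termination_notice", False, True, True)
print(route(routine), route(risky))
```

Keeping the rule this narrow is the point: routine, structured work flows through without review, and reviewers see only the outputs where their judgment matters.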

How do we prevent the AI Studio from becoming just another deprecated internal portal?

Its value is curation, not just collection. It must actively retire outdated components, highlight proven workflows with clear ROI case studies, and facilitate communities of practice among developers in the spokes, forming a foundation for generative AI in production.

March 10, 2026
