The year 2026 has arrived with a clear, pressing directive for business leaders. The endless cycle of AI experimentation is over. Now, the only thing that matters is deployment into the daily rhythm of your enterprise within a mature Enterprise AI architecture. We are moving decisively from a world of intriguing pilots into the rigorous domain of AI operations.

Here, Claude finds its unique role within modern Claude AI architecture. It offers a foundation of enterprise trust, built through Constitutional AI and robust security. Its extended context capabilities handle real-world work, such as entire codebases or lengthy documents. Its design supports the agentic workflows that now define ambition in advanced Generative AI system architecture.

This article provides concrete, tested strategies. We will detail a complete framework for moving Claude applications from development into production, covering architecture, security, observability, and cost as part of a structured Claude AI production deployment strategy. The path forward is built not on hype, but on reliability. Let's begin.

For organizations looking to accelerate production adoption, explore our Artificial Intelligence Services to operationalize enterprise-grade AI systems with confidence.

AI in 2026: Production Is the Only Benchmark

Today, production readiness is a much broader demand. It's no longer just speed and availability. You now need reliable autonomous decisions, solid defenses against prompt injection, predictable costs, and comprehensive audit trails. Your systems must also degrade gracefully when challenged.

New Enterprise Requirements

The requirements have escalated. You are building agentic systems that manage workflows independently. This involves multi-agent collaboration for complex tasks. Handling vast contexts, like entire codebases, is routine in modern Large language model architecture environments. Observability must now evaluate decision quality, not just technical performance.

Why Prototypes Fail at Scale

Common development habits will undermine you. Hardcoded prompts fail against production's variability. Inadequate error handling causes silent failures, eroding trust. Unmonitored token usage leads to cost overruns. Security gaps invite prompt injection, and a lack of fallbacks creates single points of failure.

Building an AI Factory

Operationalizing Claude requires repeatable production discipline aligned with LLM production architecture best practices. Standardize prompt versioning, model routing rules, and effort policies across teams. Automate regression testing against refusal rates, token usage, and reasoning depth before release. Treat Haiku, Sonnet, and Opus as managed production assets within your broader Claude LLM architecture, with controlled rollouts and measurable performance baselines.

The Pressure and the Prize

Market tolerance has vanished. Scrutiny on AI ROI is intense, and compliance is mandatory. This harsh climate is your advantage. Organizations that build robust systems now will capture market share. Success depends not on the model alone, but on the production-grade system you wrap around it.

For enterprises defining long-term AI roadmaps and governance models, our AI Strategy Consulting services help align architecture, security, and business outcomes.

Designing Scalable Claude AI Architectures

Claude offers a model family, extended context capacity, and controllable reasoning depth. It introduces structural decisions that directly influence latency, traceability, and cost predictability at scale within modern Production AI systems architecture.

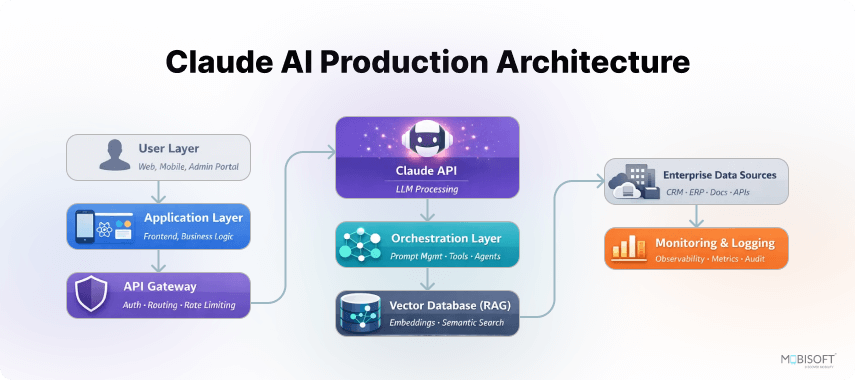

Layered Architecture Pattern

Structure your system in three clear layers. The Application Layer enforces business rules and validation. The Orchestration Layer routes requests across Haiku, Sonnet, and Opus using task-aware logic. The AI Layer manages Claude-specific controls such as version pinning, effort settings, and token accounting. This separation limits blast radius during upgrades and enables controlled experimentation.

Agentic Architecture Considerations

Claude’s large context window supports multi-agent workflows, but coordination must be explicit. Decide whether agents share a unified memory space or exchange structured summaries between steps. Assign effort levels deliberately across agents. Reserve deeper reasoning for critical decisions while keeping routine actions computationally light and cost-aware.

Prompt Engineering for Production

Claude responds predictably when prompts are structured and versioned. Store system instructions as configuration, not inline code. Inject dynamic context with clear boundaries between trusted instructions and user data. Track prompt variants against measurable outcomes. Claude’s consistency improves when templates remain disciplined and iteratively refined.

Error Handling and Resilience

Design for rate limits, refusal behavior, and reasoning escalation. Implement retries with exponential backoff and fallback routing between model tiers when latency spikes. If extended reasoning increases response time beyond thresholds, downgrade effort or switch models. Controlled degradation preserves reliability without fully interrupting user workflows and aligns with best practices for how to deploy Claude AI in production.

Data Flow Architecture

Capture token categories, including input, output, cached, and thinking tokens, at every interaction. Maintain source attribution for retrieved documents used within Claude’s context window. Durable logging enables audit readiness and hallucination tracing. Context compaction strategies should summarize stale dialogue while preserving decision-critical signals.

Integration Patterns

Choose integration paths intentionally. Direct Anthropic API access offers granular control over reasoning and version governance. AWS Bedrock provides centralized infrastructure management and enterprise controls suitable for Claude AI for enterprise applications. When enabling Claude’s computer use capability, enforce sandboxed execution and strict tool validation to contain operational risk.

If you're building scalable AI foundations beyond a single model, our generative AI services help enterprises design and deploy production-ready AI ecosystems.

Enterprise Security for Agent-Driven AI Systems

Emerging Risks in Agentic AI:

- Prompt injection is a clear and present danger within modern Claude AI security architecture. It requires built-in architectural defenses now.

- Agentic workflows create new risks, like tool misuse or multi-step exploits that target process logic in evolving Production AI systems architecture environments.

- Regulations are tightening fast. Compliance must cover real-time AI safety and bias monitoring, not just initial checks, reinforcing strong AI compliance and data security architecture.

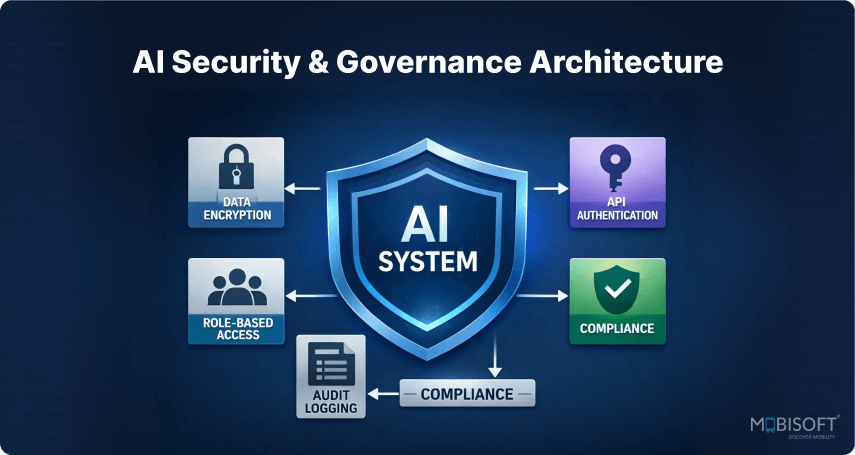

Multi-Layered Defense Strategy:

Input Layer Security:

- Scrub and validate all user inputs before they touch your system prompts as part of Secure AI architecture for enterprises.

- Verify user intent and enforce strict rate limits to block automated abuse.

- Maintain ironclad isolation between your trusted instructions and untrusted user data.

Processing Layer Security:

- Operate agents on a zero-trust principle within a governed Enterprise AI architecture. Give them the minimum access needed.

- Sandbox all tool execution, like code interpretation, in isolated environments.

- Validate every tool call before it runs, and watch for unusual agent behavior.

Output Layer Security:

- Output filtering to prevent sensitive data leakage.

- Content safety checks before returning responses to users.

- Bias detection monitoring across different user groups.

- Hallucination detection and confidence scoring.

Data Protection Measures:

- Encrypt everything, and use tokenization for sensitive data before it reaches an API in a secure Claude AI production deployment.

- Control access with role-based permissions, and secure your RAG knowledge sources.

- Use Claude's compliance tools for audit trails and govern deployments with central admin controls aligned with AI governance in production systems.

Enterprise Compliance Features (Claude-specific):

- Compliance API for real-time usage data and conversation logs through structured Claude API integration.

- Comprehensive audit trails for HIPAA, SOC 2, and GDPR compliance.

- Model versioning and deployment tracking within your Claude LLM architecture.

- Admin controls for organizational governance.

Emerging Best Practices:

- Consider third-party security layers for specialized threat detection within your Claude AI infrastructure.

- Regularly red-team your own AI agents to find weaknesses.

- Have a specific incident response plan for AI system failures.

To understand how enterprises structure context and memory at scale, read Context Engineering for LLMs: How Enterprises Build Reliable AI Agents at Scale.

Monitoring Decision Quality in AI Systems

Claude deployments in 2026 demand observability that reflects how the model reasons, not just how fast it responds. Decision quality must be measured against reasoning depth, refusal patterns, and context usage. Therefore, monitoring becomes a governance layer around Claude’s behavior in live enterprise workflows aligned with the best practices for LLM monitoring and observability.

Performance Observability:

- Track latency separately for inference, tool calls, and retrieval steps within Claude-powered flows built on a scalable AI inference pipeline architecture.

- Monitor effort level distribution across requests to understand when extended reasoning is triggered.

- Measure context window utilization to prevent silent token bloat affecting response time.

Reasoning & Consistency Signals:

- Analyze thinking token usage to detect over-escalation of reasoning on low-risk tasks.

- Monitor refusal rates and safety-triggered responses to identify policy friction.

- Evaluate output consistency across Haiku, Sonnet, and Opus for similar prompts within your broader Claude AI system design architecture.

Quality Observability:

- Score outputs against business KPIs, not surface fluency.

- Track hallucination incidence using source attribution logs from RAG workflows.

- Measure user acceptance and correction rates to identify drift over time.

Cost & Token Intelligence:

- Break down input, output, cached, and thinking tokens per feature or agent to support AI cost optimization architecture decisions.

- Correlate effort settings with cost spikes to refine routing policies.

- Set real-time budget alerts tied to specific Claude model tiers.

Active Runtime Controls:

- Use observability data to auto-adjust effort levels when latency exceeds thresholds, reinforcing resilient Production-ready AI system design.

- Roll back prompt versions when refusal or inconsistency rates rise.

- Trigger model routing changes dynamically if Opus usage exceeds defined cost envelopes as part of a structured Enterprise LLM deployment strategy.

For deeper insights into structured validation and performance benchmarking, explore LLM Evaluation for AI Agent Development.

Controlling Claude AI Costs at Scale

Model Routing Strategy

A thoughtful model routing strategy is your first lever for cost control within the scalable Claude AI architecture. Not every query requires the full capability of Claude Opus. Implement intelligent query classification to route simple, repetitive tasks to Claude Haiku. Reserve Sonnet for balanced needs and Opus for truly complex reasoning. This dynamic selection, based on context complexity and urgency, can reduce your model costs substantially without users noticing a difference in quality.

Token Efficiency Techniques

Direct token management is where daily savings are found. Proactively leverage features such as context compaction to keep token counts manageable in long conversations. Implement intelligent caching for frequent system prompts and common knowledge snippets. Deduplicate similar requests to avoid reprocessing identical content. These techniques shrink your context window footprint, directly lowering expenses and improving overall AI performance optimization in production.

Adaptive Thinking Controls

You can guide Claude's reasoning depth to align with cost. The effort parameter, which includes low, medium, high, and max, is a direct cost dial within a governed Claude AI enterprise implementation. For straightforward fact retrieval, lower effort may suffice. Reserve extended reasoning for high-stakes analysis or creative tasks. Classifying tasks upfront allows you to automatically set the appropriate effort level, balancing quality with fiscal responsibility.

Enterprise Budget Controls

Finally, establish firm governance. Implement per-team or per-project quotas to democratize access while preventing budget overruns. Use real-time cost alerts and automated circuit breakers that pause services if thresholds are breached. For enterprise pricing, engage with cloud platform partners like AWS Bedrock to explore reserved capacity options versus on-demand use, aligning commitment with your actual usage patterns for optimal value within a sustainable AI infrastructure for large enterprises.

What Production-Ready AI Looks Like in 2026

Your mandate for 2026 is moving your AI applications into reliable, daily production within a structured Production-ready AI system design. Remember, building trustworthy AI is not really about accessing the most advanced model. It is about constructing the resilient system that surrounds it within a mature Enterprise AI architecture.

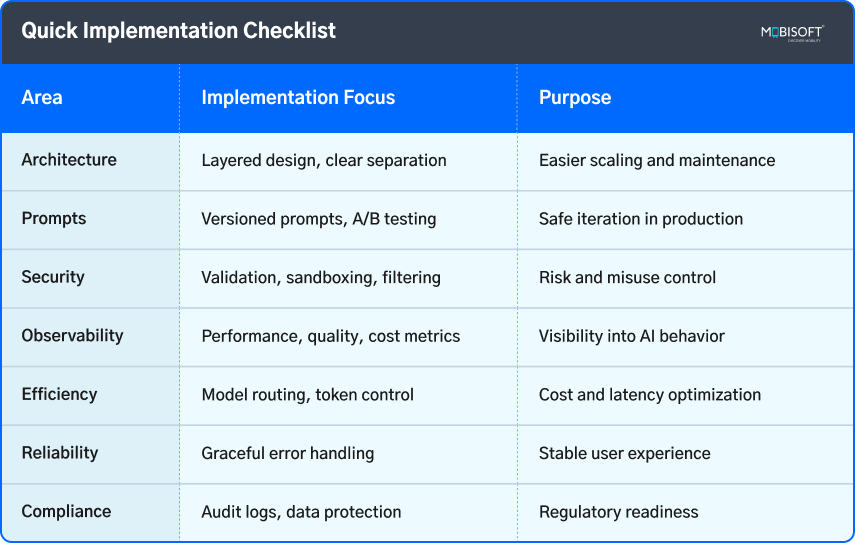

Your checklist is clear. Implement a layered architecture and version your prompts. Build multi-layered security with sandboxed tools. Establish observability that tracks decision quality, not just uptime. Route models intelligently and control token costs. Design error handling that degrades gracefully. Ensure every output is auditable.

Begin with a single, well-scoped use case. Apply these production disciplines from the very first day as part of a structured Claude AI production deployment. Build for reliability first, and capability second. Measure actual business outcomes, not just technical features. Iterate using insights from real production data.

The enterprises that will lead are not necessarily building the most sophisticated agents. They are building the most reliable systems. Your foundation starts now. If you're evaluating voice-driven AI automation within enterprise operations, read Voice AI for Enterprise Workflows: A Strategic 2026 Guide to extend your AI strategy further.

Key Takeaways

- In 2026, success depends on moving from AI pilots to robust, production-ready operations.

- Think of your AI as a system; its reliability is defined by the architecture you build around the model.

- Implement a layered architecture to isolate business logic, workflow orchestration, and AI interactions.

- Security must be multi-layered, defending against prompt injection and governing agent tool use.

- Modern observability must measure decision quality and agent behavior, not just traditional performance metrics.

- Control costs through intelligent model routing, token optimization, and strict budget governance.

- Design every workflow with graceful degradation and clear fallback mechanisms to maintain user trust.

- Your production infrastructure, not just your prompts, is what ultimately delivers enterprise value and compliance.

Frequently Asked Questions

What does graceful degradation actually look like in practice for an AI agent?

It means the system maintains core functionality. If a sub-agent fails, the workflow reroutes. When confidence is low, the agent flags its uncertainty or escalates to a human. The key is that the entire process doesn't just stop with an error.

How do we measure the ROI of a production AI system beyond cost savings?

Look at metrics it influences directly: reduction in process cycle time, improvement in decision accuracy, or increased user task completion. The goal is to tie agent performance to a key business outcome, like faster contract review or higher customer satisfaction scores.

Our team knows DevOps, not MLOps. Is this a major skills gap?

The principles are very aligned. Focus on the new requirements: prompt versioning, LLM-specific error handling, and cost telemetry. Your existing skills in infrastructure, monitoring, and CI/CD are the crucial foundation. You'll adapt to the new paradigms quickly.

Can we start with a cloud platform like Bedrock and switch to direct API later?

Yes, but design for portability. Abstract your AI client calls behind an internal service layer. This ensures your business logic and prompt templates aren't locked to a vendor's SDK, making future migration a configuration change rather than a rewrite.

How often should we retest or update our security prompts and guardrails?

Treat it like any security posture. Schedule quarterly reviews at a minimum. Also, update them for every major new feature or workflow you enable. Any security incident in your industry or a new Claude model release should also trigger an immediate review.

February 25, 2026

February 25, 2026