Research shows most mobile users churn quickly, with retention often dropping to single digits by 30 days and only a small minority remaining active after three months. That contrast is the paradox.

The issue is trust, predictability, and perceived control. AI can draft, analyze, reason across long documents, even manage multi-turn exchanges, but users hesitate when outputs feel inconsistent or opaque. Power alone does not secure success in AI-powered applications.

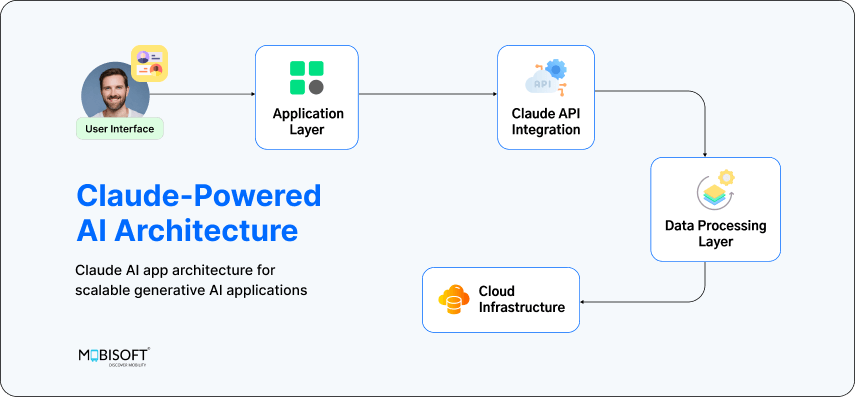

Building a Claude-powered product, therefore, demands a different discipline within generative AI app development. You are designing adoption patterns around uncertainty, expectation, and doubt. The apps that endure close the trust gap and break through the retention ceiling. From validating real user problems to engineering production reliability, the journey from prototype to scale in AI app development is more human than technical.

If you are evaluating implementation partners, explore our generative AI services to understand how production-ready AI systems are architected for real-world scale.

Reverse Engineering Success: Starting with User Jobs

Too many Claude apps begin with possibility instead of necessity. The result is impressive capability with shallow demand. Sustainable products start elsewhere, with a clear, painful job that users are already trying to get done.

Identify Real User Friction

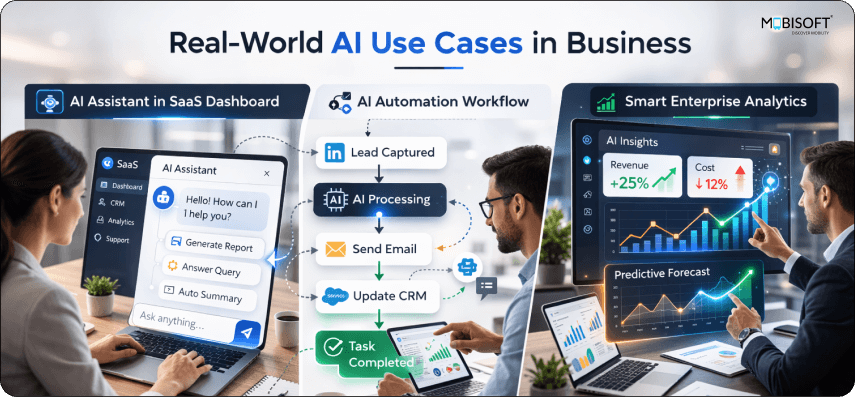

Instead of asking what Claude can accomplish, ask where users consistently struggle in their existing workflow and why current tools fail them. Some opportunities are AI native, such as synthesizing massive documents or maintaining multi-turn reasoning over extended projects, tasks that were previously unrealistic at scale in LLM application development. Others are AI augmented, where Claude removes a specific bottleneck inside a familiar process without replacing the whole system. That distinction is practical because it determines whether you are creating new value or merely accelerating an old routine.

Validate Demand Before Code

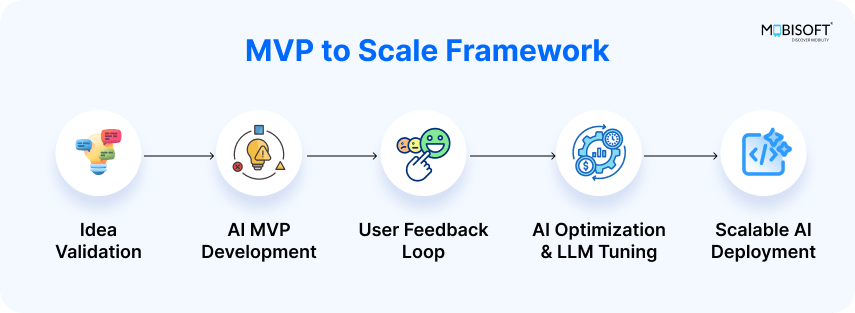

Before committing to production builds, simulate outcomes manually as part of disciplined MVP development. In Wizard of Oz testing, humans produce the output behind the scenes, which shows whether users value the result itself before any automation exists. Watch real workflows and notice where people pause or make quick fixes. These moments often reveal deeper problems than surveys can uncover. Accuracy alone isn't enough when planning enterprise AI product development.

Clearly Defined AI Roles

Define the contract clearly: what is the user hiring Claude to accomplish, which alternatives are they replacing, and what new anxieties arise from delegating this task to AI? Build the smallest version that proves genuine demand for Claude in this role. This sits at the core of how to build AI apps from MVP to scale.

For teams formalizing roadmap alignment and governance, our AI strategy and consulting approach helps translate experimentation into structured execution.

Building Trust Architecture: The Hidden System That Determines Adoption

A capable Claude app can still fail if users hesitate to rely on it. Features attract attention, but trust sustains usage. What determines adoption is the invisible system that manages expectations, control, and recovery when things go wrong.

Transparency Layer

Users want to understand how conclusions come about for important tasks. Give them reasoning summaries, confidence markers, and sources when it makes sense. Start with a short answer, then let users dig deeper if they want to. This cuts down on information overload but still lets people check things. Being upfront about what's uncertain, in honest terms, builds trust over time.
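One way to picture progressive disclosure is a response object that always shows the short answer and a confidence marker, and reveals reasoning and sources only on request. This is a minimal sketch: the `ExplainedAnswer` name, its fields, and the confidence labels are assumptions for illustration, not part of any Claude API.

```python
from dataclasses import dataclass, field


@dataclass
class ExplainedAnswer:
    """Hypothetical container for an answer plus the evidence behind it."""
    short_answer: str
    confidence: str  # assumed labels: "high", "medium", "low"
    reasoning_summary: str = ""
    sources: list[str] = field(default_factory=list)

    def render(self, expanded: bool = False) -> str:
        """Show the short answer first; add detail only when the user asks."""
        lines = [self.short_answer, f"Confidence: {self.confidence}"]
        if expanded:
            if self.reasoning_summary:
                lines.append(f"Why: {self.reasoning_summary}")
            lines.extend(f"Source: {source}" for source in self.sources)
        return "\n".join(lines)
```

Calling `render()` gives the compact view, and `render(expanded=True)` adds the reasoning summary and sources for users who want to verify.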

Control Layer

Intervention points build psychological safety. Let users edit suggestions before applying them, approve or reject outputs, and adjust parameters when context changes. Include reliable undo mechanisms for AI-driven actions. When people feel they can step in at any moment, they engage more willingly and experiment more confidently.
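The approve, reject, and undo points described above can be sketched with a simple history stack. The `Document` class and its method names are hypothetical; a real product would likely persist snapshots rather than hold them in memory.

```python
from dataclasses import dataclass, field


@dataclass
class Document:
    """Holds user text plus a history stack so AI-driven edits can be undone."""
    text: str
    _history: list[str] = field(default_factory=list)

    def apply_suggestion(self, suggestion: str, approved: bool) -> bool:
        """Apply an AI suggestion only if the user explicitly approved it."""
        if not approved:
            return False
        self._history.append(self.text)  # snapshot the state before changing it
        self.text = suggestion
        return True

    def undo(self) -> bool:
        """Restore the most recent snapshot; return False if there is none."""
        if not self._history:
            return False
        self.text = self._history.pop()
        return True
```

Because nothing changes without approval and every applied change is reversible, users can experiment with suggestions at low risk.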

Consistency Layer

Claude's outputs can vary across sessions. Set expectations explicitly about when variation is expected and when stability should occur. Standardize formatting for recurring tasks, document acceptable output ranges, and communicate limits clearly. Predictability reduces support friction and reinforces confidence in long-term generative AI app development.
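Documenting an acceptable output range can be as simple as a check that runs before a recurring report reaches the user. The sketch below assumes the range is defined by required section headings and a word-count window; `check_output` and its parameters are illustrative names.

```python
def check_output(text: str, required_headings: list[str],
                 min_words: int, max_words: int) -> list[str]:
    """Return a list of problems; an empty list means the output is in range."""
    problems = []
    for heading in required_headings:
        if heading not in text:
            problems.append(f"missing section: {heading}")
    word_count = len(text.split())
    if not (min_words <= word_count <= max_words):
        problems.append(f"word count {word_count} outside {min_words}-{max_words}")
    return problems
```

An output that fails the check could be regenerated or flagged, so users see the same structure every time even when the wording varies.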

Fallback Layer

Failures will happen. Plan graceful degradation paths such as human review queues, alternative prompts, or clear “I don’t know” responses. Recovery design matters because a well-handled limitation often strengthens long-term trust when you build production-ready AI applications.
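A degradation path like this can be sketched as an ordered list of attempts that ends in a human review queue. Everything here is an assumption for illustration: each attempt is any callable you supply (for example, a primary prompt and then an alternative prompt), and the queue is a plain list standing in for a real ticketing system.

```python
from typing import Callable, Optional


def answer_with_fallback(question: str,
                         attempts: list[Callable[[str], Optional[str]]],
                         review_queue: list[str]) -> str:
    """Try each strategy in order; escalate to humans if none produces an answer."""
    for attempt in attempts:
        try:
            result = attempt(question)
        except Exception:
            # Broad catch is deliberate in this sketch: any failure moves on
            # to the next strategy. Production code should log the error.
            continue
        if result:
            return result
    review_queue.append(question)  # hand off instead of guessing
    return "I don't know yet. This question has been sent for human review."
```

The design choice is that the user always gets a clear outcome: either an answer or an honest statement that the question was escalated.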

Understanding AI intelligence architecture and ownership considerations. clarifies how accountability and system design influence enterprise adoption.

Workflow Degradation at Scale

A prompt is static, but business processes are dynamic and expansive. As workflows grow in complexity, the initial instructions are diluted. They must compete with user requests, historical data, and new outputs. The clarity of the original design dissipates, leading to unpredictable behavior and reduced AI agent reliability.

Production Architecture: From Prototype Speed to Real-User Scale

A Claude prototype impresses quickly, especially during early MVP development. Production reality is less forgiving. Once thousands of users interact daily, variability, cost, and operational limits surface in ways early demos rarely reveal in real-world generative AI app development.

Core Production Challenges

- Manage latency carefully, since Claude responses vary between roughly 1.2 and 3.4 seconds, and user patience drops sharply beyond a few seconds of visible delay.

- Control token growth, because long prompts and complex workflows can escalate costs unpredictably as usage scales across real customers.

- Reduce output variability, as identical inputs producing different answers create confusion, increase support load, and erode perceived reliability in AI-powered applications.

- Design around rate limits to ensure API throttling does not interrupt workflows or cause unexplained failures during peak usage.
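For the rate-limit challenge in particular, a common approach is to retry throttled calls with exponential backoff and jitter. The sketch below is generic: `RateLimitedError` is a hypothetical exception your own API wrapper would raise on throttling, and the retry counts and delays are placeholder values.

```python
import random
import time


class RateLimitedError(Exception):
    """Hypothetical error raised by your API wrapper when a call is throttled."""


def call_with_backoff(call, max_retries=4, base_delay=0.5, sleep=time.sleep):
    """Run call(); on throttling, wait base_delay * 2**attempt plus jitter, then retry."""
    for attempt in range(max_retries + 1):
        try:
            return call()
        except RateLimitedError:
            if attempt == max_retries:
                raise  # give up so the caller can show a clear error
            delay = base_delay * (2 ** attempt)
            # Jitter spreads retries out so clients do not retry in lockstep.
            sleep(delay + random.uniform(0, delay / 2))
```

The `sleep` parameter is injectable so the behavior can be tested without real waiting. Surfacing the final failure, rather than swallowing it, avoids the unexplained errors mentioned above.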

Proven Architecture Patterns

- Implement intelligent caching for common queries, balancing freshness with cost efficiency through selective invalidation rules within a scalable AI architecture.

- Configure model routing and fallback chains that automatically send complex reasoning to Opus, balanced tasks to Sonnet, and speed-sensitive flows to Haiku.

- Use asynchronous processing for long-running tasks, providing progress indicators so users remain informed and engaged.

- Optimize prompts systematically, refining templates to reduce token consumption without sacrificing output quality.
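Two of these patterns, caching and model routing, combine naturally. The following is a minimal sketch under stated assumptions: the tier labels are placeholders rather than real model IDs, `generate` is whatever function you use to call the model, and time-based expiry stands in for richer invalidation rules.

```python
import hashlib
import time

# Tier labels are placeholders; substitute the model IDs your account exposes.
ROUTES = {"complex": "opus", "balanced": "sonnet", "fast": "haiku"}


def pick_model(task_kind: str) -> str:
    """Route by task kind, defaulting to the middle tier for unknown kinds."""
    return ROUTES.get(task_kind, "sonnet")


class TTLCache:
    """In-memory cache whose entries expire after ttl_seconds."""

    def __init__(self, ttl_seconds, clock=time.monotonic):
        self.ttl = ttl_seconds
        self.clock = clock
        self._store = {}

    @staticmethod
    def _key(model, prompt):
        return hashlib.sha256(f"{model}\n{prompt}".encode("utf-8")).hexdigest()

    def get(self, model, prompt):
        key = self._key(model, prompt)
        entry = self._store.get(key)
        if entry is None:
            return None
        stored_at, value = entry
        if self.clock() - stored_at > self.ttl:
            del self._store[key]  # stale entry: drop it and report a miss
            return None
        return value

    def put(self, model, prompt, value):
        self._store[self._key(model, prompt)] = (self.clock(), value)


def cached_completion(cache, task_kind, prompt, generate):
    """Serve repeated prompts from cache; call generate(model, prompt) on a miss."""
    model = pick_model(task_kind)
    hit = cache.get(model, prompt)
    if hit is not None:
        return hit
    value = generate(model, prompt)
    cache.put(model, prompt, value)
    return value
```

Keying the cache on both model and prompt prevents a cheaper tier's answer from being served for a request routed to a stronger one.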

Monitoring and Testing

- Track token usage by feature, response time percentiles, hallucination patterns, and user satisfaction correlations continuously.

- Replace rigid unit tests with behavioral validation, output range checks, and defined acceptance thresholds suited to non-deterministic systems common in LLM application development.
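Behavioral validation and percentile tracking can both be sketched in a few lines. Here `generate` is any zero-argument function that returns one model output, the checks are predicates you define, and the percentile uses the simple nearest-rank method; all names are illustrative.

```python
import math


def pass_rate(generate, checks, runs=20):
    """Run the generator several times; return the share of outputs passing all checks."""
    passed = 0
    for _ in range(runs):
        output = generate()
        if all(check(output) for check in checks):
            passed += 1
    return passed / runs


def percentile(values, pct):
    """Nearest-rank percentile, e.g. pct=95 for p95 response time."""
    if not values:
        raise ValueError("percentile of empty data")
    ordered = sorted(values)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]
```

Instead of asserting one exact string, a test suite would assert something like `pass_rate(...) >= 0.9`, with the threshold chosen per feature.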

For structured evaluation frameworks, review our guide on LLM evaluation for enterprise-ready AI development.

The Retention Equation: Why Users Stay (or Abandon) Claude Apps

Acquisition creates momentum, and retention determines growth. On Android globally in 2025, Day 1 retention of around 21 percent drops to around 2 percent by Day 30, illustrating how quickly user engagement can decline in scaled AI app development.
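Day N retention is usually computed per install cohort: the share of users who were active exactly N days after installing. A minimal sketch, assuming you have install dates and per-user activity dates available as plain dictionaries (the function and parameter names are hypothetical):

```python
from datetime import date, timedelta


def day_n_retention(installs: dict[str, date],
                    active_days: dict[str, set[date]],
                    n: int) -> float:
    """Share of an install cohort that was active exactly n days after install."""
    if not installs:
        return 0.0
    retained = sum(
        1
        for user, installed_on in installs.items()
        if installed_on + timedelta(days=n) in active_days.get(user, set())
    )
    return retained / len(installs)
```

Tracking this figure per feature or per acquisition channel, rather than only in aggregate, makes it easier to see which parts of the product build lasting habits.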

Retention Killers

- Novelty cliff, where users try impressive features once, feel the initial spark, then return to familiar tools because daily utility was never embedded into their workflow.

- Inconsistency fatigue, where variable outputs gradually reduce confidence, making even strong results feel unreliable over repeated sessions.

- Value ceiling, where users assume they have seen the app’s limits and disengage before discovering deeper capabilities or advanced use cases.

Retention Drivers

- Trigger-based habits tied to real workflows, such as scheduled prompts or contextual nudges aligned with weekly reporting or planning cycles.

- Progressive feature discovery that introduces advanced capabilities only after users demonstrate comfort with core functionality.

- Personal context accumulation, including saved preferences, prior conversations, and structured projects that increase switching costs organically.

- Shareable business outputs, where high-quality outputs encourage users to distribute results, reinforcing value through visibility and peer validation.

Behavioral Reinforcement

- Use variable rewards, where occasional standout results create positive reinforcement without overwhelming expectations.

- Display visible progress indicators that reflect improved outputs or efficiency over time.

- Track failure patterns systematically so each session benefits from previous corrections and refinements.

For a deeper understanding of how structured inputs and memory design improve reliability, explore context engineering for LLMs and scalable AI agents.

Monetization Models That Match User Value Perception

Claude apps built through generative AI app development carry a different cost structure than traditional SaaS products. Compute varies by task, user behavior fluctuates, and value often feels intangible unless framed properly. Pricing should reflect how users experience outcomes.

Usage Aligned Tiers

Usage-based tiers work when they align model access with real value. A free tier powered by Haiku can support lightweight experimentation, while paid plans unlock Sonnet or Opus for deeper reasoning within advanced AI-powered applications. This structure connects higher capability with visible benefit, making upgrades feel earned rather than forced. Clear usage dashboards also reduce billing anxiety and improve trust.

Outcome Focused Pricing

Charging for completed results, such as reports generated or documents analyzed, resonates more clearly than per query billing. Users understand deliverables, but they rarely understand token counts. When pricing reflects tangible output, value perception stabilizes, and purchasing decisions accelerate.

Hybrid and Enterprise Models

Hybrid pricing combines a predictable subscription with overage for compute-intensive workflows, offering both stability and flexibility. For enterprise deployments, site licenses often outperform per-seat pricing because Claude usage varies widely across teams. Anchoring pricing to business outcomes, supported by transparent compute credits, strengthens credibility and long-term retention in enterprise AI product development.
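The arithmetic behind a hybrid plan is straightforward: a fixed fee covers an allowance of compute credits, and only usage above the allowance is billed. The sketch below uses made-up parameter names and `Decimal` to avoid floating-point rounding in currency amounts.

```python
from decimal import Decimal


def monthly_invoice(base_fee: Decimal, included_credits: int,
                    used_credits: int, overage_rate: Decimal) -> Decimal:
    """Subscription fee plus a per-credit charge for usage above the allowance."""
    overage_credits = max(0, used_credits - included_credits)
    return base_fee + overage_credits * overage_rate
```

For example, with an illustrative base fee of 99, 1,000 included credits, 1,250 credits used, and an overage rate of 0.05 per credit, the invoice is 99 + 250 × 0.05 = 111.50.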

End-to-end digital product engineering ensures pricing, architecture, and performance evolve together as the product scales.

Building Claude Apps Users Actually Keep Using

Most Claude apps fail because they are only built to demonstrate intelligence, especially during early MVP development. Real users operate inside deadlines, compliance rules, budget scrutiny, and internal politics. If your app does not perform reliably within those constraints, its intelligence becomes irrelevant.

Treat AI output as probabilistic infrastructure. That mindset changes how you design reviews, approvals, logging, and accountability. It also changes procurement conversations, because enterprises evaluate auditability and risk exposure as seriously as feature depth in scalable generative AI applications.

Invest early in usage analytics that connect model behavior to business metrics. Which outputs drive downstream action? Where does hesitation spike? What patterns correlate with churn? These signals often reveal more than qualitative feedback and are critical in LLM application development.

Claude apps that accumulate context across teams, preserve decision trails, and improve predictability over time become embedded in operations through a strong, scalable AI architecture. When a product reduces cognitive load while maintaining traceability, it earns longevity. That is where sustainable advantage is created.

To reduce early-stage risk and validate assumptions systematically, structured MVP development services help transform prototypes into market-ready foundations.

Key Takeaways

- Claude MVP speed means little without validated user demand and a clearly defined job embedded in real workflows, especially in AI startup MVP development.

- Trust architecture, including transparency, control, consistency, and recovery paths, directly influences adoption more than surface-level feature depth in AI-powered applications.

- Production scale introduces latency variability, token cost volatility, and output drift that require deliberate architectural planning within generative AI app development.

- Behavioral retention systems, such as progressive value discovery and context accumulation, determine whether users return consistently as products scale from prototype to production.

- Monetization must align pricing with perceived outcomes and compute intensity rather than abstract token consumption in AI app development.

- Enterprises evaluate reliability, auditability, and operational predictability alongside model intelligence before committing long-term to production-ready AI applications.

Frequently Asked Questions

How do enterprises evaluate risk in Claude-powered applications?

Enterprises look beyond feature capability and assess audit trails, data handling policies, model predictability, and escalation paths when outputs fail. Security reviews often examine logging depth, prompt storage, access controls, and vendor dependency exposure. Without operational transparency, procurement cycles slow dramatically.

What metrics actually predict long-term retention for AI apps?

Surface-level engagement metrics rarely tell the full story. Teams should monitor repeat task completion rates, confidence feedback signals, time to accepted output, and downstream action triggers. When AI output consistently drives measurable business decisions, retention curves stabilize.

How should teams handle model updates without disrupting users?

Model upgrades can subtly alter tone, reasoning depth, and output consistency. Mature teams run parallel testing, compare behavioral baselines, and communicate changes proactively. Silent updates often create confusion, especially in regulated environments where output variance carries risk.

When does fine-tuning become necessary for Claude apps?

Fine-tuning becomes relevant when domain specificity, terminology precision, or structured output requirements exceed what prompt engineering can reliably deliver. However, teams should exhaust workflow design and context optimization first, since many performance gaps stem from poor task framing.

How can AI fatigue be reduced in professional environments?

AI fatigue often arises from overexposure and unclear value. Integrating Claude into defined workflow checkpoints, rather than constant assistance, reduces cognitive overload. Clear expectations about when the system engages help users maintain control and focus.

February 26, 2026
